Why Your Discount Policy Lives in a VP's Head and a 2019 Deck

Most discount leakage is not a willpower failure. It is a policy that was never written down, then asked to scale. This paper walks through what to codify before an AI agent touches a single deal, using a composite industrial-IoT case to show the sequence.

The AI Discount Governance Agent: Codifying Policy Before You Automate It

Most discount leakage is a policy problem wearing a behavior costume. Reps are not the villains. The policy never existed in a form anyone could enforce, and now a faster team is asked to run it through an AI agent. This paper is about the work that happens before the agent ships.

TL;DR

- Codify the discount policy in plain language before any agent touches a deal. Four surfaces, not one: hardware tier, recurring tier, distributor override, multi-year.

- Treat the agent as an enforcement and scoring layer, not a policy author. Humans own the bands. The agent owns the gates.

- Design at least one escalation lane per commercial archetype you serve. Direct, channel, and strategic deals should not route the same way.

- Expect false positives. Budget for appeals. A governance surface that never says no to itself is not a governance surface.

- Run a three-person council on a weekly-to-monthly cadence. Keep the agenda narrow: exceptions, appeals, false positives, policy drift.

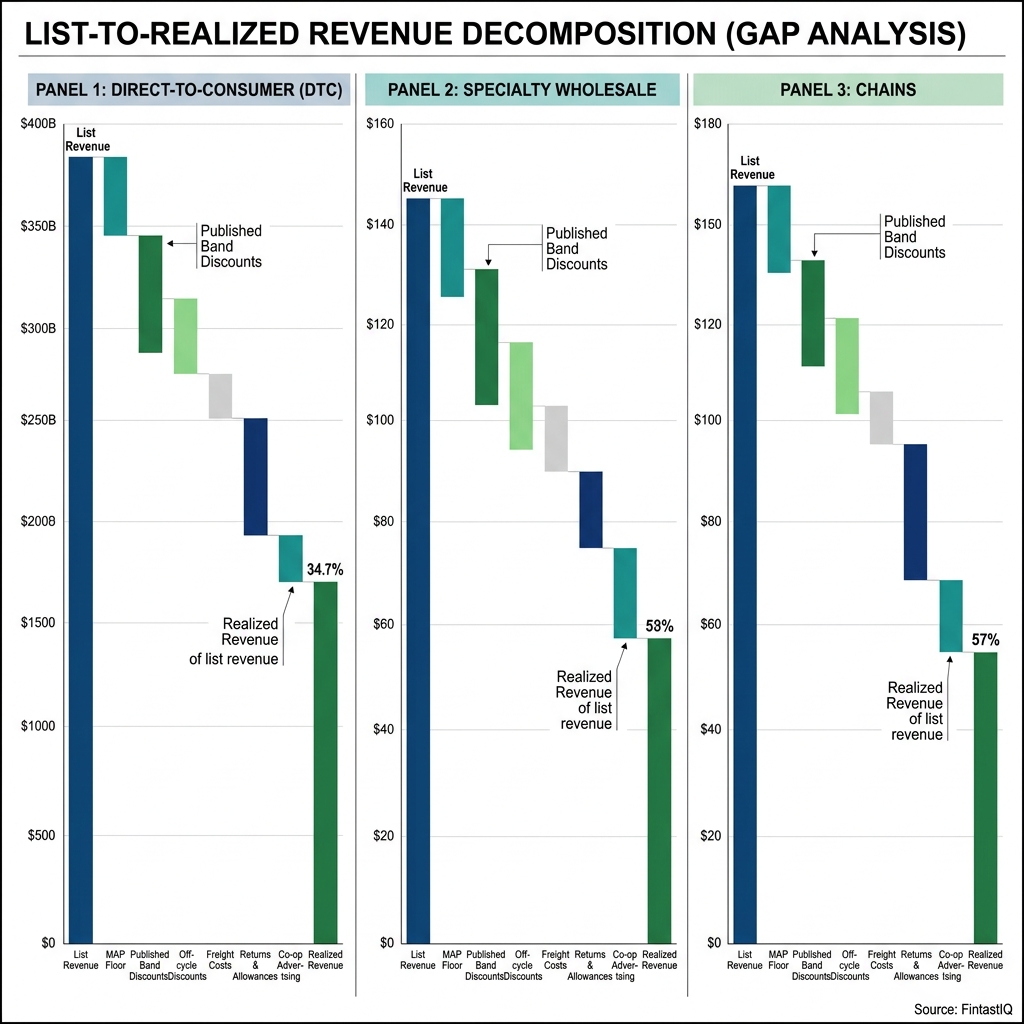

- Measure pocket margin, not headline margin. Discount governance that ignores freight, install, and allowance credits is theatrical.

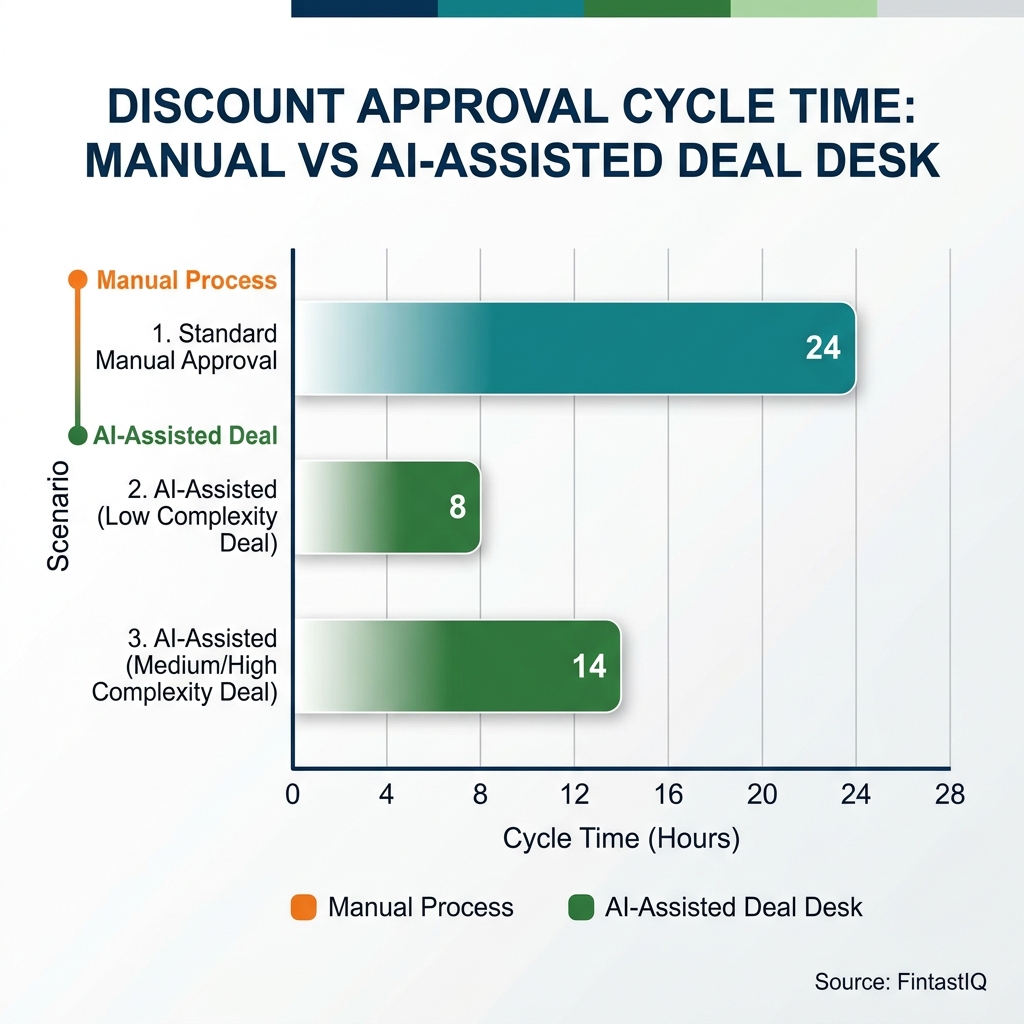

Exhibit: Discount approval cycle time

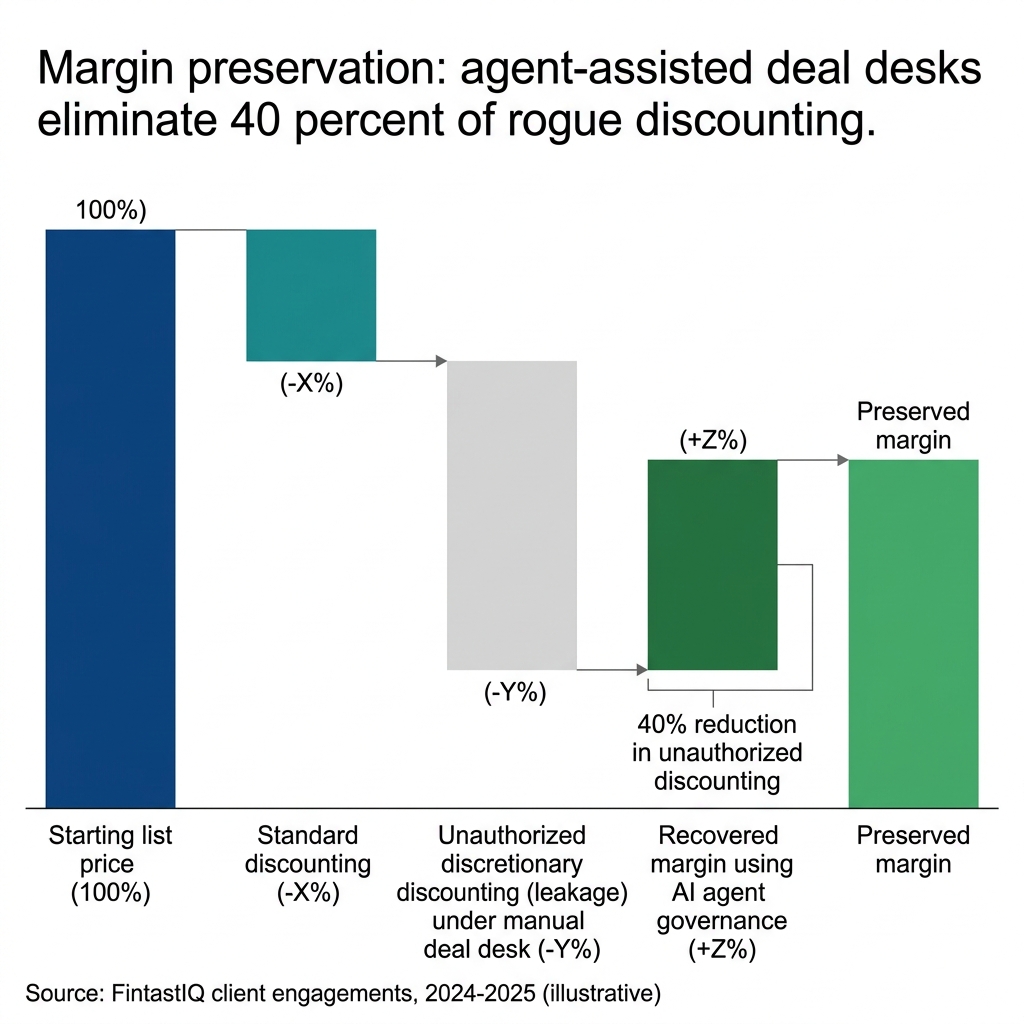

Exhibit: Margin leakage reduction via AI governance

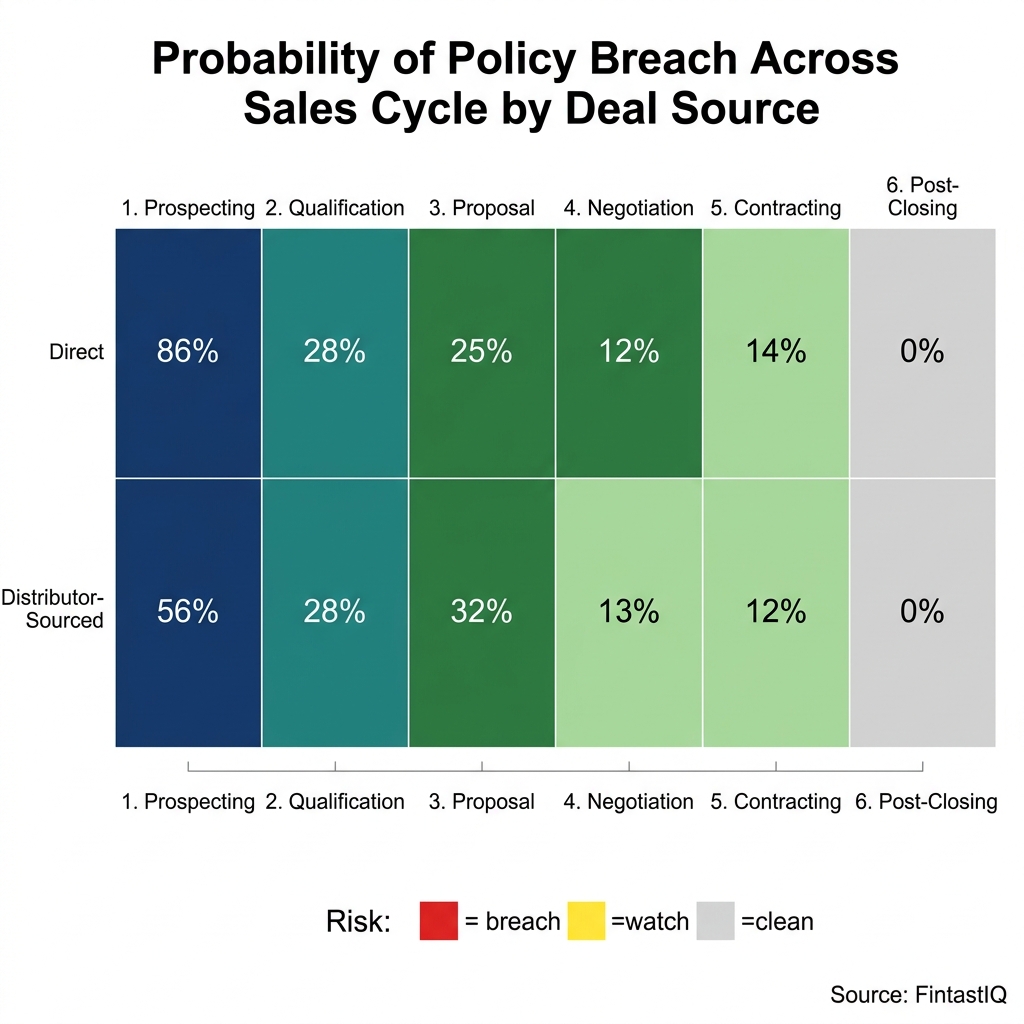

Exhibit: Discount-signal heatmap by deal stage

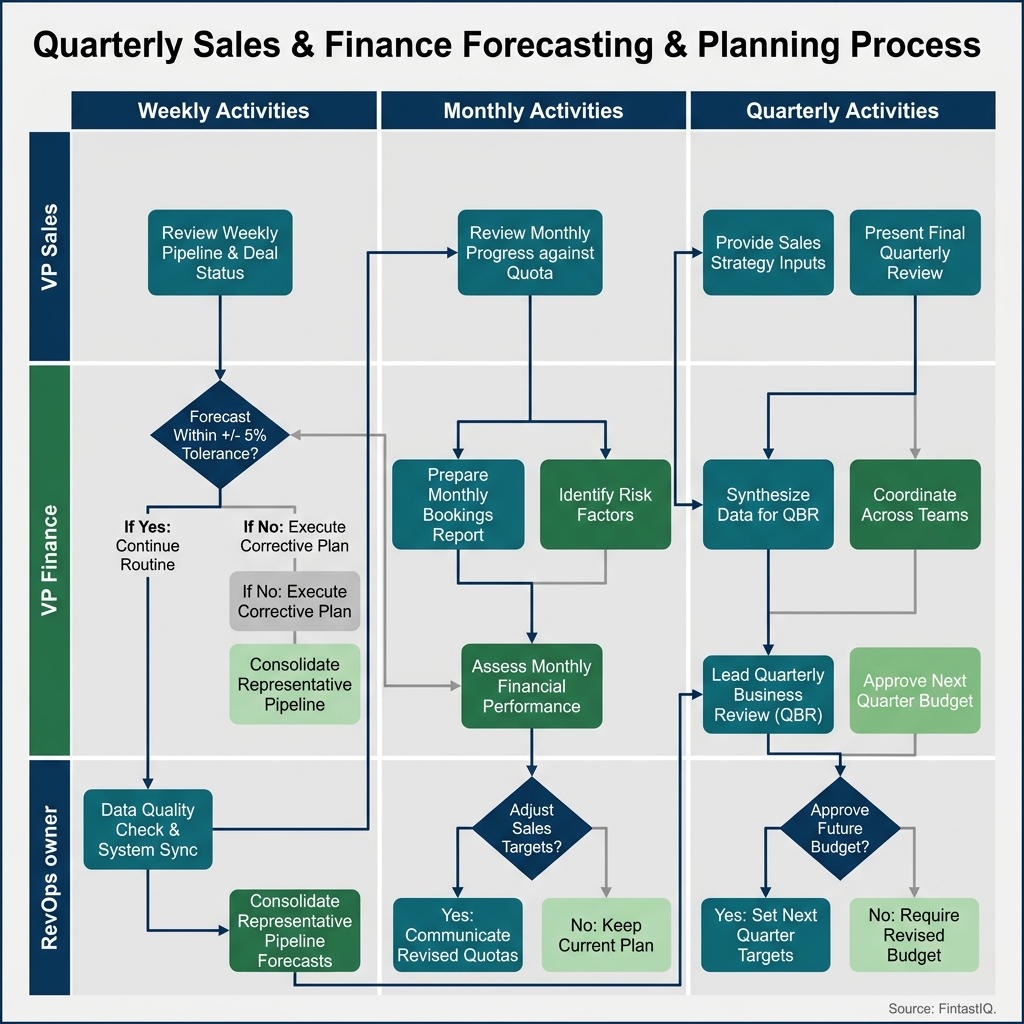

Exhibit: Governance-rhythm swim lane

Exhibit: Discount-leakage waterfall by channel

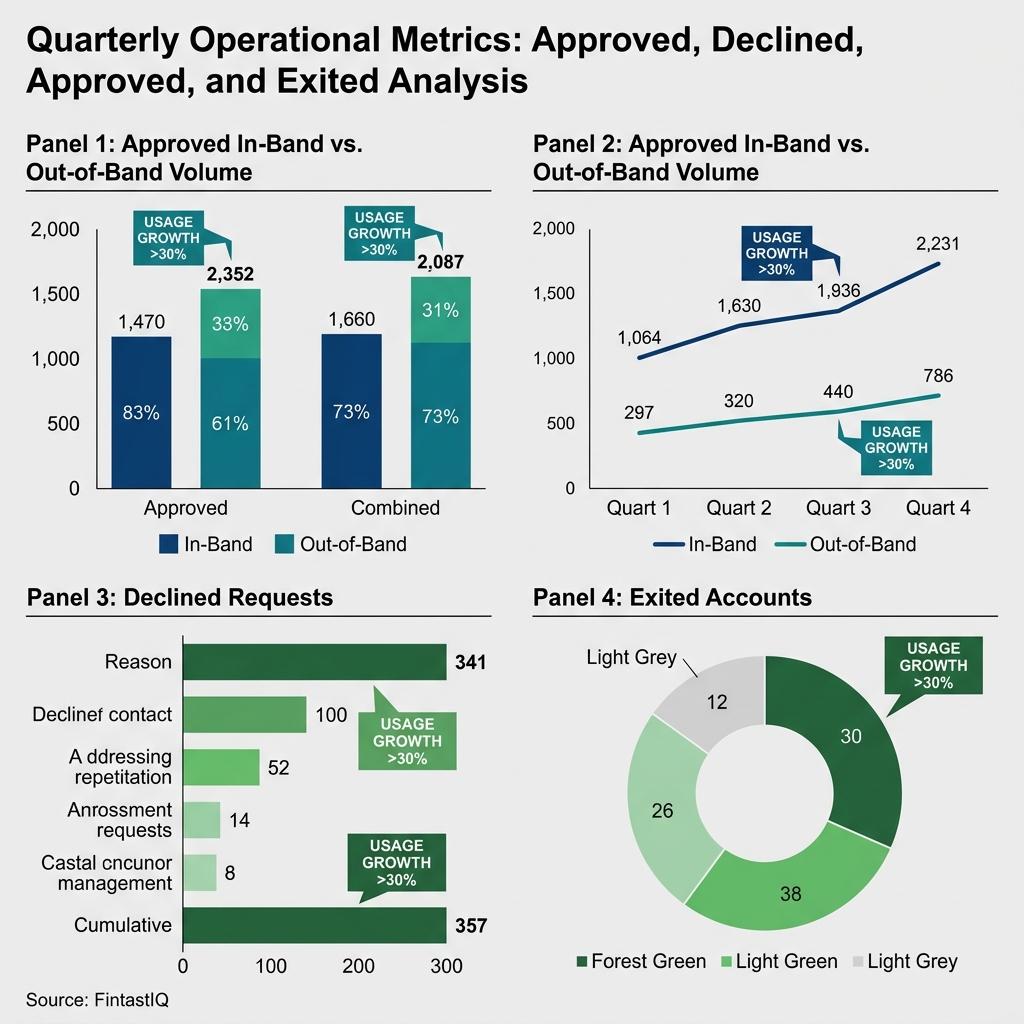

Exhibit: Band-enforcement scorecard, quarterly

The core problem, with a name on it

Ironwake Instruments is a 130-person industrial IoT company running at about $56M in revenue, with a 44/56 split between sensor hardware and recurring telemetry services. They have 6,200 deployed sensors across 340 industrial sites, three regional distributors, and a direct-sales motion that chases deals in the $18K-$480K range on a 14-month median sales cycle. On paper, they are a textbook case for disciplined commercial execution. In practice, before they codified their discount policy, 41% of their deals closed outside approved discount bands, 18% of hardware pocket margin was leaking into freight credits and install allowances, and finance estimated $3.8M of revenue lift was lost to rogue concessions over a single fiscal year.

None of that was a failure of intent. The VP Sales had a view on which customers deserved flexibility. The VP Finance had a view on which margins were non-negotiable. Priya, the head of RevOps, had a view on which distributors were gaming the override process. These views did not match, and had never been asked to match in writing, because the team had always closed enough deals to make the question feel academic. Pricing is a signal before it is a number, and Ironwake's signal had quietly become: bring us a plausible reason and we will move.

The temptation, once leadership saw the $3.8M figure, was to buy an AI agent and point it at the pipeline. Wrong sequence. An agent trained on the last two years of Ironwake's deals would have learned the leakage as policy. The rogue concessions were not outliers. They were the median. Discounting is usually a symptom, and the symptom here was a policy that existed in three heads and zero documents. The first six weeks were not about AI. They were about writing the policy down in a form a human could enforce, so an agent could later enforce it at scale.

(a) Policy codification before AI

Ironwake's team produced four discount-policy codifications before the agent was scoped. Each one was a short document, no longer than two pages, written so a new rep could read it on their first day and know what they could and could not offer without approval.

The hardware tier codification named four sensor SKU families and set approved discount bands by volume and contract length, with pocket-margin floors that included freight, install credits, and spare-parts allowances as concession categories. Before this document existed, a rep could offer a 12% list discount, then layer a 4% freight credit and a 6% install allowance, booking a 12% on-paper discount but a 22% pocket concession. The codification closed that loophole by defining the concession envelope, not the line-item discount alone.
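The concession-envelope idea can be sketched in a few lines. This is a minimal illustration, not Ironwake's implementation: the `HardwareQuote` fields, the simple additive treatment of each category as a percentage of list, and the 15% envelope are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HardwareQuote:
    """Hypothetical quote shape; every field is a percentage of list price."""
    list_discount_pct: float
    freight_credit_pct: float = 0.0
    install_allowance_pct: float = 0.0
    spare_parts_allowance_pct: float = 0.0


def pocket_concession_pct(quote: HardwareQuote) -> float:
    """Sum every concession category, not just the line-item discount.

    Assumption: categories are additive percentages of list.
    """
    return (
        quote.list_discount_pct
        + quote.freight_credit_pct
        + quote.install_allowance_pct
        + quote.spare_parts_allowance_pct
    )


def within_envelope(quote: HardwareQuote, max_concession_pct: float) -> bool:
    """True when the total pocket concession stays inside the approved envelope."""
    return pocket_concession_pct(quote) <= max_concession_pct


# Illustrative numbers only: a 10% headline discount that looks in-band
# until the freight credit and install allowance are added.
quote = HardwareQuote(list_discount_pct=10.0,
                      freight_credit_pct=3.0,
                      install_allowance_pct=5.0)
print(pocket_concession_pct(quote))                     # 18.0
print(within_envelope(quote, max_concession_pct=15.0))  # False
```

The design choice worth noting is that the check takes the whole quote rather than the headline discount, so a rep cannot stay in band on paper while stacking credits outside it.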

The recurring tier codification covered telemetry subscriptions and named the ramp shapes the company was willing to underwrite. Year-one discounts were permitted against a multi-year commit with a mechanical step-up. Standalone year-one discounts were not. Until it was written down, the distinction was invisible to reps who had learned to close by bending year one and promising the difference would come back later.
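The recurring-tier rule is simple enough to express as a check. The sketch below is an assumption-laden reading of it: "multi-year commit" is taken to mean a term of at least two years, and "mechanical step-up" is modeled as each year's price being strictly higher than the previous year's. The function name and inputs are hypothetical.

```python
def year_one_discount_allowed(term_years: int, annual_prices: list[float]) -> bool:
    """Permit a year-one discount only inside a multi-year commit with a step-up.

    Assumptions: multi-year means term_years >= 2, and a step-up means
    every year is priced strictly above the year before it.
    """
    if term_years < 2 or len(annual_prices) != term_years:
        return False
    return all(
        later > earlier
        for earlier, later in zip(annual_prices, annual_prices[1:])
    )


print(year_one_discount_allowed(3, [80.0, 90.0, 100.0]))  # True: stepped ramp
print(year_one_discount_allowed(1, [80.0]))               # False: standalone year one
print(year_one_discount_allowed(3, [80.0, 80.0, 100.0]))  # False: no step-up in year two
```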

The distributor override codification was the most contested document and the most valuable. It created a separate band for deals sourced through the three regional distributors, acknowledging that distributors carried inventory risk and competed against regional players Ironwake never saw directly. It also created a named approver and a 48-hour response SLA, so distributors knew exactly where overrides went and how fast they moved.

The multi-year codification addressed the compounding problem. A three-year deal with a year-one discount, a multi-year commit rebate, and a services credit could, under the old regime, accumulate concessions that nobody individually approved but everyone individually signed. The codification required the fully loaded concession value across all years to be surfaced as a single number before any approval was granted. Packaging beats pricing, and this document forced the team to see the package.
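One way to surface the fully loaded number is to total every concession across the contract term and express it against total list value. The sketch below assumes dollar inputs per year and treats the rebate and services credit as one-time amounts; the function name and the figures are illustrative, not drawn from Ironwake's deals.

```python
def fully_loaded_concession(list_value_by_year, discount_by_year,
                            commit_rebate=0, services_credit=0):
    """Return (total concession in dollars, concession as a share of total list).

    Assumption: discounts are per-year dollar amounts; the commit rebate and
    services credit are one-time dollar amounts over the whole term.
    """
    if len(list_value_by_year) != len(discount_by_year):
        raise ValueError("need one discount figure per contract year")
    total_list = sum(list_value_by_year)
    total_concession = sum(discount_by_year) + commit_rebate + services_credit
    return total_concession, total_concession / total_list


# Hypothetical three-year deal: each concession looks modest on its own.
total, share = fully_loaded_concession(
    list_value_by_year=[100_000, 100_000, 100_000],
    discount_by_year=[15_000, 0, 0],
    commit_rebate=9_000,
    services_credit=6_000,
)
print(total, share)  # 30000 0.1
```

Returning one dollar figure and one ratio keeps the approval conversation on the package rather than on any single line.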

None of these four documents were written by the AI agent. They were written by humans, reviewed by the council, and published to the sales team before the agent existed. The agent's job, once it arrived, was to read a deal, classify it against the four surfaces, and enforce what was already written.

(b) Signal detection and scoring

With the policy codified, Ironwake's agent was scoped to do one job well before it did anything ambitious: score every deal in the pipeline against the four policy surfaces and flag the ones that breached. The scoring ran on structured deal data from the CRM and quote data from CPQ, and it produced three outputs for every deal: a classification (hardware, recurring, distributor, multi-year, or a blend), a concession score that included pocket-margin categories, and a confidence score for the classification itself.

The confidence score mattered more than the concession score. A deal that the agent classified as a direct hardware deal with 0.94 confidence could be routed against the hardware band automatically. A deal classified as a distributor-blend at 0.61 confidence was flagged for human classification before any band was applied. Confusion is the enemy of willingness to pay, and an agent that hides its uncertainty inherits that confusion and passes it downstream as false precision.
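The routing rule described above reduces to a threshold check. The source gives two data points (0.94 routed automatically, 0.61 sent to a human) but not the cutoff, so the 0.90 threshold below is an assumption, as are the function name and the return labels.

```python
AUTO_ROUTE_THRESHOLD = 0.90  # assumed cutoff; the source does not state one


def route_classification(label: str, confidence: float,
                         threshold: float = AUTO_ROUTE_THRESHOLD) -> str:
    """Apply the band automatically only when the classification is confident."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= threshold:
        return f"apply_band:{label}"
    # Below the threshold, no band is applied until a human classifies the deal.
    return "human_classification"


print(route_classification("direct_hardware", 0.94))    # apply_band:direct_hardware
print(route_classification("distributor_blend", 0.61))  # human_classification
```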

The signal layer also watched for patterns across deals. Three reps quoting 14% freight credits in the same week on unrelated deals is a signal that a competitive dynamic has shifted, not that three reps independently got generous. The agent surfaced those clusters weekly with no recommendation attached. The council decided whether the pattern deserved a policy change, a competitive analysis, or nothing at all.
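A cluster check of this kind can be sketched with standard-library grouping. The version below groups quotes by ISO week and reports any week in which at least three distinct reps quoted a freight credit at or above a cutoff. The tuple layout, the 14% cutoff, and the three-rep minimum mirror the example in the text but are otherwise assumptions, and the rep names and dates are invented.

```python
from collections import defaultdict
from datetime import date


def freight_credit_clusters(quotes, min_credit_pct=14.0, min_reps=3):
    """Find ISO weeks where several distinct reps quoted high freight credits.

    quotes: iterable of (rep, quote_date, freight_credit_pct) tuples.
    Returns {(iso_year, iso_week): sorted list of reps}. It only surfaces
    the pattern; deciding what it means is left to the council.
    """
    reps_by_week = defaultdict(set)
    for rep, quote_date, credit_pct in quotes:
        if credit_pct >= min_credit_pct:
            iso = quote_date.isocalendar()
            reps_by_week[(iso[0], iso[1])].add(rep)
    return {
        week: sorted(reps)
        for week, reps in reps_by_week.items()
        if len(reps) >= min_reps
    }


quotes = [
    ("ana", date(2024, 3, 4), 14.0),
    ("ben", date(2024, 3, 5), 14.0),
    ("cy", date(2024, 3, 7), 14.0),
    ("ana", date(2024, 3, 12), 14.0),  # following week, only one rep
    ("dee", date(2024, 3, 6), 4.0),    # same week, below the cutoff
]
print(freight_credit_clusters(quotes))  # {(2024, 10): ['ana', 'ben', 'cy']}
```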

Pocket-margin scoring was the hardest part to get right. The first version treated freight credits, install allowances, and spare-parts allowances as fixed percentages of list. After two weeks of production data, the team learned that install allowances varied by site complexity, and that treating them as fixed created systematic false positives on large multi-site deals. Priya and the council rewrote the install-allowance section of the hardware codification, tightened the agent's scoring rules, and accepted that the policy itself had been wrong, not the agent.

(c) Approval gates and escalation lanes

Ironwake's agent enforced seven approval gates, each tied to a specific policy surface and a named human approver. The gates were designed to cover the concession surface, not the dollar surface. A $22K deal with a 31% pocket concession routed to finance. A $410K deal with a 6% pocket concession routed for notification only. Deal size was a secondary signal. Concession depth was primary.

The seven gates covered: in-band hardware (auto-approve with logging), out-of-band hardware within 5 points (VP Sales), out-of-band hardware beyond 5 points (VP Sales plus VP Finance), in-band recurring (auto-approve), multi-year concession stacking beyond a defined envelope (council), distributor override within the distributor band (named channel approver, 48-hour SLA), and distributor override outside the distributor band (council).
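The seven gates can be read as a small routing table keyed on policy surface and concession depth. The sketch below encodes only what the list above states. The surface names, return labels, and the choice to treat "within 5 points" as inclusive are assumptions, and cases the text does not cover (such as an out-of-band recurring deal) raise an error rather than guess at a gate.

```python
def route_gate(surface: str, points_outside_band: float = 0.0,
               stacking_beyond_envelope: bool = False) -> str:
    """Map a deal to one of the seven gates by surface and concession depth.

    points_outside_band: 0 or less means in band; positive values are
    percentage points beyond the approved band for that surface.
    """
    if stacking_beyond_envelope:
        return "council"  # multi-year concession stacking beyond the envelope
    in_band = points_outside_band <= 0
    if surface == "hardware":
        if in_band:
            return "auto_approve_with_logging"
        if points_outside_band <= 5:  # assumption: 5 points is inclusive
            return "vp_sales"
        return "vp_sales_and_vp_finance"
    if surface == "recurring":
        if in_band:
            return "auto_approve"
        raise ValueError("no gate is described for out-of-band recurring deals")
    if surface == "distributor":
        # In band here means inside the separate distributor band.
        return "channel_approver_48h_sla" if in_band else "council"
    raise ValueError(f"unknown policy surface: {surface}")


print(route_gate("hardware", points_outside_band=3))     # vp_sales
print(route_gate("hardware", points_outside_band=8))     # vp_sales_and_vp_finance
print(route_gate("distributor", points_outside_band=2))  # council
```

Deal size does not appear in the signature, which reflects the point made above: concession depth is the primary routing signal.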

The distributor lane was the gate that saved the rollout. In quarter two, the agent produced six false-positive blocks on strategic deals sourced through the largest distributor. These were deals the council would have approved if asked, but the agent had no lane to ask. The distributor-escalation lane was added as a formal eighth gate and given its own SLA. The false-positive rate fell. The best operators compete on discipline, not instinct, and discipline includes designing the lane before the deal needs it, not after.

Every gate had three properties: a named human, a written SLA, and a documented appeal path. Deals that cleared a gate were logged. Deals that were rejected and appealed were logged with the appeal outcome. Three rejected exceptions were appealed successfully in the first 90 days, which the council treated as a positive signal. The policy had real edges, and reps were willing to test them through a formal process rather than work around them through an informal one.

(d) Governance rhythm and council design

The council was three people: VP Sales, VP Finance, and Priya. No rotating seats. No deputies. No proxies. The three of them met weekly for the first quarter, biweekly for the second, and settled into a monthly cadence by month six. The agenda never changed: appealed exceptions, false positives, policy-drift signals from the agent, and one policy surface per meeting reviewed against the prior month's data.

Governance decays. A policy written in January and enforced by an agent in February is a policy under pressure by April, when the first quarter-end push meets the first strategic deal that falls 80 basis points outside the band. The council's job was to notice the pressure, decide whether the policy needed to change or the deal needed to be declined, and produce a written answer. Pricing maturity is measured by what you stop doing, and the council's most valuable output was the list of exceptions it stopped granting after the second time.

Priya owned the agenda, the minutes, and direct access to the agent's logs, so she could pre-flag patterns and walk in with a short list rather than a long debate. The VP Sales argued for deal velocity. The VP Finance argued for margin integrity. Priya argued for policy coherence. The three-way tension was the point. A council with two aligned members and one outlier becomes a rubber stamp. Three distinct interests produce policy that survives contact with the pipeline.

Three failure modes to avoid

Agent-as-policy-author. The most common failure is letting the agent infer policy from historical deals rather than enforce policy humans wrote. This looks like progress because the model fits the data. It is regress because the data encodes the leakage. If your first question to a vendor is "can the agent learn our discount policy from CRM history," stop. The right first question is "can the agent enforce a policy we hand it in plain language, and tell us when we hand it something contradictory."

Block-everything-trap. The second failure is configuring the agent so conservatively that every deal with any signal of risk routes for approval. This produces clean metrics for two weeks, a revolt from sales in week three, and a quiet program-wide override in week four. The cure is to calibrate the auto-approve band generously for in-policy deals and reserve human review for genuine concession depth. Most deals should never see a human approver. The ones that do should deserve the attention.

Exception-lane-atrophy. The third failure is slower and harder to see. Appeal paths exist on paper but nobody appeals, because the first three appellants were treated as troublemakers or the council took four weeks to turn an appeal around. Over time, reps stop bringing you the deals that test the policy, and you lose the signal you need to know whether the policy is still right. Measure appeal volume monthly. If it drops to zero, something is wrong with the appeal process, not with the deals.
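The monthly appeal-volume check is easy to automate from an appeal log. This sketch assumes each appeal is recorded with a `YYYY-MM` month string; the function name and sample data are hypothetical.

```python
from collections import Counter


def months_without_appeals(appeal_months, months_in_scope):
    """Return the months in scope that recorded zero appeals.

    appeal_months: one 'YYYY-MM' string per logged appeal.
    months_in_scope: the months the council wants to review, in order.
    """
    counts = Counter(appeal_months)
    return [month for month in months_in_scope if counts[month] == 0]


appeals = ["2024-01", "2024-01", "2024-02"]
print(months_without_appeals(appeals, ["2024-01", "2024-02", "2024-03"]))
# ['2024-03']
```

A silent month is a prompt to inspect the appeal process, not evidence that the deals were clean.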

30-60-90 day sprint

Days 1-30. Write the four policy codifications. Do not touch the agent. Stand up the three-person council. Hold two dry-run sessions where the council reviews the last 30 days of closed deals against the new policy and records which ones would have routed where. Publish the policies to sales with a one-page summary and a 30-minute walkthrough. Announce the council, the SLAs, and the appeal path.

Days 31-60. Scope and deploy the agent as a scoring and classification layer only. No gates yet. Run it in shadow mode against the live pipeline, so the agent's classifications and scores are logged but no deals are routed or blocked. The council reviews the shadow output weekly. Fix the pocket-margin scoring. Fix the classification confidence thresholds. Tune until the shadow output matches what the council would have done by hand at least 85% of the time.
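The 85% bar can be measured directly from the shadow log. The sketch below assumes two mappings from deal ID to routing decision, one logged by the agent and one recorded by the council, and compares only the deals present in both. The names and the toy data are illustrative.

```python
READY_THRESHOLD = 0.85  # the agreement bar named in the sprint plan


def shadow_agreement_rate(agent_routes: dict, council_routes: dict) -> float:
    """Share of jointly reviewed deals where agent and council agree."""
    shared = agent_routes.keys() & council_routes.keys()
    if not shared:
        raise ValueError("no deals were reviewed by both agent and council")
    matches = sum(agent_routes[deal] == council_routes[deal] for deal in shared)
    return matches / len(shared)


# Toy data: 20 deals, the agent disagrees with the council on 3 of them.
council = {f"deal-{i}": "auto_approve" for i in range(20)}
agent = dict(council)
for deal_id in ("deal-0", "deal-1", "deal-2"):
    agent[deal_id] = "vp_sales"

rate = shadow_agreement_rate(agent, council)
print(rate, rate >= READY_THRESHOLD)  # 0.85 True
```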

Days 61-90. Turn on the seven gates. Add the distributor-escalation lane when the first false-positive cluster appears, which is likely to happen around day 75. Publish the first monthly governance readout: off-band rate, pocket-margin recovery, appeal volume, false-positive rate, and one policy-change recommendation from the council. At Ironwake, the 90-day readout showed a 72% reduction in off-band discounts, 11% pocket-margin recovery on hardware, and three rejected exceptions that were appealed successfully, against an agent-operations cost of about $3,400 per month plus 0.3 FTE in governance maintenance.
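Two of the readout metrics are plain ratios, sketched below with hypothetical function names. The worked figure at the end rests on an assumption the text does not state outright: that the 72% reduction is relative to the 41% of deals that closed off band before codification.

```python
def off_band_rate(in_band_flags) -> float:
    """Share of deals that closed outside the approved band.

    in_band_flags: one boolean per closed deal, True when it closed in band.
    """
    flags = list(in_band_flags)
    if not flags:
        raise ValueError("no closed deals to measure")
    return sum(1 for in_band in flags if not in_band) / len(flags)


def relative_reduction(before: float, after: float) -> float:
    """Reduction in a rate, expressed relative to the starting rate."""
    if before <= 0:
        raise ValueError("baseline rate must be positive")
    return (before - after) / before


print(off_band_rate([True, True, False, True]))  # 0.25
print(relative_reduction(0.5, 0.25))             # 0.5

# Assumption: the 72% reduction applies to the 41% pre-codification baseline.
implied_off_band = round(0.41 * (1 - 0.72), 4)
print(implied_off_band)  # 0.1148, roughly 11.5% of deals still off band

# Agent-operations run rate from the readout, annualized (excludes the 0.3 FTE).
print(3_400 * 12)  # 40800
```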

Frequently asked questions

Why start with policy codification instead of the AI agent? An AI agent inherits whatever policy you hand it. If the rules live in a VP's head, a Slack thread, and a 2019 deck, the agent will encode all three inconsistently and call the result governance. Codification is the cheap work that makes the expensive work honest. Spend four to six weeks writing the discount bands, the exceptions, and the escalation paths in plain language first. The agent becomes an enforcement layer, not a policy author, and your reviewers can audit what it decided.

How is this different from a standard CPQ approval workflow? CPQ enforces rules at quote time. A governance agent does three things CPQ usually does not: it scores deal signals before the quote is built, it learns from appealed exceptions so the policy evolves, and it routes escalations to the right human by deal shape rather than by dollar amount alone. CPQ is the gate. The agent is the inspector, the pattern-watcher, and the feedback loop that keeps the gate calibrated.

What if our sales team revolts against new discount gates? They will, briefly, if the gates are introduced as a trust signal. They usually do not if the gates are introduced as a speed signal. Frame every gate as a promise: cleared requests get a binding answer in hours, not days. Publish the approval SLA. Show reps the appeal path. Most reps are not fighting for bigger discounts; they are fighting for faster decisions. Give them the faster decision and the band-width fight shrinks.

How do we handle distributor or channel overrides? Treat distributor overrides as their own policy surface, not a special case of direct-sales discounting. The economics differ: distributors absorb inventory risk, carry installer relationships, and often price against regional competitors you never see. Codify a distributor band, a distributor-escalation lane, and a named human who owns channel exceptions. The agent should know it is looking at a channel deal and apply the channel policy, not the direct policy with a waiver stapled on.

What's the right cadence for a discount council? Weekly for the first quarter, biweekly once the exception rate stabilizes, monthly once the policy is durable. Three people is the right number: a VP Sales, a VP Finance, and a RevOps owner who holds the agenda. More than three and the meeting becomes political. Fewer and you lose the friction that surfaces bad policy. The council reviews appealed exceptions, false positives, and any deal the agent routed to a human in the prior window.

How do you know the agent is calibrated correctly? Two signals. First, your false-positive rate on strategic deals should be low but not zero. Zero means the agent is rubber-stamping. Second, some rejected exceptions should be successfully appealed. If nothing is ever appealed, reps have stopped trying, which usually means they have stopped bringing you the deals that test the policy. Healthy governance has visible edges. You want the edges to be contested occasionally, not constantly.

Does this work for hardware plus recurring-revenue blends? Yes, and the blend is usually where the leakage hides. Hardware discounts compound through pocket-margin erosion: freight, install credits, spare-parts allowances, and warranty extensions that never appear in the quote line. Recurring-revenue discounts compound through multi-year ramps and renewal carryover. Codify both surfaces separately. The agent should score a blended deal twice, against the hardware policy and the recurring policy, then surface the combined concession value before it routes for approval.

What should we not automate, even with a mature agent? Three things. First, the decision to open a new discount band, which is a packaging question, not a pricing question. Second, the decision to override the agent on a strategic account, which should always require a named human with authority. Third, the decision to retire or reshape the policy itself. An agent that can rewrite its own rules is an agent that has quietly become the policy author. Keep humans on the policy surface. Let the agent own enforcement, scoring, and routing.

About the Author(s)

Emily Ellis is the Founder of FintastIQ and the Growth Operating System. She has 20 years of experience leading pricing, value creation, and commercial transformation initiatives for PE portfolio companies and high-growth businesses, and was previously a leader at McKinsey and BCG.
