From +/-28% to +/-9% Forecast Variance in 90 Days

Pipeline hygiene stops being a spreadsheet exercise the moment you codify the signals that actually predict commit slippage. This paper walks through the seven signals, the weekly rhythm, and the forecast math that follows. The commercial archetype is Harness & Halyard Systems, a maritime navigation hardware and subscription charts business with a dealer network.

The AI Pipeline Hygiene Agent: How Commit-Risk Signals Replace Forecast Theater

Open your commit list. Then open last quarter's landed ARR. If the gap is above ten percent, the list is not a forecast. It is a story. This paper is about replacing the story with a rhythm.

Most revenue orgs we meet have a hygiene problem dressed up as a forecasting problem. Three stage definitions. Two competing CRMs. A commit list nobody audits before Friday afternoon. An agent layered on top of that will not help. Codification has to come first.

TL;DR.

- Codify the signals before you introduce the agent. Seven commit-risk signals. One stage ladder. One definition of champion, next step, and procurement engagement.

- Cap the commit lane at 20 deals. Rank by exposure. Review weekly in a Thursday deal council with the manager, the rep, and the agent's shortlist on the table.

- Replace the Monday forecast-theater call with the Thursday rhythm. Let the agent observe behavior. Let the council decide. Let the rep own the call.

- Measure the work in tightened variance, not in adoption metrics. Harness & Halyard moved from plus or minus 28 percent committed-to-landed variance to plus or minus 9 percent in 90 days. That is the number that matters.

- The best operators compete on discipline, not instinct. This is the operating discipline for pipeline.

The archetype: Harness & Halyard Systems

Harness & Halyard Systems builds maritime navigation hardware and sells subscription electronic charts alongside it. 150 people. $62 million in revenue. 380 commercial fleets on direct contracts. 2,100 yacht customers served through a network of 48 marine dealers. The revenue mix runs 38 percent hardware, 62 percent recurring subscription. Deal sizes range from $8,000 to $240,000 on direct, $2,000 to $40,000 through dealers. Sales cycles are 9 months direct, 45 days dealer-mediated.

Teodor runs revenue. When he stepped into the role, committed-to-landed ARR variance ran plus or minus 28 percent quarterly. Fifty-two percent of deals the team called best case slipped. Three sales managers ran three different stage definitions. The Monday forecast call consumed two hours and produced a number nobody trusted by Tuesday.

The fix was not a new tool. It was codification, a seven-signal rubric, a weekly rhythm, and an agent scoped narrowly to observation. Ninety days later, forecast variance sat at plus or minus 9 percent. Three stage definitions consolidated to one. Four deals pulled from commit with cause, not judgment. Two deals pulled in early from upside. The Monday call was gone.

The archetype matters because the shape of the problem is shared across software, hardware plus subscription, marketplaces, B2B2B distribution, and consumer durables. The motion differs. The hygiene discipline does not.

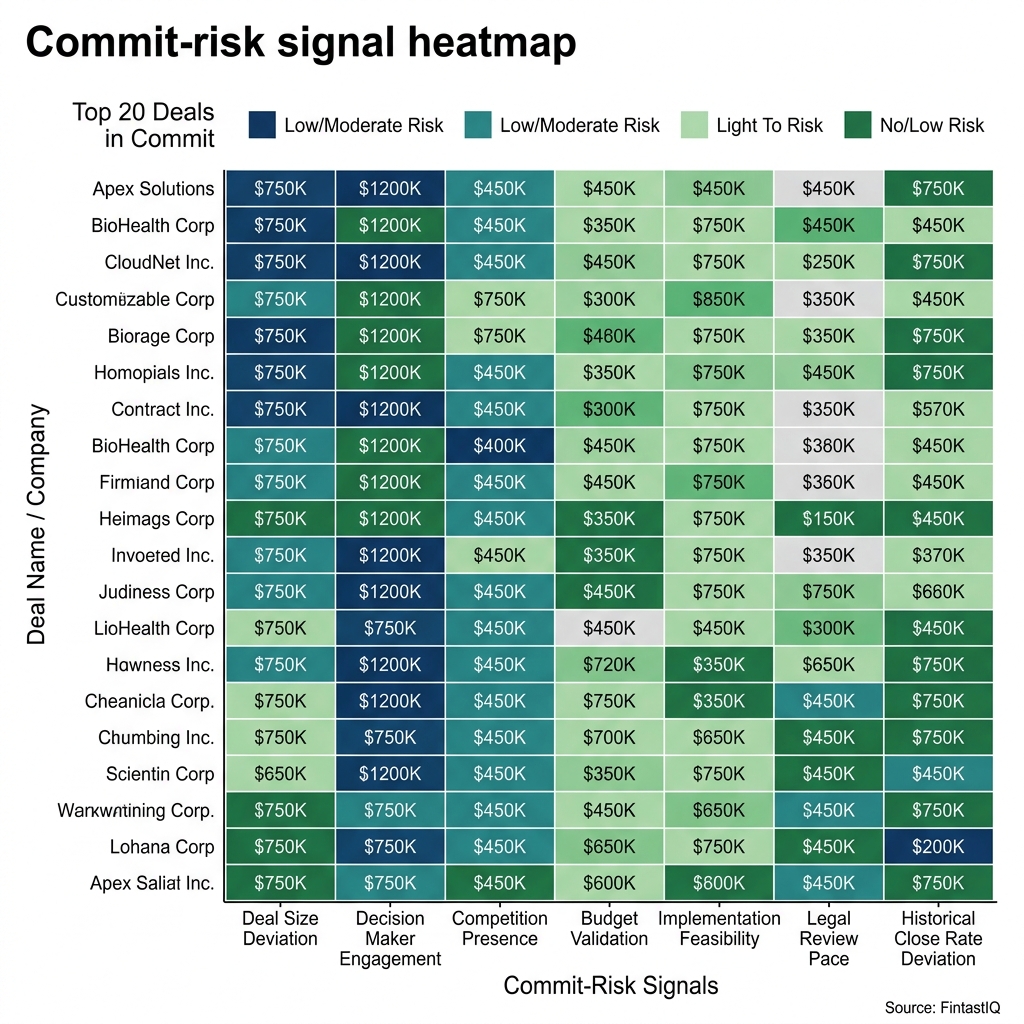

Exhibit: Commit-risk signal heatmap

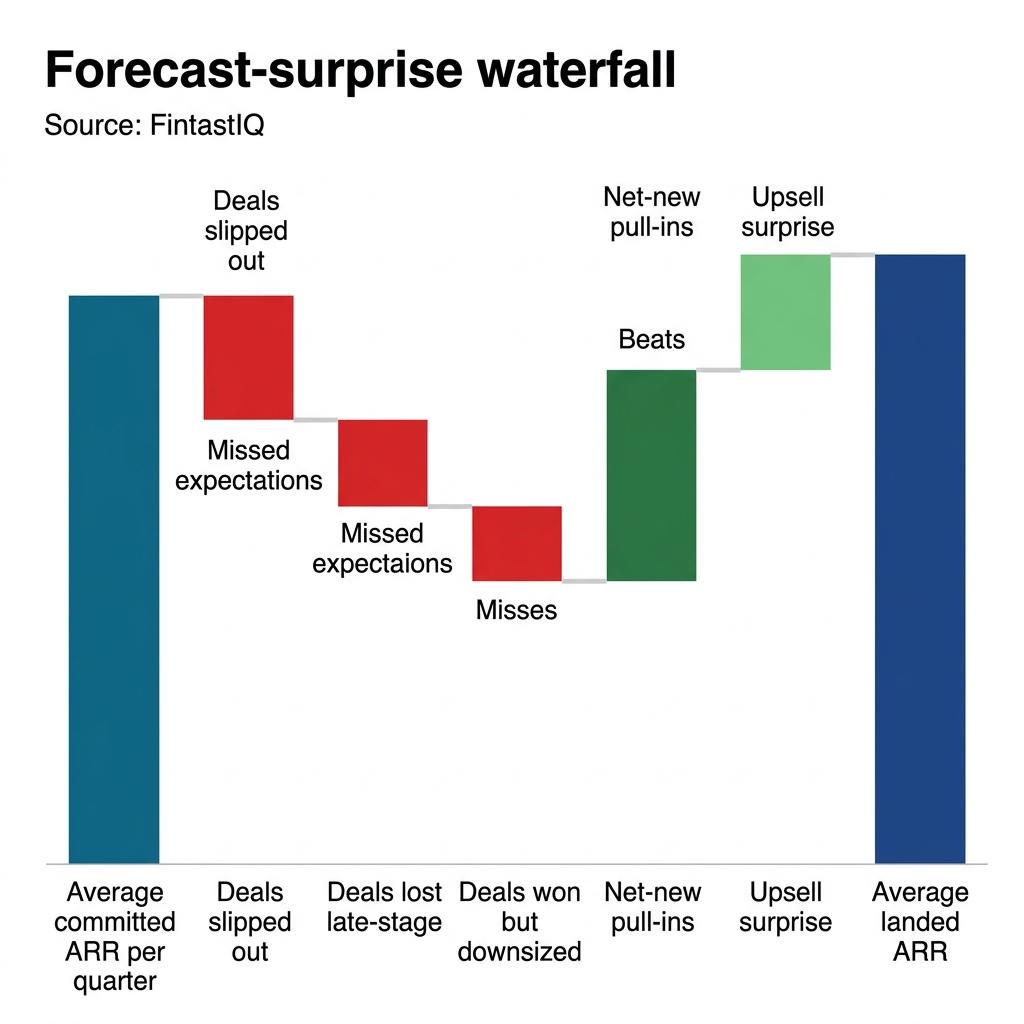

Exhibit: Forecast-surprise waterfall

The four-part framework

Part one: Signal codification before AI

An agent that audits chaos ships chaotic memos. Before any model runs against the pipeline, the team has to agree on what a signal is. Pricing is a signal before it is a number, and pipeline health is the same. The signal comes first. The model comes second.

Teodor started with a half-day working session. Himself, the three sales managers, the revops lead, and two senior reps from the direct and dealer motions. Agenda: one page. What do we mean by stage three. What is a champion. What counts as a next step. What does procurement silence look like in a dealer motion versus a direct motion.

By the end of the session there was one stage ladder, written plainly. Five stages for direct. Four for dealer-mediated. Cross-walked so the forecast math would roll up. One definition of champion: named individual, responded to rep outreach in the last 21 days, mentioned in at least one logged meeting with a buyer from a different function. One definition of next step: a calendar event, owner named, outcome specified. One definition of procurement engagement: named procurement contact, with a logged interaction in the last 14 days when the deal is past stage three.
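The three definitions above are concrete enough to write down as checks. What follows is a minimal sketch, not Harness & Halyard's implementation. The class names, field names, and function names are assumptions made for illustration. Only the 21-day and 14-day windows and the stage-three condition come from the text.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Champion:
    name: str
    last_response: Optional[date]        # last reply to rep outreach, if any
    cross_function_meeting_logged: bool  # named in a logged meeting with a buyer from another function


@dataclass
class NextStep:
    calendar_event_id: Optional[str]
    owner: Optional[str]
    outcome: Optional[str]


def is_champion(c: Champion, today: date, window_days: int = 21) -> bool:
    """Named individual, responded within the window, seen in a cross-function meeting."""
    if not c.name or c.last_response is None:
        return False
    responded_recently = (today - c.last_response).days <= window_days
    return responded_recently and c.cross_function_meeting_logged


def is_next_step(s: NextStep) -> bool:
    """A calendar event with a named owner and a specified outcome."""
    return all([s.calendar_event_id, s.owner, s.outcome])


def procurement_engaged(contact: Optional[str], last_interaction: Optional[date],
                        stage: int, today: date, window_days: int = 14) -> Optional[bool]:
    """Returns None when the deal is not yet past stage three (check not applicable)."""
    if stage <= 3:
        return None
    if not contact or last_interaction is None:
        return False
    return (today - last_interaction).days <= window_days
```

A note reading "follow up with Sven" fails `is_next_step` because it has no calendar event behind it. That is the point of writing the definition as a check with three required parts.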

This is boring work. It is also the work. The agent that runs next week is only as useful as the definitions agreed to this week.

Part two: The seven commit-risk signals

After codification, the rubric. Seven signals. Each defined as observable behavior, not rep self-report.

1. Stage-age anomaly. The deal has been in its current stage for more than 1.5 times the segment median duration. For direct fleet deals, segment median stage durations come from 24 months of closed-won data. For dealer-mediated deals, the baseline runs shorter. A yacht deal sitting in stage three for 30 days is a red flag. A fleet deal sitting in stage three for 90 days is within norm. The signal is relative, not absolute.

2. No next step. The deal has no calendar-backed next step with an owner and an outcome. A note saying "follow up with Sven" is not a next step. A calendar event titled "Sven review of chart subscription ROI with fleet ops" is. Reps game single-field checks. Multi-signal checks raise the gaming cost above the effort of doing the work properly.

3. Champion silence. The named champion has not responded to rep outreach in 21 days. For dealer-mediated deals, this drops to 10 days given the shorter cycle. Silence is not always a lost deal. It is always a deal that needs a second thread.

4. Multi-thread loss. The rep has only one active contact in the buying organization, or has lost access to a previously engaged contact. In fleet sales this signal is particularly sharp. A deal with one champion and no second thread is one reorg away from dead.

5. Procurement silence. The deal is past stage three and no procurement contact has engaged in the last 14 days. On dealer-mediated deals this adapts to the dealer principal's procurement equivalent. Silence here usually means the internal authorization work has not started, and the close date is a guess.

6. Redlines stalled. Contract redlines have sat without movement for more than 10 business days. This signal is only live after redlines have begun. Before that it is not applicable and the heatmap shows gray.

7. Price objection unresolved. A logged price objection exists and has not been answered with a specific response in the same medium. An email objection requires an email response with a specific concession, trade, or hold. An objection in a meeting requires a follow-up artifact within 48 hours. Confusion is the enemy of willingness to pay, and an unresolved price objection is confusion compounding. For Harness & Halyard, this signal fires on both hardware concessions and subscription discount requests, and it fires hardest when the two get tangled together in the same thread.

Each signal is read-only. The agent observes. It does not score MEDDICC. It does not make the forecast call. It flags behavior that historical closed-opportunity data says predicts slippage. A deal with zero red cells on the heatmap is not a guaranteed win. It is a deal that has cleared the observable checks. A deal with three or more red cells is not guaranteed to slip. It is a deal that the council has to look at before the quarter closes, because past patterns say the slip probability runs roughly three times the clean baseline.

The signals also compose. A champion silence signal alone is recoverable. A champion silence signal stacked with a procurement silence signal and a redlines-stalled signal is a deal that almost always slips, and the council should be pulling it, not defending it.
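The rubric can be sketched as one function that turns a deal snapshot into a heatmap row. This is an illustration under stated assumptions, not the agent itself. The `DealSnapshot` fields are hypothetical and assume the day counts have already been derived from CRM activity. Treating procurement silence as not applicable at or before stage three is also an assumption; the text only specifies gray for redlines that have not begun. The thresholds are the ones given above.

```python
from dataclasses import dataclass
from typing import Dict, Optional

RED, CLEAR, NA = "red", "clear", "n/a"

# Thresholds from the rubric text.
CHAMPION_SILENCE_DAYS = {"direct": 21, "dealer": 10}
PROCUREMENT_SILENCE_DAYS = 14
REDLINE_STALL_BUSINESS_DAYS = 10
STAGE_AGE_MULTIPLE = 1.5
COUNCIL_RED_CELLS = 3  # three or more red cells puts the deal in front of the council


@dataclass
class DealSnapshot:  # hypothetical shape; field names are illustrative
    motion: str                                  # "direct" or "dealer"
    stage: int
    days_in_stage: int
    segment_median_stage_days: float
    has_calendar_next_step: bool
    days_since_champion_reply: int
    active_contacts: int
    lost_contact: bool
    days_since_procurement_touch: Optional[int]  # None = no procurement contact engaged
    redline_idle_business_days: Optional[int]    # None = redlines have not begun
    unanswered_price_objection: bool


def _cell(is_red: bool) -> str:
    return RED if is_red else CLEAR


def heatmap_row(d: DealSnapshot) -> Dict[str, str]:
    procurement = NA
    if d.stage > 3:
        touch = d.days_since_procurement_touch
        procurement = _cell(touch is None or touch > PROCUREMENT_SILENCE_DAYS)

    redlines = NA
    if d.redline_idle_business_days is not None:
        redlines = _cell(d.redline_idle_business_days > REDLINE_STALL_BUSINESS_DAYS)

    return {
        "stage_age_anomaly": _cell(
            d.days_in_stage > STAGE_AGE_MULTIPLE * d.segment_median_stage_days),
        "no_next_step": _cell(not d.has_calendar_next_step),
        "champion_silence": _cell(
            d.days_since_champion_reply > CHAMPION_SILENCE_DAYS[d.motion]),
        "multi_thread_loss": _cell(d.active_contacts <= 1 or d.lost_contact),
        "procurement_silence": procurement,
        "redlines_stalled": redlines,
        "price_objection_unresolved": _cell(d.unanswered_price_objection),
    }


def needs_council(row: Dict[str, str]) -> bool:
    return sum(1 for cell in row.values() if cell == RED) >= COUNCIL_RED_CELLS
```

The function only reads and flags. It produces no score and no forecast call, which matches the agent's scope.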

Part three: Deal-review rhythm and artifacts

The signals are useless without a rhythm that turns them into decisions.

The commit lane. Twenty deals. Not per rep. Total. Ranked by dollar-weighted exposure across direct and dealer motions. The cap is the discipline. If a twenty-first deal needs to enter the commit lane, something leaves. The agent ranks and proposes the lane. The manager confirms.
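The cap can be sketched in a few lines. The text does not define "dollar-weighted exposure", so this example assumes it is deal value multiplied by an estimated slip probability. Both the `Candidate` type and the `slip_probability` input are hypothetical.

```python
from typing import List, NamedTuple, Tuple

COMMIT_LANE_CAP = 20  # total across direct and dealer motions, not per rep


class Candidate(NamedTuple):
    name: str
    amount: float            # deal value in dollars
    slip_probability: float  # assumed input, e.g. derived from the signal heatmap


def exposure(c: Candidate) -> float:
    """Assumed definition: dollars at risk if the deal slips."""
    return c.amount * c.slip_probability


def propose_commit_lane(candidates: List[Candidate],
                        cap: int = COMMIT_LANE_CAP) -> Tuple[List[Candidate], List[Candidate]]:
    """Return (proposed lane, bumped deals), ranked by exposure, highest first."""
    ranked = sorted(candidates, key=exposure, reverse=True)
    return ranked[:cap], ranked[cap:]
```

The function returns the bumped deals explicitly so the manager can see what left the lane when confirming the proposal.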

The weekly deal council. Thursday afternoon. One hour. Teodor chairs. The three sales managers attend. Reps owning deals on the shortlist join for their deals only. The agent's output sets the agenda. The heatmap is visible. The seven signals are the vocabulary.

The council has three outputs per deal. Stay in commit with specific evidence. Pull with cause. Pull in from upside with specific evidence. No fourth option. The evidence is named. The decision is logged. The next review is calendared.
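A decisions log entry can enforce these rules at the point of writing. The sketch below assumes a simple record shape. The type names and fields are illustrative, not taken from the Harness & Halyard log.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Outcome(Enum):
    STAY_IN_COMMIT = "stay in commit"
    PULL_WITH_CAUSE = "pull with cause"
    PULL_IN_FROM_UPSIDE = "pull in from upside"
    # Deliberately no fourth option.


@dataclass(frozen=True)
class CouncilDecision:
    deal: str
    outcome: Outcome
    evidence: str      # the named evidence behind the decision
    decided_on: date
    next_review: date

    def __post_init__(self) -> None:
        if not self.evidence.strip():
            raise ValueError("a council decision needs named evidence")
        if self.next_review <= self.decided_on:
            raise ValueError("the next review must be calendared after the decision")
```

Rejecting an empty evidence field when the entry is created is one way to keep "stay in commit, no evidence" out of the log in the first place.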

The artifacts. Three documents. The commit lane ranking, published Thursday morning. The council decisions log, updated Thursday afternoon. The quarterly rubric review, run in the last week of each quarter, where the team looks at what the signals predicted versus what landed, and tunes the thresholds.

Teodor replaced the Monday forecast-theater call with this Thursday rhythm. Monday tends to amplify optimism. Thursday has the week's activity behind it and the weekend in front of it. Reps prepare differently. Managers ask different questions. The rhythm changed the conversation.

A fourth artifact earned its keep quickly: the pulled-deal memo. Any deal pulled from commit gets a short written note naming the signal stack that triggered the pull and the expected path back, if any. The memo takes the rep five minutes. It protects the rep from re-litigating the pull in the next council, and it protects the manager from carrying pulled deals back into commit on feel. The memo is also the artifact Teodor uses to coach. Patterns in pulled-deal memos surface coaching themes that the weekly council tends to miss.

Part four: Forecast math after hygiene

Once the rubric and the rhythm are working, the forecast math simplifies. Landed ARR for a quarter equals the sum of commit-lane deals that survived the council, plus a historical conversion rate on the best-case lane, plus a small allowance for upside pull-ins.

At Harness & Halyard the conversion rates stabilized inside two quarters. Commit-lane deals that had survived council as of four weeks before close landed at 88 percent. Best-case deals historically converted at 32 percent, and the range tightened to 28 to 36 percent once the rubric was in place. Upside pull-ins ran at 6 percent of quarterly bookings, within a 2-point band.
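The arithmetic can be sketched as follows. The rates are the ones quoted above. The pipeline dollar amounts in the usage note are hypothetical. The upside allowance is modeled on one reading of the text: pull-ins make up 6 percent of total bookings, so the commit and best-case contribution is grossed up by 1 / (1 - 0.06).

```python
from typing import Tuple

COMMIT_LAND_RATE = 0.88            # commit-lane deals surviving council
BEST_CASE_RATE = 0.32              # historical best-case conversion
BEST_CASE_RANGE = (0.28, 0.36)     # tightened range once the rubric was in place
UPSIDE_SHARE_OF_BOOKINGS = 0.06    # assumption: share of total quarterly bookings


def landed_arr(commit_survivors: float, best_case: float,
               best_case_rate: float = BEST_CASE_RATE) -> float:
    """Point estimate of landed ARR for the quarter, in dollars."""
    base = COMMIT_LAND_RATE * commit_survivors + best_case_rate * best_case
    # Gross up so that upside pull-ins equal 6 percent of the total.
    return base / (1.0 - UPSIDE_SHARE_OF_BOOKINGS)


def landed_arr_range(commit_survivors: float, best_case: float) -> Tuple[float, float]:
    """Low and high estimates using the best-case conversion range."""
    low_rate, high_rate = BEST_CASE_RANGE
    return (landed_arr(commit_survivors, best_case, low_rate),
            landed_arr(commit_survivors, best_case, high_rate))
```

For example, `landed_arr(5_000_000, 2_000_000)` combines $4.4 million from the commit lane with $640,000 from best case, then applies the upside gross-up. `landed_arr_range` brackets that estimate using the 28 to 36 percent band.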

The math is boring because the hygiene is disciplined. That is the point. A forecast that feels exciting is usually a forecast that is about to be wrong. A forecast that feels dull is usually a forecast that will land. Pricing maturity is measured by what you stop doing, and forecast maturity is measured the same way. Teodor stopped doing the Monday call. He stopped tolerating three stage definitions. He stopped carrying deals without evidence. The variance followed.

Three failure modes

Signal inflation. The team starts with seven signals and decides nine would be better, then eleven, then a custom signal per vertical. By quarter two, the heatmap is illegible and the council agenda runs to 40 deals. Fix: cap the rubric at seven. Any new signal has to replace an existing one, with closed-opportunity evidence that the new one predicts better than the one it replaces.

Rhythm theater. The Thursday council happens, but the manager uses it to validate the rep's existing call rather than challenge it. Decisions get logged as "stay in commit" without named evidence. The rhythm looks healthy and the variance does not move. Fix: the manager owns challenging every stay-in-commit decision. The revops lead audits the decisions log monthly. Decisions without named evidence get flagged and revisited.

Manager override everywhere. Managers start overriding the agent on more than 40 percent of flags, citing context the agent cannot see. Sometimes this is legitimate. Often it is manager-by-vibe creeping back in. Fix: override is a logged decision with named evidence, not a verbal wave. If override rate runs above 30 percent for two quarters, the rubric gets tuned, not ignored. The agent gets better when the override pattern is studied.
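The override rule is simple enough to check mechanically. A minimal sketch follows, assuming the override rate is computed per quarter as overridden flags divided by flags raised. The function names are illustrative.

```python
from typing import Sequence

OVERRIDE_THRESHOLD = 0.30  # from the text: above 30 percent
QUARTERS_REQUIRED = 2      # from the text: for two quarters


def override_rate(flags_raised: int, flags_overridden: int) -> float:
    """Share of agent flags that managers overrode in a quarter."""
    if flags_raised == 0:
        return 0.0
    return flags_overridden / flags_raised


def rubric_needs_tuning(quarterly_rates: Sequence[float],
                        threshold: float = OVERRIDE_THRESHOLD,
                        quarters: int = QUARTERS_REQUIRED) -> bool:
    """True when the most recent quarters all ran above the threshold."""
    if len(quarterly_rates) < quarters:
        return False
    return all(rate > threshold for rate in quarterly_rates[-quarters:])
```

The check only says when to tune. Deciding what to tune still means reading the logged evidence behind each override.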

30-60-90 sprint

Days 1 to 30. Codify. Stage ladder consolidated. Seven signals defined in writing. Closed-opportunity data pulled for 24 months and segmented by direct and dealer motion. Commit lane cap set at 20 deals. The Thursday council is on the calendar. No agent yet.

Days 31 to 60. Pilot. Agent deployed read-only against the rubric. Output goes to Teodor, the three managers, and revops only. Reps do not see the agent output directly. The council runs the agent shortlist. Calibration sessions weekly. False-positive rate tracked. Rubric thresholds tuned.

Days 61 to 90. Operate. Agent output visible to reps on their own deals. Decisions log public to the commercial team. Forecast math tightens. Commit-to-landed variance measured and reported. Quarterly rubric review scheduled for the end of quarter.

At day 90 at Harness & Halyard, variance sat at plus or minus 9 percent. Four deals had been pulled from commit with cause. Two had been pulled in early from upside. Teodor ran a 45-minute Thursday council in place of a two-hour Monday theater. Three stage definitions had become one. The cost to run the agent and the rhythm was $2,100 per month plus 0.25 FTE in revops.
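For teams that want to track the same headline number, one way to compute it is sketched below. The text does not give a formula, so this assumes variance is landed ARR minus committed ARR, expressed as a percentage of committed, and that the plus-or-minus band is the largest absolute miss across the quarters measured.

```python
from typing import Iterable, Tuple


def variance_pct(committed: float, landed: float) -> float:
    """Signed committed-to-landed variance as a percentage of committed ARR."""
    if committed <= 0:
        raise ValueError("committed ARR must be positive")
    return (landed - committed) * 100.0 / committed


def variance_band(quarters: Iterable[Tuple[float, float]]) -> float:
    """Largest absolute variance across (committed, landed) pairs."""
    return max(abs(variance_pct(committed, landed)) for committed, landed in quarters)
```

Under this definition, a quarter that committed $1,000,000 and landed $910,000 has a variance of minus 9 percent.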

Frequently asked questions

How do we decide which signal to drop when we want to add a new one? Pull the closed-opportunity data for the last two quarters. Score each of the seven signals by predictive lift against actual slippage. The weakest signal gets replaced by the candidate, and only if the candidate scores better on the same data. No signal gets added on intuition.
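A sketch of that comparison follows. The text does not define "predictive lift". This example assumes it is the slip rate among deals where the signal was red divided by the slip rate among deals where it was clear. The record shape is hypothetical.

```python
from typing import Dict, List, Optional

# Each record is one closed opportunity, for example:
# {"slipped": True, "signals": {"champion_silence": True, "no_next_step": False}}
Record = Dict[str, object]


def signal_lift(records: List[Record], signal: str) -> Optional[float]:
    """Slip rate when the signal was red, relative to when it was clear."""
    red = [bool(r["slipped"]) for r in records if r["signals"][signal]]
    clear = [bool(r["slipped"]) for r in records if not r["signals"][signal]]
    if not red or not clear:
        return None  # not enough data on one side to compare
    clear_rate = sum(clear) / len(clear)
    if clear_rate == 0:
        return None  # lift is undefined when no clear deal slipped
    return (sum(red) / len(red)) / clear_rate


def weakest_signal(records: List[Record], signals: List[str]) -> Optional[str]:
    """The signal with the lowest measurable lift, i.e. the replacement candidate."""
    lifts = {s: signal_lift(records, s) for s in signals}
    scored = {s: lift for s, lift in lifts.items() if lift is not None}
    return min(scored, key=scored.get) if scored else None
```

A candidate signal would be scored with `signal_lift` on the same records and adopted only if its lift beats the weakest incumbent.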

What if our CRM data is too dirty to trust any signal? Then codification is the first 60 days, not the first 30. Run the rubric manually against a 20-deal sample for four weeks. Find the data gaps. Fix them. The agent does not launch against dirty data. A hygiene agent on messy inputs produces confident nonsense at weekly cadence.

How should the dealer motion differ from the direct motion? Thresholds, not definitions. Champion silence at 10 days for dealer, 21 days for direct. Stage-age anomaly indexed to shorter segment medians. Procurement silence adapts to the dealer principal's equivalent. The definitions hold. The numbers move.

Who owns the decisions log? The revops lead. The manager makes the call. The rep provides the evidence. The revops lead records and audits. If the log drifts toward "stay in commit, no evidence," the audit surfaces it to the head of revenue.

How do we handle a deal we know is real but the signals say is red? That is what the council is for. Evidence the agent cannot see gets named and logged. If the pattern repeats, the rubric learns from it. One override is context. Twenty overrides is a rubric defect.

Questions, answered

What does an AI pipeline hygiene agent actually do inside the CRM each week?

It runs a scheduled read-only audit of the CRM every week, evaluates each open opportunity against a codified list of commit-risk signals, and produces a shortlist of deals that need review. The agent does not write back to the CRM and does not message reps directly. Its output feeds a weekly deal council where managers and reps work through the shortlist, confirm which deals stay in commit, and which get pulled. The goal is discipline, not automation of judgment.

Why codify commit-risk signals before introducing any pipeline AI?

If three managers define stages three different ways, the agent will average the confusion. Confusion is the enemy of willingness to pay, and it is also the enemy of a trustworthy forecast. Codification means one stage ladder, one definition of champion, one definition of next step, one definition of procurement engagement. Only then does an agent have something stable to audit against. Skip this step and you automate noise at weekly cadence.

Does pipeline hygiene work for hardware-plus-subscription businesses with dealer networks?

Yes. Harness & Halyard sells navigation hardware alongside subscription electronic charts, split 38 percent hardware to 62 percent recurring. The signals adapt cleanly. Stage-age anomaly applies to a 9-month direct fleet sale and a 45-day dealer-mediated sale. Champion silence applies whether the champion is a fleet operations lead or a marine dealer principal. The thresholds differ by motion. The signal definitions do not.

How is signal-based pipeline hygiene different from MEDDICC or rep-scored qualification?

Scorecards ask reps to self-report qualification. Signals observe behavior. The rep scoring their own MEDDICC at 80 is a claim. A champion who has not responded in 21 days is evidence. The hygiene agent reads evidence. The deal council converts evidence into a decision. The rep still owns the deal and the forecast call. The discipline is in separating the claim from the evidence so the manager can see both.

How many deals should actually sit in the quarterly commit lane?

Fewer than you think. Harness & Halyard monitors 20 deals in the commit lane each quarter across direct and dealer motions combined. Not 20 per rep, 20 total for the commit review. If your commit list runs to 60 deals, it is a wish list, not a commit list. The discipline of naming the 20 is itself the exercise. The agent enforces the cap by ranking exposure.

Why replace the Monday forecast call with a Thursday deal-review council?

The Monday forecast-theater call gets replaced by a Thursday deal-review rhythm. Monday morning tends to amplify optimism. Thursday afternoon invites honesty, because the weekend is visible and the week's activity has happened. The council runs one hour. The agent output sets the agenda. The manager runs the conversation. The rep either defends the deal with evidence or accepts the pull.

What does it cost to run an AI pipeline hygiene agent at a $60M revenue company?

Harness & Halyard runs the agent for about $2,100 per month in ops cost, with 0.25 FTE in revops for maintenance, rubric tuning, and calibration sessions. That is small relative to the cost of one $240,000 fleet deal slipping a quarter because nobody saw the procurement silence coming. The best operators compete on discipline, not instinct, and this is a discipline budget, not a technology budget.

What forecast habit should a CRO stop doing first to fix variance?

Stop treating the rep-submitted forecast as a forecast. It is an opinion. A forecast is what remains after the seven signals have been applied and the deal council has ruled. Pricing maturity is measured by what you stop doing, and forecast maturity follows the same rule. Stop running the Monday theater. Stop tolerating three stage definitions. Stop carrying deals in commit that have no evidence of buyer progression. The signals tell you which habits to drop.

About the Author(s)

Emily Ellis is the Founder of FintastIQ and the Growth Operating System. She has 20 years of experience leading pricing, value creation, and commercial transformation initiatives for PE portfolio companies and high-growth businesses, and was previously a leader at McKinsey and BCG.
