Your $2.1M Deal Sat in Email 11 Days. Agents Cut It to 3.

A two-part agent playbook for the commercial operating model. Part one: a structured-interview diagnostic agent that surfaces where revenue leaks across functional handoffs. Part two: a multi-agent orchestrator that runs the pricing-sales-finance workflow on non-standard deals, with scoped sub-agents, human-in-the-loop gates, and a full audit trail.

The AI Commercial Operating Model Agent: Diagnose the Leakage, Orchestrate the Handoffs

Your $2M enterprise deal sits in email for 11 days. It touches 7 people. Nobody owns the packet. Pricing has one version of the discount. Sales has another. Finance has a revrec flag nobody read. Legal is waiting on terms that pricing has not drafted. The deal slips the quarter.

You call this "how we do enterprise."

TL;DR.

- The commercial operating model is the set of cross-functional handoffs between pricing, sales, finance, marketing, product, and customer success. It is where real money leaks. Not at the price point. At the seams.

- Two agents do the heavy lifting. A diagnostic agent runs structured interviews with your executive team, synthesizes the transcripts, maps the handoffs, and produces a leakage hypothesis. An orchestration agent then runs the hardest workflow end to end, starting with pricing to sales to finance on non-standard deals.

- The failure mode is never the model. It is handoff contracts that are not explicit, policy documents that are out of date, and an orchestrator that averages disagreement instead of surfacing it. This paper ships the prompts, the architecture, the guardrails, and the 90-day rollout.

The two jobs of a commercial operating model AI

Diagnose. Then orchestrate.

Diagnose means you do not know where the leaks are yet. You have a hunch. Your CRO says sales. Your CFO says pricing. Your CPO says marketing qualified leads are garbage. Everyone is half right. A diagnostic agent turns those half-truths into a ranked list of handoffs costing you cycle time and margin.

Orchestrate means you pick one. You build agents that run that handoff, with humans on every material decision, with a full audit log, with scoped tool access. You measure the cycle-time delta. Then you pick the next one.

Most teams skip the diagnostic and build an orchestrator for the wrong workflow. They automate the handoff their loudest exec complained about. Six months later cycle time is the same, because they did not close the leak. They closed a leak.

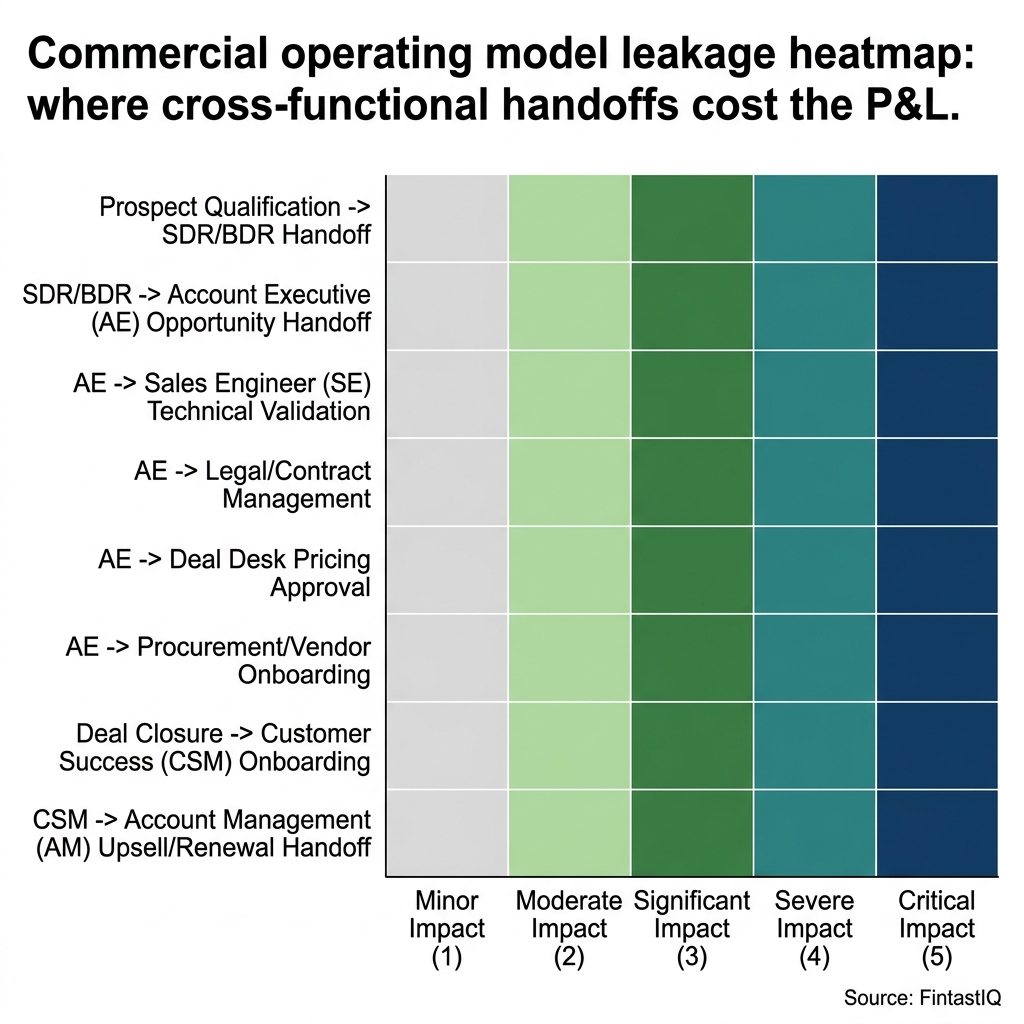

Exhibit: Operating model leakage heatmap

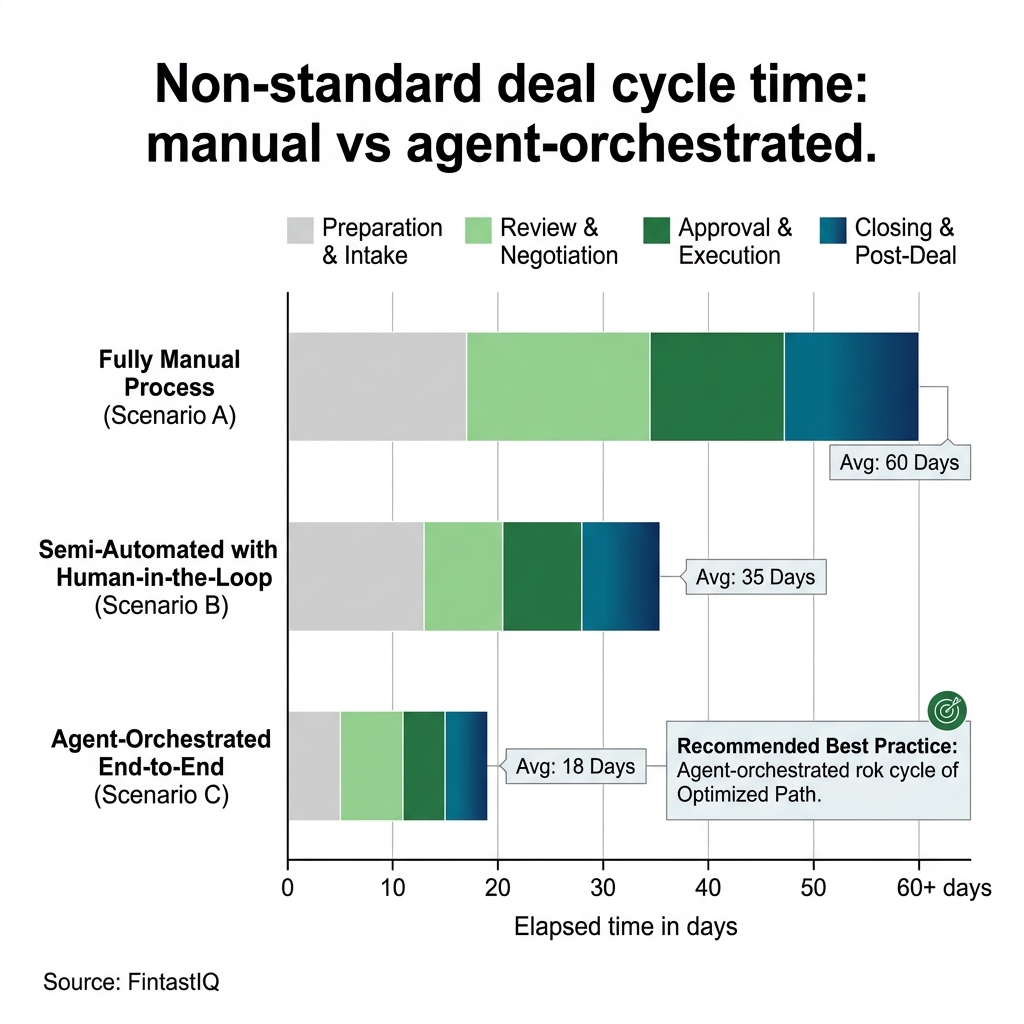

Exhibit: Manual vs orchestrated cycle time

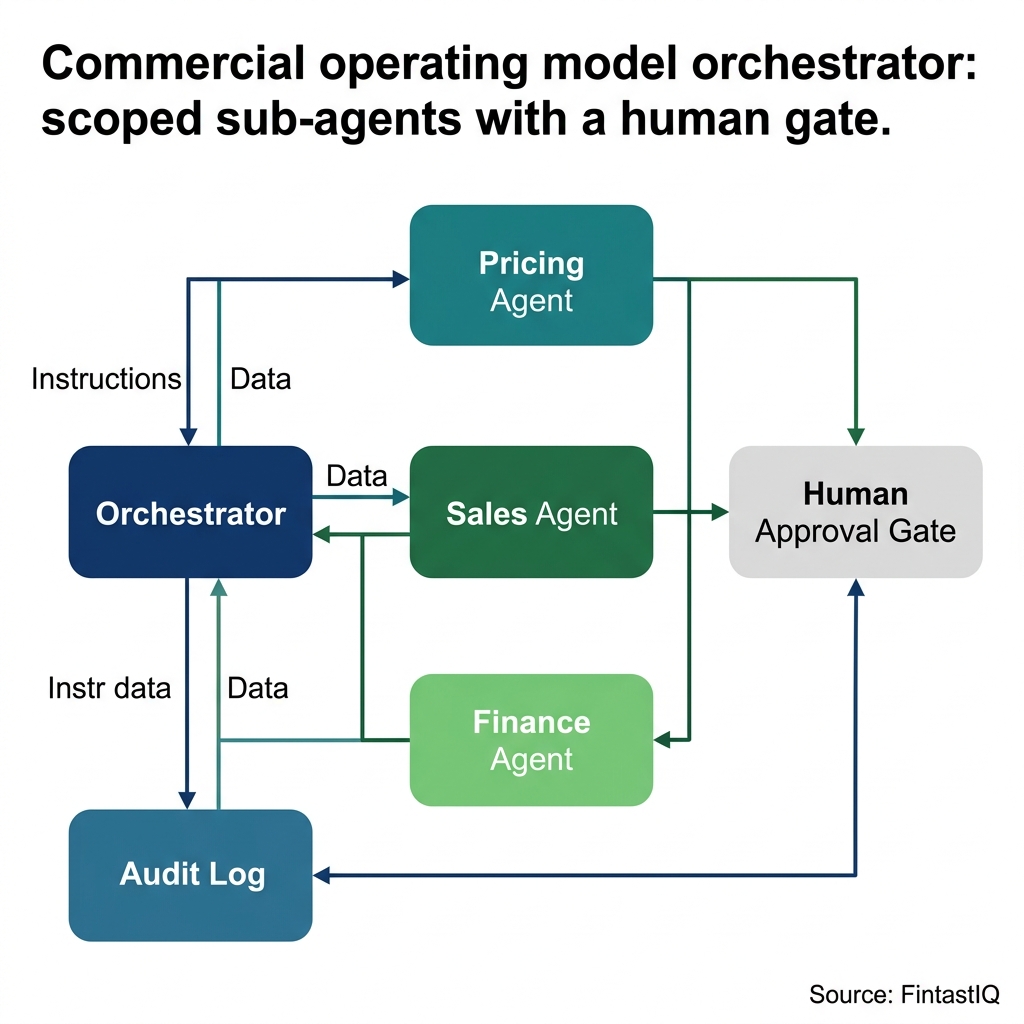

Exhibit: Orchestrator architecture diagram

PART ONE: The Diagnostic Agent

The diagnostic agent is a structured-interview and synthesis workflow. It does five things.

- Generate role-specific interview guides. For each executive role (CRO, CMO, CPO, CCO, CFO, VP CS), draft a 45-minute interview guide calibrated to that role's view of the operating model.

- Conduct or ingest interviews. Either the agent runs the interview via voice, or a human interviewer runs it and uploads the transcript.

- Synthesize transcripts. Extract stated pains, stated fixes, and quantified costs. Tag each by the handoff it implicates.

- Map handoffs and triangulate contradictions. Where the CRO and the CFO disagree about what breaks at deal approval, that disagreement is the data. Surface it, do not average it.

- Produce a leakage hypothesis and action brief. Ranked list of the top three handoffs, dollar impact estimate, and the recommended first orchestration target.

Meet Waypoint Signals. 180 people. $78M ARR. PE-owned, year 3 of a 5-year hold. CRO says win rates are fine but cycle times have crept from 52 days to 74. CFO says margin per enterprise deal is down 340 basis points. CPO says the new usage meter is landing in Q2. Three half-truths that share one root cause: non-standard deals are breaking the pricing-sales-finance handoff, and the breakage is invisible in any single function's dashboard.

The diagnostic agent does not take sides. It reads every interview transcript and finds the seam.

Prompt A: Role-specific interview guide generator

What it does: produces a 45-minute structured interview guide tuned to a specific executive role, with follow-up probes keyed to the commercial operating model.

You are an interview designer building a diagnostic for the commercial operating model at a mid-market B2B company. Produce a 45-minute structured interview guide for the role of {ROLE} (one of: CRO, CMO, CPO, CCO, CFO, VP CS).

Context:

- Company: {COMPANY_NAME}, {HEADCOUNT} people, ${ARR}M ARR, {PE_OR_INDEPENDENT}

- Primary product: {PRODUCT_DESCRIPTION}

- Known pain signal: {SUSPECTED_LEAKAGE}

Guide structure:

1. Opening (5 min): current priorities and the one metric this role owns.

2. Handoff map (15 min): for every adjacent function this role hands off to or receives from, ask where it breaks, how often, and what it costs in time or money. Probe for specific recent examples with dollar amounts.

3. Contradiction probes (10 min): for each handoff pain, ask how the counterpart function would describe the same breakdown. Capture the anticipated disagreement.

4. System constraints (10 min): tools, approval thresholds, reporting lines that shape the handoff.

5. Close (5 min): if you could fix one handoff this quarter, which one, and what would it be worth in dollars or days.

Output format: numbered questions with follow-up probes beneath each. Each probe should push for specifics (names, numbers, recent deals) not generalities.

Where this breaks: if the role is new to the company (less than 90 days), the handoff map will be thin. Add a probe at the end asking what predecessor artifacts (Slack archives, deal review decks, board memos) would fill the gap.

Prompt B: Cross-transcript contradiction triangulator

What it does: reads the full set of role interviews and surfaces where executives describe the same handoff differently. The disagreement is the signal.

You are a cross-functional synthesis analyst reading interview transcripts from a mid-market B2B company's executive team. You have been given transcripts from {LIST_OF_ROLES}.

Task:

1. Build a handoff matrix. Rows: handoffs named in any transcript (e.g., "pricing to sales on non-standard deals," "marketing to sales at MQL handoff," "sales to CS at close-won"). Columns: each role interviewed.

2. For each cell, extract that role's stated view of the handoff. Include a direct quote of 1-3 sentences.

3. Flag cells where two roles describe the same handoff with materially different framing. Do not average them. Quote both.

4. For each flagged contradiction, hypothesize the root cause (technical issue, policy ambiguity, incentive misalignment, data visibility gap).

5. Rank the top three contradictions by estimated dollar impact, using any specific numbers cited in the transcripts.

Output format:

- Handoff matrix (markdown table)

- Contradiction log (per contradiction: roles involved, both quotes, hypothesis, dollar impact estimate, confidence level)

- Top three ranked by impact with recommended first orchestration target

Where this breaks: if one role dominates the transcript corpus (more interview time, more examples), their framing biases the synthesis. Add a balance check: no single role can contribute more than 40 percent of the quotes in the final output.
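The balance check is easy to enforce outside the model. A minimal sketch, assuming the synthesis output has already been parsed into a list of quote records with a `role` key (that record shape, the function names, and the sample data are illustrative, not part of the prompt):

```python
from collections import Counter

MAX_ROLE_SHARE = 0.40  # the 40 percent cap from the balance check


def quote_share_by_role(quotes: list[dict]) -> dict[str, float]:
    """Return each role's share of the quotes in the final synthesis."""
    counts = Counter(q["role"] for q in quotes)
    total = sum(counts.values())
    return {role: n / total for role, n in counts.items()} if total else {}


def over_represented_roles(quotes: list[dict], cap: float = MAX_ROLE_SHARE) -> list[str]:
    """List roles whose share of quotes exceeds the cap."""
    shares = quote_share_by_role(quotes)
    return sorted(role for role, share in shares.items() if share > cap)


# Hypothetical sample: the CRO supplies 3 of 5 quotes (60 percent).
sample = [
    {"role": "CRO", "text": "..."},
    {"role": "CRO", "text": "..."},
    {"role": "CRO", "text": "..."},
    {"role": "CFO", "text": "..."},
    {"role": "CPO", "text": "..."},
]
print(over_represented_roles(sample))  # ['CRO']
```

If the list comes back non-empty, rerun the synthesis with a tighter per-role quote budget rather than trimming quotes by hand.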

Run those two prompts against Waypoint Signals. The output: a ranked handoff list. Number one is pricing to sales to finance on non-standard deals, estimated drag of 11 days per deal and roughly 180 basis points of gross margin. That is the workflow the orchestration agent picks up.

PART TWO: The Orchestration Agent

Here is the part that costs you money.

Cross-team workflows fail because each function runs on different systems, different KPIs, different tempos, and different approval thresholds. Sales lives in the CRM. Finance lives in the GL. Pricing lives in a spreadsheet or a committee. Legal lives in redlines. A human can stitch that together on one deal through sheer tenacity. That human does not scale. Your best deal desk analyst closes 40 non-standard deals a quarter before they burn out.

The orchestration agent is the connective tissue. It does not do the judgment. It runs the workflow.

Waypoint Signals: a $2.1M deal, the manual version

A new enterprise prospect at Waypoint Signals wants the platform with three non-standard terms: a 22 percent discount off list, a custom usage meter (per-document instead of per-seat, because the buyer's team works in pooled workflows), and net-90 payment on a three-year prepay. ACV is $2.1M.

The rep drafts the proposal on a Monday. Pricing gets a Slack message. Pricing asks sales for the margin calc. Sales asks finance. Finance asks product whether the per-document meter is even plumbed. Product says it is, but only if the customer is on the new platform build. Pricing drafts the discount rationale. Legal reviews the non-standard payment terms. CFO gets pulled in because net-90 changes the cash curve. Somebody Slack-DMs the CRO to approve.

Eleven days. Twelve email threads. Two Slack channels. Nobody owns the packet. The deal slips the quarter. The buyer, who needed a signature by month-end for their own budget cycle, calls the competitor.

That is not a pricing problem. That is a commercial operating model problem.

Waypoint Signals: the same deal, agent-orchestrated

Same deal lands in the CRM. The orchestrator picks it up because it matches a non-standard trigger (discount above 15 percent, non-standard meter, or non-standard payment terms).

- Pricing Agent parses the deal, checks the discount against the pinned pricing matrix, flags the per-document meter for product review, and drafts a pricing rationale memo. Output: structured JSON plus a 180-word rationale.

- Sales Agent drafts the commercial terms memo for the deal, flags any contract exceptions for legal (in this case, the net-90 clause), and produces the customer-facing term sheet draft. Output: structured terms object plus draft PDF.

- Finance Agent checks revrec implications of the three-year prepay with net-90 payment, flags the non-standard payment schedule for CFO review, and computes the gross margin and cash-flow impact under three sensitivity scenarios. Output: structured finance memo plus three-scenario table.

- Orchestrator holds the workflow state as a growing JSON deal object, routes each sub-agent's output, detects when two sub-agents disagree (pricing says 22 percent is defensible, finance says margin is 340 bp below threshold), escalates on exception, and assembles a single approver-ready packet for the CRO and CFO.

Elapsed time: one to three days with human-in-the-loop at every gate. Roughly four to six hours when the deal sits entirely within pre-calibrated thresholds and partial autonomy is enabled.

Same deal. Same product. Same customers. Your money.

The architecture, plainly

- Orchestrator holds workflow state. A typed JSON deal object that every sub-agent reads and writes back to. Never global variables. Never "whatever the last agent said."

- Scoped tools per sub-agent. The pricing agent can read the discount matrix and write to the pricing rationale field. It cannot touch the GL. The finance agent can read the revrec policy and write to the cash-flow field. It cannot modify the discount. One agent, one scope.

- Human-in-the-loop at defined decision points. Not every step. Sub-agent outputs land in the packet. Humans gate on approval thresholds, on flagged exceptions, and on cross-agent disagreement. Humans do not review what the agent did. They decide what the agent surfaced.

- Full audit log. Prompt version, model version, policy document version for every document read, sub-agent output, orchestrator routing decision, human approver, final verdict. Pinned. Immutable.
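One way to picture the typed deal object and its audit log is a dataclass whose fields each belong to exactly one writer. This is a sketch under stated assumptions: every field name, version label, and the `record` helper are hypothetical, and the append-only behavior here is a convention, not an enforced guarantee.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class DealState:
    """Workflow state held by the orchestrator (field names are illustrative)."""
    deal_id: str
    acv_usd: float
    discount_pct: float
    payment_terms: str
    policy_version: str
    pricing_rationale: Optional[str] = None  # written by the pricing agent only
    commercial_terms: Optional[str] = None   # written by the sales agent only
    finance_memo: Optional[str] = None       # written by the finance agent only
    audit_log: list = field(default_factory=list)


def record(state: DealState, actor: str, event: str, **versions: str) -> None:
    """Append an audit entry; existing entries are never edited."""
    state.audit_log.append({"actor": actor, "event": event, **versions})


# Hypothetical values modeled on the Waypoint Signals deal.
state = DealState("D-001", 2_100_000, 22.0, "net-90", policy_version="pricing-matrix-v7")
state.pricing_rationale = "(pricing agent rationale goes here)"
record(state, "pricing_agent", "wrote pricing_rationale",
       prompt_version="p-3", model_version="m-1", policy_version=state.policy_version)
print(json.dumps(asdict(state), indent=2))
```

A production build would back the log with write-once storage; the point of the sketch is only that state has a declared shape and every write leaves a versioned trace.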

Prompt C: Orchestrator router

What it does: receives a deal object, classifies which sub-agents need to run, maintains workflow state, and escalates on exceptions.

You are the orchestrator for a B2B commercial workflow. You receive a deal object in JSON and route it through pricing, sales, and finance sub-agents in the correct sequence. You do not make pricing or finance decisions. You route, hold state, and escalate.

Deal object schema: {DEAL_SCHEMA}

Pinned policy version: {POLICY_VERSION}

Sub-agents available: pricing_agent, sales_agent, finance_agent

Human gate thresholds: {THRESHOLDS}

Rules:

1. Parse the deal object. Classify: standard, non-standard-pricing, non-standard-terms, non-standard-finance, or multi-exception. Return the classification with reasoning.

2. Select sub-agents based on classification. Standard deals route to pricing_agent only. Multi-exception deals route to all three.

3. Invoke each selected sub-agent in sequence. Pass the current deal object state. Receive their structured output and merge it into the state object.

4. After each sub-agent response, check for disagreement with prior sub-agent outputs. If disagreement exceeds 10 percent on any quantitative field (margin, discount, cash impact), flag as CROSS_AGENT_EXCEPTION. Do not resolve it. Surface it.

5. If the deal crosses any human gate threshold, halt and request human approval before proceeding.

6. When all sub-agents complete, output the full workflow state plus an exception summary and an approver-ready packet reference.

Output format: JSON workflow state + exception summary + next-step instruction (HUMAN_GATE, READY_FOR_PACKET, or ESCALATE).

Where this breaks: if one sub-agent silently fails (timeout, tool error, model refusal), the orchestrator must not proceed with a stale state. Add a transition guard: every sub-agent response must include a status field (completed, partial, failed) and the orchestrator halts on any non-completed status.
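The transition guard is better enforced in code than trusted to the prompt. A minimal sketch, assuming each sub-agent response arrives as a dict with `status` and `output` keys (the envelope shape, `WorkflowHalt`, and `guard_and_merge` are assumptions for illustration):

```python
from enum import Enum


class Status(str, Enum):
    COMPLETED = "completed"
    PARTIAL = "partial"
    FAILED = "failed"


class WorkflowHalt(Exception):
    """Raised when the orchestrator must stop rather than use stale state."""


def guard_and_merge(state: dict, agent: str, response: dict) -> dict:
    """Merge a sub-agent response into state only if it reports completion."""
    raw = response.get("status")
    try:
        status = Status(raw)
    except ValueError:
        raise WorkflowHalt(f"{agent}: missing or unknown status {raw!r}") from None
    if status is not Status.COMPLETED:
        raise WorkflowHalt(f"{agent}: status is {status.value}, halting")
    merged = dict(state)  # copy, so a halt never leaves state half-updated
    merged[agent] = response.get("output")
    return merged


state = guard_and_merge({}, "pricing_agent",
                        {"status": "completed", "output": {"discount_pct": 22}})
try:
    guard_and_merge(state, "finance_agent", {"status": "partial", "output": {}})
except WorkflowHalt as halt:
    print("halt:", halt)
```

A response with no status field at all is treated the same as a failure, which covers timeouts and refusals that return an empty body.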

Prompt D: Deal-packet assembler

What it does: reads the completed workflow state and produces a single approver-ready packet.

You are the deal packet assembler. You receive a completed workflow state from the orchestrator and produce a single approver-ready packet for the CRO and CFO of a B2B company.

Input: workflow_state JSON containing deal fields, pricing_agent output, sales_agent output, finance_agent output, any flagged exceptions, and the audit trail.

Packet structure:

1. Deal summary (one paragraph): account, ACV, term, key non-standard elements, classification.

2. Pricing rationale (pricing_agent output, verbatim).

3. Commercial terms summary (sales_agent output, verbatim).

4. Finance impact (finance_agent output, verbatim, with the three-scenario sensitivity table).

5. Exception log: every flag raised, every cross-agent disagreement, each with the sub-agent's rationale.

6. Recommended decision: APPROVE, APPROVE_WITH_CONDITIONS, ESCALATE, ESCALATE_TO_BOARD, or DENY. Recommendation only. Human approver decides.

7. Audit trail footer: prompt versions, model versions, policy document versions, timestamps.

Tone: neutral, operator-grade, specific. No hedging. No hype. Numbers to the dollar or the basis point.

Where this breaks: if the pricing and finance agents disagree on margin by more than 10 percent, the assembler must not average them and must not pick one. It surfaces both, with both rationales, and marks the recommended decision as ESCALATE regardless of other fields.
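"Disagree by more than 10 percent" needs a definition before it can gate anything. The sketch below assumes one: the gap between the two estimates as a fraction of the larger one. That definition, the function names, and the sample margins are assumptions; pick and document your own.

```python
DISAGREEMENT_THRESHOLD = 0.10  # the 10 percent line from the prompt


def relative_gap(a: float, b: float) -> float:
    """Gap between two estimates as a fraction of the larger magnitude."""
    scale = max(abs(a), abs(b))
    return abs(a - b) / scale if scale else 0.0


def recommended_decision(pricing_margin: float, finance_margin: float, proposed: str) -> dict:
    """Keep both numbers in the packet; force ESCALATE when they diverge."""
    gap = relative_gap(pricing_margin, finance_margin)
    disagree = gap > DISAGREEMENT_THRESHOLD
    return {
        "decision": "ESCALATE" if disagree else proposed,
        "exception": "CROSS_AGENT_EXCEPTION" if disagree else None,
        "pricing_margin": pricing_margin,   # surfaced as reported, never averaged
        "finance_margin": finance_margin,
        "relative_gap": round(gap, 4),
    }


# Hypothetical margins: 0.62 vs 0.50 is a gap of about 19 percent.
print(recommended_decision(0.62, 0.50, proposed="APPROVE"))
print(recommended_decision(0.62, 0.60, proposed="APPROVE"))
```

Note what a relative threshold does and does not catch: a few hundred basis points on a high-margin deal can sit under 10 percent, so an absolute basis-point threshold may be worth adding alongside it.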

The pattern generalizes

Second example, shorter. Marketing to sales to CS at close-won. A new enterprise customer closes on Thursday. Marketing needs the segment tag updated for lifecycle campaigns. Sales needs to hand CS the deal context plus the customer's explicit outcome commitments. CS needs a 30-60-90 onboarding plan before kickoff. Today that happens in three Slack handoffs and an outdated Salesforce template. An orchestrator with marketing_agent, sales_handoff_agent, and cs_onboarding_agent runs it in an hour instead of a week. Same pattern. Different workflow.

Light PRD for the orchestrated version

Inputs.

- Diagnostic agent: org chart, KPI dashboards by function, access to interview transcripts or live voice interviews, prior handoff documentation.

- Orchestration agent: CRM (deal fields, deal history), pricing matrix (pinned version, document + JSON), GL (margin data, revrec policy), contract templates, revrec policy document, exception precedent log.

Tools per agent (scoped narrowly).

- Pricing agent: read pricing_matrix, read precedent_log, write pricing_rationale_field.

- Sales agent: read contract_templates, read deal_history, write commercial_terms_memo.

- Finance agent: read revrec_policy, read gl_margin_snapshot, write finance_memo.

- Orchestrator: read workflow_state, write workflow_state, invoke sub-agents, emit human_gate_events.

No sub-agent has write access outside its scope. No sub-agent can invoke another sub-agent directly. Only the orchestrator routes.
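The scope table above translates directly into a permission registry that every tool call passes through. A minimal sketch: the resource names come from the list above, while `SCOPES`, `ScopeViolation`, and `authorize` are hypothetical names for illustration.

```python
SUB_AGENTS = {"pricing_agent", "sales_agent", "finance_agent"}

SCOPES = {
    "pricing_agent": {"read": {"pricing_matrix", "precedent_log"},
                      "write": {"pricing_rationale_field"}},
    "sales_agent": {"read": {"contract_templates", "deal_history"},
                    "write": {"commercial_terms_memo"}},
    "finance_agent": {"read": {"revrec_policy", "gl_margin_snapshot"},
                      "write": {"finance_memo"}},
    "orchestrator": {"read": {"workflow_state"},
                     "write": {"workflow_state"},
                     "invoke": SUB_AGENTS},  # only the orchestrator routes
}


class ScopeViolation(PermissionError):
    """Raised when an agent reaches outside its declared scope."""


def authorize(agent: str, action: str, resource: str) -> None:
    """Deny by default: anything not listed for this agent and action is refused."""
    allowed = SCOPES.get(agent, {}).get(action, set())
    if resource not in allowed:
        raise ScopeViolation(f"{agent} may not {action} {resource}")


authorize("pricing_agent", "read", "pricing_matrix")  # allowed, returns None
try:
    authorize("finance_agent", "write", "pricing_rationale_field")
except ScopeViolation as err:
    print("denied:", err)
```

Because sub-agents have no `invoke` entry at all, a pricing agent that tries to call the finance agent is refused by the same check.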

Orchestrator architecture.

- State machine with typed transitions (classify, route, invoke_sub_agent, merge_output, check_exception, human_gate, assemble_packet).

- Handoff contracts as JSON schemas that every sub-agent's output must conform to.

- Timeout and retry per sub-agent invocation (default: one retry on failure, then halt).

- Human-in-the-loop gates at configurable thresholds (e.g., any deal above $500K ACV, any discount above 20 percent, any cross-agent disagreement above 10 percent on quantitative fields).
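The state machine can be as small as a lookup table of legal next steps. The step names below are the ones listed above; which step may follow which is an assumption made for this sketch, as are the names `TRANSITIONS` and `advance`.

```python
# Allowed next steps for each step (the ordering is an assumed example).
TRANSITIONS = {
    "classify": {"route"},
    "route": {"invoke_sub_agent"},
    "invoke_sub_agent": {"merge_output"},
    "merge_output": {"check_exception"},
    "check_exception": {"invoke_sub_agent", "human_gate", "assemble_packet"},
    "human_gate": {"invoke_sub_agent", "assemble_packet"},
    "assemble_packet": set(),  # terminal step
}


def advance(current: str, nxt: str) -> str:
    """Move to the next step, refusing any transition not in the table."""
    if nxt not in TRANSITIONS.get(current, set()):
        raise ValueError(f"illegal transition: {current} -> {nxt}")
    return nxt


# One legal path for a deal that trips a human gate after the first sub-agent.
step = "classify"
for nxt in ["route", "invoke_sub_agent", "merge_output", "check_exception",
            "human_gate", "assemble_packet"]:
    step = advance(step, nxt)
print(step)  # assemble_packet
```

The value is in what the table forbids: there is no edge from `classify` straight to `assemble_packet`, so a packet cannot be assembled from outputs that were never merged and checked.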

Outputs.

- Approver-ready deal packet.

- Full audit trail (prompt version, model version, policy version, sub-agent outputs, human approvers).

- Exception log.

- Cycle-time telemetry per handoff.

- Handoff success rate (percent of deals completing without human override beyond the gated approval).

Guardrails.

- Scoped tool access per sub-agent. Read-only by default. Write requires explicit tool permission.

- Human gate above a defined dollar threshold per workflow. Nonnegotiable.

- Prompt-injection defense on every field parsed from rep free text. Structured extraction first, then validation against expected types.

- Signed and versioned policy documents. If the pricing matrix hash changes, every in-flight deal revalidates before proceeding.

- PII handling: no customer PII passes to third-party model APIs unless you have the contractual basis. Mask or tokenize by default.

Observability.

- Cycle time per handoff (pricing to sales to finance, in hours).

- Exception rate (percent of deals triggering any flag).

- Human override rate (percent of packets where the human approver modifies the recommended decision).

- Agent disagreement rate (percent of deals with cross-agent quantitative disagreement above 10 percent).

- Calibration drift (change in override rate over time, reviewed quarterly).
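These rates fall out of the audit trail with a few lines of arithmetic. A sketch, assuming each completed deal is summarized as a dict with the keys shown; the record shape and the four sample deals are invented for illustration.

```python
def rate(deals: list[dict], predicate) -> float:
    """Fraction of deals for which the predicate holds."""
    return sum(1 for d in deals if predicate(d)) / len(deals) if deals else 0.0


def telemetry(deals: list[dict]) -> dict:
    return {
        "exception_rate": rate(deals, lambda d: len(d["flags"]) > 0),
        "human_override_rate": rate(
            deals, lambda d: d["final_decision"] != d["recommended_decision"]),
        "agent_disagreement_rate": rate(deals, lambda d: d["max_quant_gap"] > 0.10),
        "avg_cycle_hours": (sum(d["cycle_hours"] for d in deals) / len(deals)
                            if deals else 0.0),
    }


# Hypothetical quarter of four deals.
deals = [
    {"flags": ["net-90"], "recommended_decision": "APPROVE",
     "final_decision": "APPROVE", "max_quant_gap": 0.02, "cycle_hours": 30},
    {"flags": [], "recommended_decision": "APPROVE",
     "final_decision": "APPROVE", "max_quant_gap": 0.00, "cycle_hours": 6},
    {"flags": ["discount", "meter"], "recommended_decision": "ESCALATE",
     "final_decision": "DENY", "max_quant_gap": 0.18, "cycle_hours": 60},
    {"flags": [], "recommended_decision": "APPROVE",
     "final_decision": "APPROVE_WITH_CONDITIONS", "max_quant_gap": 0.04, "cycle_hours": 24},
]
print(telemetry(deals))
```

Calibration drift is then the change in `human_override_rate` between two review periods, computed by running the same function on each period's deals.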

SKILL.md skeleton

---

name: commercial-operating-model-orchestrator

description: Routes non-standard B2B deals through pricing, sales, and finance sub-agents with human gates and a full audit log.

allowed-tools: read_workflow_state, write_workflow_state, invoke_sub_agent, emit_human_gate_event, read_policy_document, append_audit_log

---

# Commercial Operating Model Orchestrator

This skill runs the deal packet workflow for non-standard B2B deals. It classifies the deal, routes to scoped sub-agents (pricing, sales, finance), holds workflow state, flags cross-agent disagreement, escalates on exception, and assembles an approver-ready packet.

## When to use

- Deal has discount above policy threshold

- Deal has non-standard usage meter, non-standard payment terms, or non-standard contract clauses

- Deal crosses revrec policy exception triggers

## When not to use

- Standard deals (route to deterministic workflow)

- Renewals or expansions inside template (route to CS orchestrator)

- Strategic deals under active board-level negotiation (human-led, agent as drafting aid only)

## Inputs required

- Deal object JSON with schema version pinned

- Pinned pricing matrix version

- Pinned revrec policy version

## Outputs

- Workflow state, audit trail, exception log, approver-ready packet

## Human gates

- Any deal above $500K ACV (configurable per org)

- Any cross-agent quantitative disagreement above 10 percent

- Any policy exception without documented precedent

Where the framework breaks

Three failure modes. Each one will bite you if you do not design for it.

Handoff contracts are not explicit. If the pricing agent returns "margin looks okay" as free text and the finance agent expects a decimal, the orchestrator averages garbage. Fix: typed JSON schemas for every sub-agent output, schema validation at the orchestrator level, and a hard halt when a sub-agent response fails validation. Contracts between agents are the same discipline as contracts between humans. Make them explicit or lose the margin.
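A handoff contract can be checked with nothing more than the standard library. The sketch below validates a pricing agent response by hand; the four fields are an assumed contract for illustration, and a real build might use a JSON Schema validator instead.

```python
from dataclasses import dataclass


class ContractViolation(ValueError):
    """Raised when a sub-agent output does not match its handoff contract."""


@dataclass(frozen=True)
class PricingOutput:
    status: str
    discount_pct: float
    gross_margin_pct: float
    rationale: str


# Expected type(s) per field; this contract is hypothetical.
PRICING_CONTRACT = {
    "status": str,
    "discount_pct": (int, float),
    "gross_margin_pct": (int, float),
    "rationale": str,
}


def parse_pricing_output(payload: dict) -> PricingOutput:
    """Validate field presence and types, then return a typed object or halt."""
    extra = set(payload) - set(PRICING_CONTRACT)
    if extra:
        raise ContractViolation(f"unexpected fields: {sorted(extra)}")
    for name, expected in PRICING_CONTRACT.items():
        if name not in payload:
            raise ContractViolation(f"missing field: {name}")
        value = payload[name]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            raise ContractViolation(f"{name}: bad type {type(value).__name__}")
    return PricingOutput(**payload)


good = {"status": "completed", "discount_pct": 22,
        "gross_margin_pct": 61.5, "rationale": "..."}
print(parse_pricing_output(good))
try:
    parse_pricing_output({**good, "gross_margin_pct": "margin looks okay"})
except ContractViolation as err:
    print("halt:", err)
```

The free-text "margin looks okay" never reaches the finance agent; the orchestrator halts at the boundary where the contract was broken.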

Policy documents are out of date. Your pricing matrix was last updated 14 months ago. The revrec policy references an accounting standard that changed last quarter. The agent is confidently wrong because it is reading yesterday's rules. Fix: version pinning on every policy document, a hash check on every invocation, and a quarterly calibration review that explicitly re-signs each document. If the hash is stale, the orchestrator halts.
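The hash check itself is a few lines with Python's `hashlib`. A sketch, assuming the pinned hashes are stored in a simple name-to-digest mapping; the mapping, the function names, and the sample matrix contents are all hypothetical.

```python
import hashlib


class StalePolicy(RuntimeError):
    """Raised when a policy document no longer matches its pinned hash."""


def sha256_hex(document: bytes) -> str:
    return hashlib.sha256(document).hexdigest()


def check_pinned(name: str, document: bytes, pinned: dict[str, str]) -> None:
    """Halt unless the document's hash equals the hash signed at calibration."""
    actual = sha256_hex(document)
    if pinned.get(name) != actual:
        raise StalePolicy(f"{name}: hash {actual[:12]} does not match pinned version")


# Hypothetical document standing in for the real pricing matrix.
signed_matrix = b"product,list_price,max_discount_pct\nplatform,100,15\n"
pinned_hashes = {"pricing_matrix": sha256_hex(signed_matrix)}

check_pinned("pricing_matrix", signed_matrix, pinned_hashes)  # passes silently

edited_matrix = signed_matrix.replace(b"15", b"25")
try:
    check_pinned("pricing_matrix", edited_matrix, pinned_hashes)
except StalePolicy as halt:
    print("halt:", halt)
```

A hash only proves the document is the one that was signed. It says nothing about whether the signed version is still correct, which is why the quarterly re-signing matters.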

Agents over-automate and mask real disagreement. The pricing agent thinks the discount is defensible. The finance agent thinks the margin is 340 basis points below threshold. A naive orchestrator averages them and produces a milquetoast recommendation. That is the single worst failure mode, because it hides the signal that should be driving a human decision. Fix: surface disagreement, do not average it. When sub-agents disagree on a quantitative field above a threshold, the packet's recommended decision is ESCALATE. No exceptions.

Governance guardrails

- Human gates at defined thresholds. Published. Versioned. Signed off by the CRO and CFO.

- Audit log retention of at least seven years or matching your SOX retention policy, whichever is longer.

- Signed and versioned policy documents. Hash check on every invocation.

- Scoped tool access per sub-agent. Quarterly review of scope creep.

- Quarterly calibration: review the override rate, the disagreement rate, and any drift in recommended-vs-final verdict. Recalibrate thresholds if drift is material.

- Right to explain: any declined deal, any overridden recommendation, any escalated exception, is fully reconstructable from the audit log without reverse engineering.

30-60-90 rollout

Days 1 to 30: Diagnose. Run the diagnostic agent end to end. Interview the full executive team (CRO, CMO, CPO, CCO, CFO, VP CS). Produce the handoff matrix, the contradiction log, and the ranked top-three list. Pick one workflow. Not three. One. If the diagnostic surfaces pricing-to-sales-to-finance on non-standard deals, start there. If it surfaces marketing-to-sales-to-CS at close-won, start there. The rule is: pick the one with the largest dollar drag, not the one your loudest exec named first.

Days 31 to 60: Build. Build the orchestrator plus the sub-agents for that one workflow. 100 percent human-in-the-loop at every material decision. Instrument cycle time per handoff from day one. Run it on the next 15 to 20 qualifying deals. Measure the cycle-time delta. Measure the override rate. Tune the prompts and the thresholds weekly.

Days 61 to 90: Graduate and scope the next one. Move to partial autonomy below pre-calibrated thresholds (e.g., deals under $250K ACV, with discount under 15 percent, with no revrec flag, can clear without human gate but still land in the audit log and the next-day review queue). Finalize the telemetry dashboard for the CRO, CFO, and COO. Scope workflow number two using the diagnostic's second-ranked handoff. Same pattern. Fresh build.
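The partial-autonomy rule for days 61 to 90 reduces to a single predicate. A minimal sketch using the example thresholds above; the deal field names and the `route_deal` helper are assumptions for illustration.

```python
def can_auto_clear(deal: dict, max_acv_usd: float = 250_000,
                   max_discount_pct: float = 15.0) -> bool:
    """True only when the deal is under every pre-calibrated threshold."""
    return (deal["acv_usd"] < max_acv_usd
            and deal["discount_pct"] < max_discount_pct
            and not deal["revrec_flag"])


def route_deal(deal: dict) -> dict:
    """Auto-cleared deals skip the gate but still get logged and reviewed."""
    cleared = can_auto_clear(deal)
    return {
        "human_gate": not cleared,
        "audit_log": True,            # every deal is logged, cleared or not
        "next_day_review": cleared,   # cleared deals land in the review queue
    }


# Hypothetical deals: one small and clean, one modeled on the $2.1M example.
small = {"acv_usd": 120_000, "discount_pct": 10.0, "revrec_flag": False}
large = {"acv_usd": 2_100_000, "discount_pct": 22.0, "revrec_flag": True}
print(route_deal(small))
print(route_deal(large))
```

Keeping the thresholds as parameters makes the quarterly recalibration a configuration change rather than a code change.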

Common commercial-orchestration mistakes

Three traps.

Trap one: building the orchestrator before running the diagnostic. You saved six weeks by skipping the interviews and built a pricing agent because pricing is loud. Six months later cycle time is unchanged because the real leak was at the marketing-to-sales handoff. You closed the wrong seam.

Trap two: giving one agent cross-function privileges. It is simpler, at first. One agent reads the CRM, the GL, the pricing matrix, the revrec policy, and writes back to all of them. It is also a single prompt-injection blast radius and an audit nightmare. When the first declined deal goes to the CFO for defense, you will wish you had scoped sub-agents.

Trap three: automating disagreement away. Your pricing agent and your finance agent are supposed to disagree sometimes. That disagreement is a real signal about margin risk. An orchestrator that averages their outputs or picks the more confident one destroys the signal. Build the orchestrator to surface disagreement above a threshold, every time, without exception.

Questions, answered

What is an AI commercial operating model agent and what does it actually do?

Two connected agents. The first is a diagnostic agent that runs structured interviews with your CRO, CMO, CPO, CCO, CFO, and VP CS, synthesizes the transcripts, maps the handoffs between functions, and produces a leakage hypothesis. The second is an orchestration agent that runs the hardest cross-team workflows, starting with pricing to sales to finance on non-standard deals, using scoped sub-agents and a human approver gate. The diagnostic tells you where the leaks are. The orchestrator closes the biggest one.

How is multi-agent orchestration different from Zapier or Workato workflow automation?

Workflow automation handles the 80 percent of deals that fit the template. Your $2M non-standard deal with a custom meter and a 22 percent discount is not in the template. That is where handoffs break, where deals slip the quarter, and where margin leaks. Agents handle exceptions. Zapier does not.

Can one cross-functional agent run pricing, sales, and finance instead of scoped sub-agents?

You could try. You should not. One agent with cross-function privileges is a single prompt-injection blast radius and an auditability nightmare. Scoped sub-agents, each with narrow tools and a read-only default, with an orchestrator holding workflow state, is safer and easier to defend to your auditor, your board, and your CFO.

Which LLM should run a commercial orchestrator agent in 2025?

Use a frontier model with reliable tool use and long context for the orchestrator and for any sub-agent that touches contract language or policy documents. As of 2025 that means Claude Sonnet 4, Claude Opus 4, GPT-5, or an equivalent. Smaller models are fine for narrow sub-agents: a pricing-matrix lookup tool, a margin calculator, a revrec flagger. Do not run your orchestrator on a small model.

Where do agents beat deterministic platforms like n8n, Zapier, or Workato on commercial workflows?

Those platforms are deterministic. You draw a flowchart, data passes through, done. Agents handle the roughly 20 percent of deals that do not fit any flowchart, the ones that require judgment, policy interpretation, and cross-function reconciliation. Use deterministic automation for the 80 percent. Use agents for the 20 percent that actually cost you cycle time and margin.

How does the FintastIQ Growth Operating System productize this orchestrator pattern?

The Growth Operating System is FintastIQ's productized approach to the commercial operating model. The pattern in this paper is the public version. The accelerator adds our handoff maturity rubric, cross-functional leakage benchmarks by sector, the pricing-sales-finance workflow library, the orchestrator state machine calibrated to your org, and the 90-day change-management harness.

What is the most important guardrail to defend an AI deal decision to your auditor and board?

Human-in-the-loop at defined decision points above a dollar threshold you pick, plus a full audit log that pins the prompt version, the model version, every policy document version the agent read, and every sub-agent rationale. Without those, you cannot explain a denied deal to a sales rep, a missed margin target to your board, or a revrec exception to your auditor.

How long does it take to see a cycle-time delta on non-standard deals?

Thirty days to run the diagnostic and pick one workflow. Another thirty to build the orchestrator and sub-agents for that workflow with 100 percent human-in-the-loop. By day 90 you have partial autonomy below a defined threshold on the first workflow and the scope for the second. If your deal desk is processing 30 to 60 non-standard deals a quarter, the cycle-time math pays back inside the pilot.

About the Author(s)

Emily Ellis is the Founder of FintastIQ and the Growth Operating System. She has 20 years of experience leading pricing, value creation, and commercial transformation initiatives for PE portfolio companies and high-growth businesses, and was previously a leader at McKinsey and BCG.
