Why 8 Right SKUs Beat 11 With 4 Sell-Through Misses

Operators ship. Leaders ship hypotheses. Discovery is the weekly discipline that stops you from scaling the wrong bet. Four obligations make it an intake gate, not a research function.

The Operator's Guide to Continuous Discovery

When did you last hear the words "I hate this part of my day" from a customer, unprompted?

If the answer is more than two weeks, your roadmap is running on founder intuition. That worked when you had 20 customers. It does not work when you have 20,000.

Operators ship. That is the superpower. It is also the trap. The same bias that gets the product to market at speed gets the wrong product to market at speed. Teresa Torres wrote the book on this for a reason. The companies that compound in consumer and hybrid categories are not the ones with the fastest roadmaps. They are the ones with the weekly obligation to a customer conversation that is allowed to change what ships next.

This paper is about that obligation. Not the research. The weekly obligation.

TL;DR.

- Operator-led companies ship hypotheses at speed and rarely check them. Continuous discovery is the weekly discipline that converts customer touchpoints into an intake gate that stops bad launches before the PO goes to the factory. Four obligations make it operational: a weekly touchpoint minimum, an opportunity solution tree, an assumption test, and a discovery-to-delivery handoff.

- Most product teams outside of software have no discovery function at all. They have merchandising calendars, trend reports, and the founder's instinct. The 30-60-90 sprint in this paper installs the cadence without adding headcount.

- This is not research. Research is a project. Discovery is a habit. The difference shows up in sell-through data 60 days after the launch date.

The core problem. Operators ship faster than they learn.

You run a P&L. You have a calendar. The calendar says 11 new SKUs next quarter. The buyers at your 220 wholesale accounts are already asking what is new. You have three choices. Ship what the design team drafts. Ship what the trend report says. Ship what the last quarter's best seller implies. None of those options includes a customer.

That is the default state of operator-led companies. The roadmap is a set of confident guesses made by smart people who have not talked to a customer this week.

Melissa Perri calls this the build trap. The organization measures itself by what ships, not by what the customer adopts. The metric that matters becomes velocity. The metric that should matter is the ratio of shipped-to-adopted. When those two numbers diverge, you get activity bias. The team looks productive. The P&L does not.

Meet Northline Outfitters. 140 people. $52M GMV. DTC outdoor apparel brand selling direct and into 220 specialty retailers. Product team ships 11 new SKUs per quarter. Last year, 4 of the 14 core SKU launches missed sell-through target by more than 30 percent. The CEO, Dana, is a former supply-chain operator. She ran logistics at a bigger outdoor brand for a decade. She did not come up through product. She is fast, decisive, and trusts her team.

The miss that cost the most. A technical shell called the Ridgecut, designed for shoulder-season hiking in the Pacific Northwest. The spec was beautiful. 2.5-layer waterproof, articulated elbows, a chest pocket sized for a smartphone plus a topo map. Price point $189. Sold in 178 of the 220 wholesale doors. Sell-through at week 10: 34 percent. Target: 70 percent. The brand had to take a $1.4M markdown to clear it.

What the team learned from the post-mortem. The core customer for a shoulder-season shell in that channel was already buying the competing brand's version at $165 and using it for three seasons. The $189 price was not the problem. The "three seasons" use case was. Ridgecut was spec'd as a shoulder-season piece. The customer was buying shoulder-season pieces to wear as a primary rain jacket from April to November. The chest pocket for the topo map was wrong. The customer was not reading maps. They were stashing a phone and a dog-treat pouch.

One conversation with a repeat customer in Portland would have caught that. Nobody at Northline had that conversation before the PO went to the factory.

That is the cost of shipping without discovery. Not one bad SKU. A structural blind spot that produces a 4-in-14 miss rate every year.
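The Ridgecut numbers can be checked with a few lines of arithmetic. This is a minimal sketch that assumes "miss" means the shortfall relative to target (the text does not say whether it is relative or in percentage points). The function name is illustrative, not part of any Northline tooling.

```python
def relative_miss(actual_pct: float, target_pct: float) -> float:
    """Shortfall against target, expressed as a fraction of the target."""
    if target_pct <= 0:
        raise ValueError("target_pct must be positive")
    return (target_pct - actual_pct) / target_pct


# Ridgecut: 34 percent sell-through at week 10 against a 70 percent target.
ridgecut_miss = relative_miss(34, 70)

# Last year's record: 4 of 14 core launches missed target.
miss_rate = 4 / 14

print(f"Ridgecut relative miss: {ridgecut_miss:.1%}")  # 51.4%
print(f"Core launch miss rate: {miss_rate:.1%}")       # 28.6%
```

Under either reading (36 points, or roughly half of target), the Ridgecut clears the 30 percent miss threshold by a wide margin.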

Exhibit: Opportunity solution tree

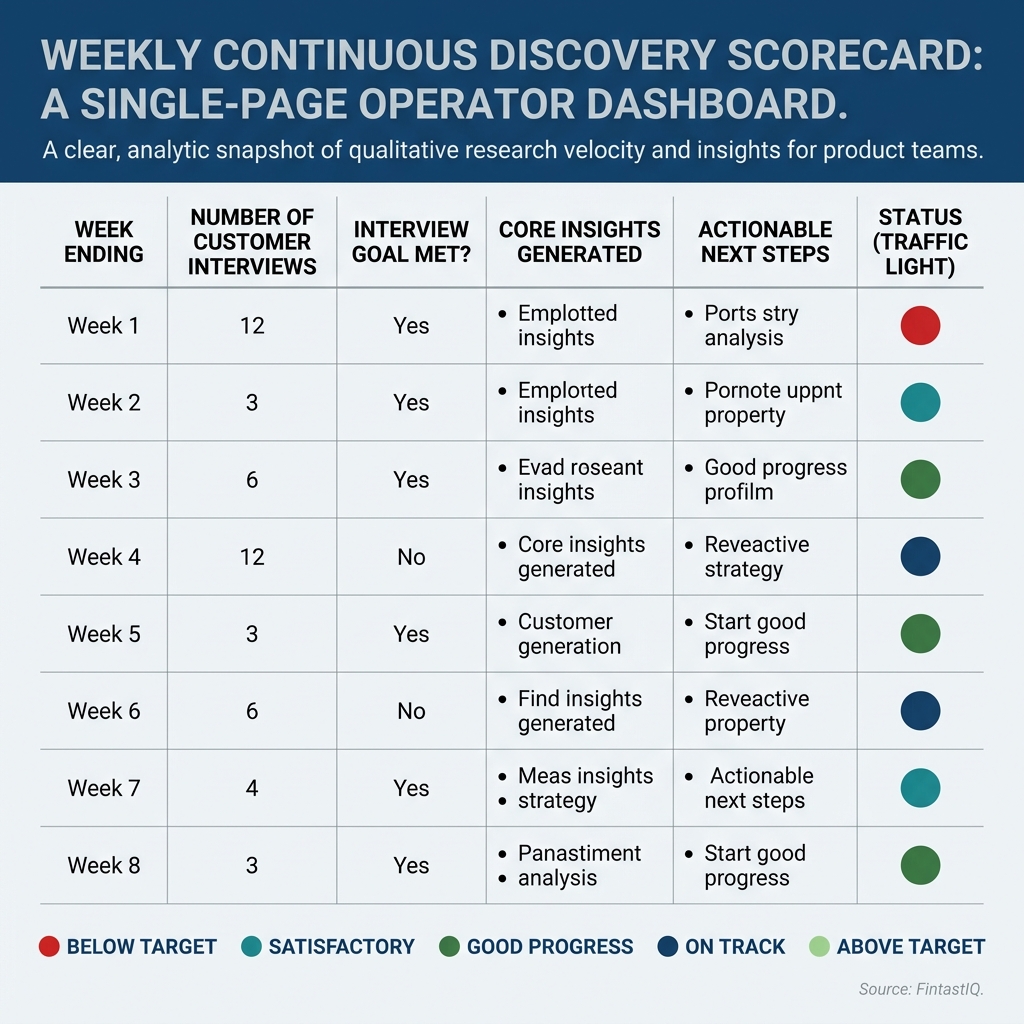

Exhibit: Weekly discovery scorecard

Four disciplines that make discovery an intake gate

Four disciplines. Each one answered weekly. Together they convert customer contact from a research project into a decision-making function that controls the intake queue.

Discipline 1. The weekly touchpoint minimum

The product trio talks to at least three customers every week. Not three conversations total across the company. Three conversations per product squad. Torres is strict on this for a reason. Pattern recognition requires repetition. One interview every two months is an anecdote. Three a week is a signal.

For Northline, the trio is the product director, the senior designer, and the merchandising planner. The operator (Dana) joins one of the three every two weeks. The interviews are 25 minutes. They happen by video. They are recorded. The questions are not "what do you want?" They are "walk me through the last time you picked a shell for a trip you cared about."

The output is not a report. The output is three quotes a week that the team agrees changed their mind about something. Zero quotes means the interviews were theater. The cadence is the cadence. Cancellations are only allowed when replaced, not skipped.

The best operators compete on discipline, not instinct. This is the discipline.
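One way to make the weekly minimum auditable is to count interviews per ISO week and flag any week that falls short. The sketch below is an assumption about how a squad might track this. The function name and the sample dates are hypothetical.

```python
from collections import Counter
from datetime import date


def weeks_below_minimum(interview_dates, weeks_to_check, minimum=3):
    """Return the (ISO year, ISO week) pairs with fewer than `minimum` interviews."""
    counts = Counter(d.isocalendar()[:2] for d in interview_dates)
    return [week for week in weeks_to_check if counts[week] < minimum]


# Hypothetical log for one squad: three interviews in week 2, two in week 3.
log = [
    date(2025, 1, 6), date(2025, 1, 8), date(2025, 1, 10),
    date(2025, 1, 14), date(2025, 1, 16),
]

print(weeks_below_minimum(log, [(2025, 2), (2025, 3)]))  # [(2025, 3)]
```

A flagged week means an interview was skipped rather than replaced, which is the one thing the cadence does not allow.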

Discipline 2. The opportunity solution tree

Torres' tree is the artifact that keeps the team honest. One desired outcome at the top. Underneath it, a branching set of opportunities (the customer problems, friction points, and unmet needs surfaced in the interviews). Underneath each opportunity, two or three candidate solutions. Underneath each solution, the assumptions that need to be true for the solution to work.

For the Ridgecut category at Northline, the desired outcome is "grow weatherproof shell revenue by 30 percent in the DTC channel over two seasons." The opportunities branched under it would have been "customers wear shoulder-season shells as primary rain jackets," "customers stash phone and dog gear, not maps," "customers wash their shells aggressively and the DWR degrades by season two." Each opportunity is a branch. Each branch has two or three solution candidates. Each candidate has an assumption list.

The tree does two things. It visualizes the hypotheses the team is running. It forces the team to choose which opportunities to pursue and which to park. Without the tree, the team runs 11 solutions simultaneously and none of them are tested against an explicit opportunity. With the tree, you can look at the board and see which branches are evidence-backed and which are wishful thinking.
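The tree is a small nested data structure, and it can be sketched in code to show how "evidence-backed" becomes checkable. This is an illustrative model, not Torres' canonical format. The class names, the `interview_ids` field, and the interview IDs are assumptions. Solutions and assumptions are left empty because the text does not name any for the Ridgecut.

```python
from dataclasses import dataclass, field


@dataclass
class Assumption:
    statement: str
    tested: bool = False


@dataclass
class Solution:
    name: str
    assumptions: list[Assumption] = field(default_factory=list)


@dataclass
class Opportunity:
    statement: str
    interview_ids: list[str] = field(default_factory=list)  # hypothetical evidence links
    solutions: list[Solution] = field(default_factory=list)


@dataclass
class OpportunitySolutionTree:
    desired_outcome: str
    opportunities: list[Opportunity] = field(default_factory=list)

    def unsupported(self) -> list[str]:
        """Opportunities with no interview evidence attached."""
        return [o.statement for o in self.opportunities if not o.interview_ids]


tree = OpportunitySolutionTree(
    desired_outcome="Grow weatherproof shell revenue by 30 percent in the DTC channel over two seasons",
    opportunities=[
        Opportunity("Customers wear shoulder-season shells as primary rain jackets", ["int-01", "int-04"]),
        Opportunity("Customers stash phone and dog gear, not maps", ["int-02"]),
        # No interview attached yet (illustrative), so this branch gets flagged.
        Opportunity("Customers wash their shells aggressively and the DWR degrades by season two"),
    ],
)

print(tree.unsupported())
```

Any branch returned by `unsupported()` is a candidate for parking until an interview backs it.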

Marty Cagan makes the same argument in INSPIRED and Empowered. Discovery is a distinct phase from delivery. You do not skip it. You do not merge it. You give it an artifact that survives the sprint review.

Discipline 3. The assumption test

An opportunity solution tree is a wall of hypotheses. Most of them are wrong. That is fine. The job is to figure out which ones are wrong before the factory PO goes out.

Eric Ries framed this in The Lean Startup as validated learning. The discipline is simple. For each solution on the tree, list the assumptions that must be true. Rank them by risk. Test the riskiest assumption first. The test is the smallest possible experiment that produces a useful signal.

For Northline's Ridgecut, the assumption "customers want a chest pocket sized for a smartphone plus a topo map" was load-bearing. It was never tested. A one-week test would have been to show five repeat customers a physical prototype with the pocket and ask them to fill it with what they carry on a day hike. Zero of them would have put a topo map in it. Four of them would have complained that the pocket was too shallow for a phone in a bulky case. The test would have cost two days. The markdown cost $1.4M.

Two rules on assumption tests. The test has to produce a decision, not a discussion. The test has to happen before the irreversible commitment (the PO, the long-lead-time fabric, the tooling). If the test cannot be designed to produce a decision by Friday, it is the wrong test.
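Ranking by risk and checking the timing rule can both be expressed in a few lines. The sketch below assumes risk is scored as impact times uncertainty on 1-to-5 scales, which is a common convention but not something the text prescribes. The scores, dates, and the second assumption's wording are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class AssumptionTest:
    statement: str
    impact: int          # 1-5: cost if the assumption is wrong (illustrative scale)
    uncertainty: int     # 1-5: how little evidence exists (illustrative scale)
    decision_date: date  # when the test produces a decision

    @property
    def risk(self) -> int:
        return self.impact * self.uncertainty


def riskiest_first(tests):
    """Order tests so the riskiest assumption is tested first."""
    return sorted(tests, key=lambda t: t.risk, reverse=True)


def decided_too_late(tests, po_date):
    """Tests whose decision lands on or after the irreversible commitment."""
    return [t for t in tests if t.decision_date >= po_date]


tests = [
    AssumptionTest("Customers wear the shell only in shoulder season", 4, 3, date(2025, 3, 21)),
    AssumptionTest("Customers want a chest pocket sized for a smartphone plus a topo map", 5, 5, date(2025, 3, 7)),
]
po_date = date(2025, 3, 14)  # hypothetical PO date

print(riskiest_first(tests)[0].statement)
print([t.statement for t in decided_too_late(tests, po_date)])
```

Anything returned by `decided_too_late` is, by the second rule, the wrong test: it has to be redesigned to land before the PO.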

Discipline 4. The discovery-to-delivery handoff

This is the discipline most teams skip, and it is the one that breaks the whole system when they do. Discovery produces opportunities, candidate solutions, and validated assumptions. Delivery executes the solution at spec. The handoff is where the evidence travels from one phase to the other. If the handoff is sloppy, delivery builds from memory and the insight vanishes.

The handoff artifact is a one-page brief per SKU. The job the product is hired for, stated in the customer's language. The alternative the customer is leaving behind, with the price they are paying for it. The three opportunities on the tree that this SKU addresses. The two assumptions that got validated. The one assumption that did not get validated but the team is betting on anyway (with the reasoning for the bet). The success metric at 8 weeks post-launch.

The brief is signed by the product director, the merchandising planner, and one frontline account rep who sells into the wholesale channel. Three signatures. No brief, no engineering. No brief, no PO.
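The brief and its gate can be sketched as a record plus a validation function. The field names, signer labels, and sample values below are assumptions for illustration. Only the required contents and the three signatures come from the text.

```python
from dataclasses import dataclass, field

REQUIRED_SIGNERS = {"product_director", "merchandising_planner", "account_rep"}


@dataclass
class SkuBrief:
    job: str                  # job the product is hired for, in customer language
    alternative: str          # what the customer is leaving behind
    alternative_price: float  # what they pay for it today
    opportunities: list[str]  # three opportunities from the tree
    validated: list[str]      # two validated assumptions
    open_bet: str             # the one unvalidated assumption
    bet_reasoning: str        # why the team is betting on it anyway
    week8_metric: str         # success metric at 8 weeks post-launch
    signatures: set[str] = field(default_factory=set)


def gate_problems(brief: SkuBrief) -> list[str]:
    """Reasons the PO cannot be released; an empty list means the gate is open."""
    problems = []
    if len(brief.opportunities) != 3:
        problems.append("brief must name three opportunities")
    if len(brief.validated) != 2:
        problems.append("brief must list two validated assumptions")
    if not (brief.open_bet and brief.bet_reasoning):
        problems.append("open bet needs stated reasoning")
    if not brief.week8_metric:
        problems.append("missing week-8 success metric")
    missing = REQUIRED_SIGNERS - brief.signatures
    if missing:
        problems.append("missing signatures: " + ", ".join(sorted(missing)))
    return problems


# Illustrative brief: placeholder wording, with the account rep yet to sign.
brief = SkuBrief(
    job="placeholder job statement in the customer's words",
    alternative="competing brand's shell",
    alternative_price=165.0,
    opportunities=["opportunity A", "opportunity B", "opportunity C"],
    validated=["assumption A", "assumption B"],
    open_bet="assumption C",
    bet_reasoning="placeholder reasoning",
    week8_metric="placeholder sell-through target",
    signatures={"product_director", "merchandising_planner"},
)

print(gate_problems(brief))  # ['missing signatures: account_rep']
```

The point of returning a list rather than a yes/no is that the gate tells the team exactly what is missing before the PO request goes back.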

Martina Lauchengco's argument in Loved applies here. Product marketing is the translation layer between discovery and the buyer. The one-page brief is also the positioning document. The language in the brief becomes the language on the hangtag, the PDP, the sell sheet. When the brief is vague, the positioning is vague. Confusion is the enemy of willingness to pay. The brief is where the clarity is forced.

Where this framework breaks

Three failure modes. Each one shows up quietly and costs real money.

Failure one. Sample bias. Your weekly interviews are with three customers who already love you. They are repeat buyers, Instagram followers, brand advocates. They tell you the shell is great and you should build more colors. What they do not tell you is why the 62 percent of visitors who hit your site never bought. Sample bias produces discovery that confirms whatever you already believed. The fix is the three-bucket rule. Every month, one third of your interviews are with current customers, one third with churned or lapsed customers, and one third with never-bought prospects who considered you. The never-bought bucket is the one that changes your mind. Without it, you are running a testimonial factory. Rob Fitzpatrick's The Mom Test is explicit on this. The interview has to surface friction, not flattery.
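The three-bucket rule is easy to audit at month end. The sketch below flags any bucket that falls short of a one-third share. The bucket labels, the 5-point tolerance, and the sample month are assumptions, not part of the rule as stated.

```python
from collections import Counter

BUCKETS = ("current", "lapsed", "never_bought")


def bucket_shortfalls(interview_buckets, target_share=1 / 3, tolerance=0.05):
    """Return buckets whose share of the month's interviews is below target."""
    total = len(interview_buckets)
    if total == 0:
        return list(BUCKETS)
    counts = Counter(interview_buckets)
    return [b for b in BUCKETS if counts[b] / total < target_share - tolerance]


# Hypothetical month: 12 interviews, heavily skewed toward current customers.
month = ["current"] * 9 + ["lapsed"] * 2 + ["never_bought"]

print(bucket_shortfalls(month))  # ['lapsed', 'never_bought']
```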

Failure two. Interview theater. The team runs the three interviews a week. The interviews are scheduled, recorded, transcribed, and summarized. Nobody reads the summaries. Nothing changes in the intake queue. The opportunity solution tree has not been updated in six weeks. The team is performing discovery without using it. The fix is a weekly 20-minute synthesis meeting where the trio pulls three quotes and asks "what on the tree does this change?" If the answer is "nothing" for two weeks running, the interviews are theater or the tree is dead. Either way you have a structural problem, not a data problem.
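The "nothing for two weeks running" check can be sketched as a streak detector over the synthesis meeting's output. This assumes the trio logs how many tree changes each weekly meeting produced. The function name and the sample log are hypothetical.

```python
def is_theater(tree_changes_per_week, run_length=2):
    """True if the tree went unchanged for `run_length` consecutive weeks."""
    streak = 0
    for changes in tree_changes_per_week:
        streak = streak + 1 if changes == 0 else 0
        if streak >= run_length:
            return True
    return False


print(is_theater([2, 0, 1, 0, 0]))  # True: the last two weeks changed nothing
print(is_theater([2, 0, 1, 0, 3]))  # False: no two empty weeks in a row
```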

Failure three. The research-to-roadmap gap. Discovery runs. Delivery runs. The two teams live in different ceremonies, different tools, different rhythms. The discovery team produces insights. The delivery team produces SKUs. Nobody maintains the connective tissue. Six months in, the discovery team is demoralized because nothing they surface shows up in the line plan, and the delivery team is frustrated because the spec they inherit still has holes. The fix is the one-page brief acting as the handoff artifact, enforced at the PO approval gate. If the brief is missing, the PO does not get signed. The CFO is the last line of defense here. The CFO does not sign a $400,000 fabric commitment for an SKU that does not have a brief. That rule, enforced once, rewires the whole system.

The 30-60-90 sprint for continuous discovery

Days 1 to 30. Install the weekly touchpoint cadence. The product trio books three interviews a week for four consecutive weeks. The operator (CEO, CPO, or head of merchandising) joins one interview every two weeks. The interviews follow the jobs framing. "Walk me through the last time you tried to do this thing." No leading questions. No feature pitches. Notes captured in a shared doc. A weekly 20-minute synthesis meeting on Friday. The output of month one is a habit, not a report. If you miss two weeks, restart the count. The cadence is the point.

Days 31 to 60. Build one opportunity solution tree for the next SKU launch. Pick the upcoming launch that carries the most sell-through risk (the one where a miss would cost you seven figures). Build the tree. Desired outcome at the top. Three to five opportunities beneath it, each sourced from at least two interviews. Two or three candidate solutions per opportunity. Assumption lists beneath the solutions. Test two assumptions per week for four weeks. Kill solutions that fail their riskiest assumption. Promote solutions that pass. At day 60, the tree has evidence behind every live branch and a documented kill list for the dead branches.

Days 61 to 90. Move one launch through the full gate end to end. Pick one SKU from the tested tree. Write the one-page brief. Get three signatures (product, merch, frontline sales). Submit the brief to delivery with the PO request. Measure sell-through at week 8 post-launch against the success metric in the brief. Publish the delta to the leadership team. If the delta is under 15 percent, the gate is calibrated. If the delta is over 15 percent, the brief is too generous on the assumption side and needs a tighter assumption test in the next cycle. The first gated launch is the proof. The tenth is the habit. The hundredth is the operating system.
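The day-90 calibration check reduces to one comparison. The sketch below assumes the delta is the gap between actual and target sell-through relative to the target, and it treats a delta of exactly 15 percent as not calibrated, since the text leaves that case open. The sample figures are hypothetical.

```python
def gate_verdict(actual_pct, target_pct, threshold=0.15):
    """Return (delta, verdict) for a gated launch at week 8."""
    if target_pct <= 0:
        raise ValueError("target_pct must be positive")
    delta = abs(actual_pct - target_pct) / target_pct
    verdict = "calibrated" if delta < threshold else "tighten assumption tests"
    return delta, verdict


# Hypothetical launches measured against a 70 percent week-8 target.
print(gate_verdict(63, 70))  # delta of about 0.10, calibrated
print(gate_verdict(50, 70))  # delta of about 0.29, tighten assumption tests
```

Using the absolute gap means a large overshoot also counts as a miscalibrated brief, which is a design choice rather than something the text specifies.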

FAQ

Isn't discovery just a fancier word for customer research? No. Research is a project. Discovery is a weekly obligation that changes what gets built. If the output does not alter the intake queue within two weeks, it is research dressed up as discovery.

I am the CEO. Do I have to run the interviews myself? Not all of them. Enough to stay calibrated. The product trio runs the weekly cadence. The operator joins one interview every two weeks. The moment you delegate fully, your pattern recognition decays and you start trusting summaries. Summaries hide the quotes that would have changed your mind.

We ship 11 SKUs a quarter. How do I slow down? You do not slow down. You reallocate. The hours currently spent in roadmap debate move into customer conversations. The debate gets shorter because the interviews produce decisions instead of opinions. Velocity is how fast the wrong bets get killed, not how many SKUs hit the line.

What if my customers cannot articulate what they want? Good. Jobs to be Done is built for that. You do not ask what they want. You ask about the last time they tried to do the thing. The specifics are the brief.

How does discovery connect to pricing? Discovery surfaces the alternative the customer is leaving behind. That alternative is your pricing anchor. Pricing is a signal before it is a number, and the signal comes from the interview, not the survey.

Does this work in a new category with no benchmarks? Yes, and it is the only thing that works. Willingness-to-pay research fails in new categories because the benchmark does not exist yet. Discovery replaces the benchmark with the cost of the current workaround and the language the customer uses to describe it.

How do I spot interview theater? Three tells. Notes read like testimonials. Sample is all friends and repeat buyers. Output is a slide, not a roadmap change. If any one shows up for two weeks running, you have theater.

What is the fastest way to start? The 30-60-90 above. Cadence, tree, gated launch. The muscle is 90 days. The habit is a year.

Questions, answered

How is continuous discovery different from traditional customer research?

Research is a project with a start date and an end date. Discovery is a weekly obligation that changes what gets built. The distinction matters because research outputs sit in a slide deck that the roadmap ignores. Discovery outputs change the next spec. If your discovery work does not alter the intake queue within two weeks, you are running research and calling it discovery.

Does a CEO actually have to run customer discovery interviews personally?

Not all of them, but you need to run enough to stay calibrated. Teresa Torres is firm on this. The product trio (PM, designer, engineer) runs the weekly cadence. The operator who owns the P&L runs one interview every two weeks minimum. The moment you delegate discovery fully, your pattern recognition decays and you start trusting secondhand summaries. Summaries hide the quotes that would have changed your mind.

How do you fit weekly discovery into a team shipping 11 SKUs a quarter?

You do not slow down. You reallocate. The four hours a week you currently spend in roadmap debate move into customer interviews. The roadmap debate gets shorter because the interviews produce decisions instead of opinions. Velocity is a function of how fast the wrong bets get killed, not how many SKUs hit the line. A team that ships 8 right SKUs outperforms a team that ships 11 and misses sell-through on 4.

What do you do when your customers can't articulate what they want?

Good. That is the point. Jobs to be Done discovery is designed for customers who cannot articulate wants. Bob Moesta frames it as listening for the progress the customer is trying to make and the friction stopping them. You do not ask 'what do you want?' You ask 'walk me through the last time you tried to do this.' The answer is always specific. The specifics are the brief.

How does continuous discovery feed into willingness-to-pay and pricing decisions?

Discovery surfaces the alternative the customer is leaving behind. That alternative is your pricing anchor, not the survey. Pricing is a signal before it is a number. The signal comes from the interview. When you discover that your customer is spending $80 on a competitor plus $30 on adjacent accessories, your price ceiling is $110 minus the risk premium of switching, not whatever the conjoint says.
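The price-ceiling arithmetic can be written out directly. In this sketch the $80 and $30 come from the example above, while the switching risk premium is a hypothetical input: the text names it but gives no value.

```python
def price_ceiling(competitor_spend, accessory_spend, switching_risk_premium):
    """Ceiling = what the customer already spends, less the cost of switching."""
    return competitor_spend + accessory_spend - switching_risk_premium


# Upper bound with no switching risk priced in.
print(price_ceiling(80, 30, 0))   # 110

# With a hypothetical $10 risk premium.
print(price_ceiling(80, 30, 10))  # 100
```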

Does continuous discovery work in a new category with no pricing or product benchmarks?

Yes, and it is the only thing that works. Willingness-to-pay research fails in new categories because the benchmark does not exist yet. Discovery replaces the benchmark with the cost of the current workaround and the language the customer uses to describe the workaround. The language becomes your copy. The cost becomes your anchor.

How do you know your team is doing interview theater instead of real discovery?

Three tells. The interview notes read like a testimonial reel (all positive, no friction surfaced). The sample is three founders' friends and eight repeat customers (zero churned or never-bought). The output is a slide, not a change to the roadmap. If any one of those shows up for two weeks running, you have theater. The fix is in the paper.

What's the fastest 30-60-90 way to start continuous discovery from zero?

A 30-60-90 sprint. Days 1 to 30, install the weekly touchpoint cadence and run it for four weeks. Days 31 to 60, build one opportunity solution tree for the next SKU launch and test two assumptions per week. Days 61 to 90, move one launch through the full gate end to end and measure sell-through against forecast at week 8. The muscle builds in 90 days. The habit takes a year.

About the Author(s)

Emily Ellis is the Founder of FintastIQ and the Growth Operating System. She has 20 years of experience leading pricing, value creation, and commercial transformation initiatives for PE portfolio companies and high-growth businesses, and previously held leadership roles at McKinsey and BCG.

References

- Teresa Torres. Continuous Discovery Habits. Product Talk, 2021

- Marty Cagan. Inspired. Wiley, 2018

- Rob Fitzpatrick. The Mom Test. CreateSpace, 2013

- Clayton M. Christensen, Taddy Hall, Karen Dillon & David Duncan. Competing Against Luck. HarperBusiness, 2016

- Clayton M. Christensen, Scott Cook & Taddy Hall. Marketing Malpractice: The Cause and the Cure. Harvard Business Review, 2005