When Did You Last Kill a Feature? Your Pricing Page Knows

Most product teams keep features because removing them feels like admitting a miss. The portfolio rot compounds. Good operators build a pruning rhythm, and every feature faces an annual monetization review. The ones that cannot defend a job, a user, or a dollar get retired deliberately. Pricing maturity is measured by what you stop doing, not just what you start.

The Operator's Guide to Killing Features

Name the last feature you killed. When was that?

If the answer is "I cannot remember," your portfolio has rot you have not priced. Product teams ship at a steady rate and retire at roughly zero. The backlog accumulates. The pricing page accretes tiers nobody asked for. The onboarding flow adds steps that justify features the product manager wrote into a launch plan two years ago. Meanwhile your sales team sells only about half the surface area of the product because they cannot remember what the other half does.

Shipping is a skill. Pruning is a different skill. Most teams have the first one and have never practiced the second.

TL;DR.

- Features accumulate as psychological debt. Keeping them feels neutral. Killing them feels like admitting a miss. The default is accumulation, and accumulation compounds into a product that costs more to maintain than it earns in pricing power.

- The discipline has four parts. An annual monetization review with a fixed calendar slot. A job-user-dollar test applied to every feature. A deprecation runway that retires features without triggering a revolt. An R&D reallocation commitment that gives the saved hours a named destination.

- Pricing maturity is measured by what you stop doing, not just what you start. The best operators compete on pruning discipline, not instinct.

- Where it breaks: politically protected features, analytics blindness, and sunsetting without communication discipline. Each failure mode has a specific fix.

The core problem: features as psychological debt

Retiring a feature feels like admitting the original decision was wrong. It was not wrong. It was right for that moment. The market moved. The segment shifted. The meter changed. The feature that mattered in year two is a distraction in year five. Holding on to it is not loyalty to the original thesis. It is avoidance of a conversation.

Melissa Perri frames this in Escaping the Build Trap. Teams that measure themselves on output ship features. Teams that measure themselves on outcomes prune features. The output metric rewards accumulation. The outcome metric rewards retirement when the feature stops producing the outcome. If your quarterly review celebrates "features shipped" and is silent on "features retired," your operating model is optimized for the wrong direction.

Meet Forum & Lodge. 80 people, $22M ARR, B2B2C booking platform used by 340 boutique hospitality operators running independent hotels in the 5-to-80-key range. The platform has 94 features, built over seven years, across channel manager integrations, direct-booking widgets, revenue management tooling, guest messaging, housekeeping workflows, and a loyalty engine. The CPO, Ana, had never retired a feature. Not once. The product had only ever grown.

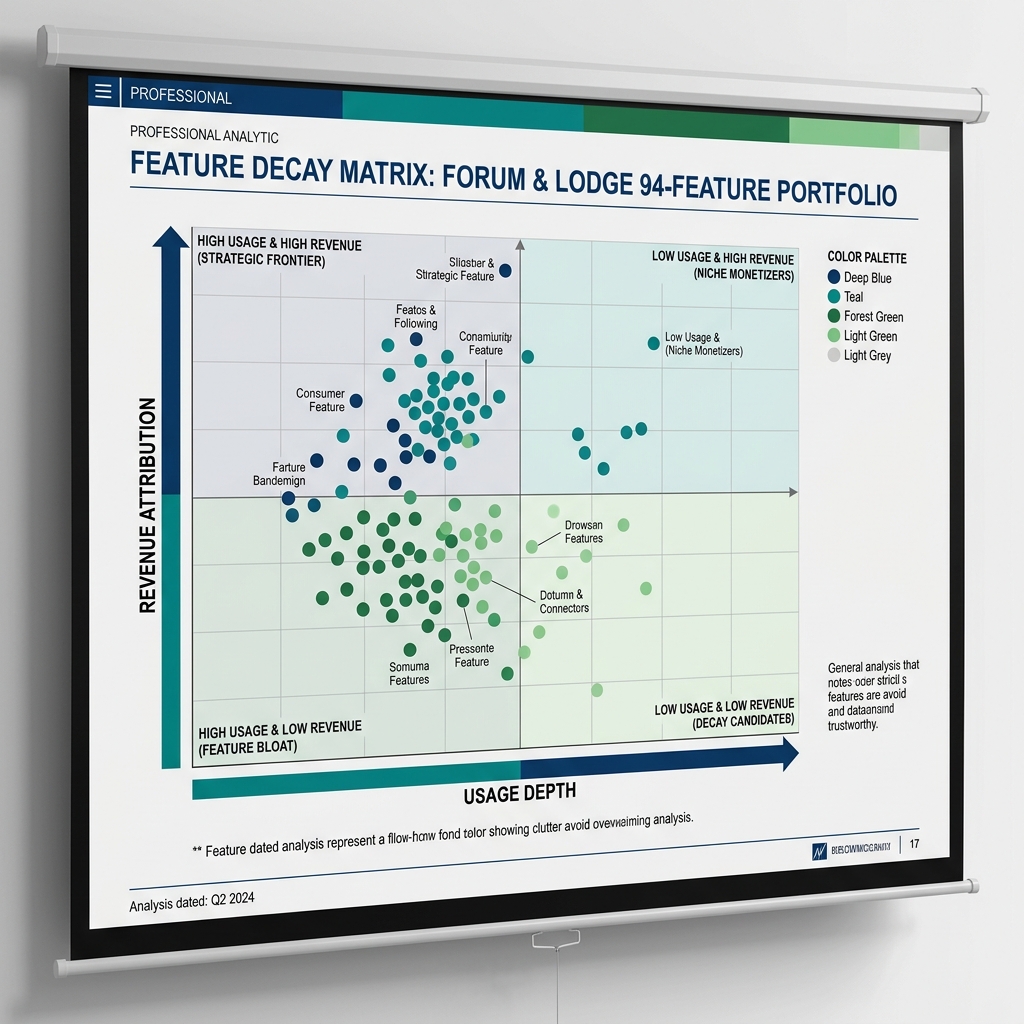

Usage analytics showed the cost. Of the 94 features, 38 were used by fewer than 5 percent of the 340 properties. The onboarding flow for a new property touched 27 of them. The sales team referenced 12 in the demo script. The top 15 features by usage generated 89 percent of the engagement. The bottom 38 generated less than 2 percent, and the maintenance, support tickets, and onboarding confusion they produced swamped the revenue they defended.

The cost was not just engineering time. It was pricing power. Forum & Lodge had three tiers. Property operators could not tell them apart because the feature list spilled across all three. Confusion is the enemy of willingness to pay. When your boutique hotel operator cannot remember which tier has the feature she actually uses, she defaults to the cheapest one. Feature accumulation without pruning discipline is a tax on every pricing conversation you have.

Clayton Christensen named this dynamic in The Innovator's Dilemma. Successful products accrete sustaining features until the core value proposition is buried. The failure is not a missing feature. The failure is a surplus of them. Every feature you keep past its usefulness is a feature your next customer has to learn, ignore, or work around.

Barry Schwartz called it the paradox of choice from the buyer side. Seth Godin called it clutter from the marketing side. Richard Rumelt, in Good Strategy Bad Strategy, called it the absence of a diagnosis. The diagnosis is simple. You do not have a feature problem. You have a pruning problem.

Exhibit: Feature decay matrix

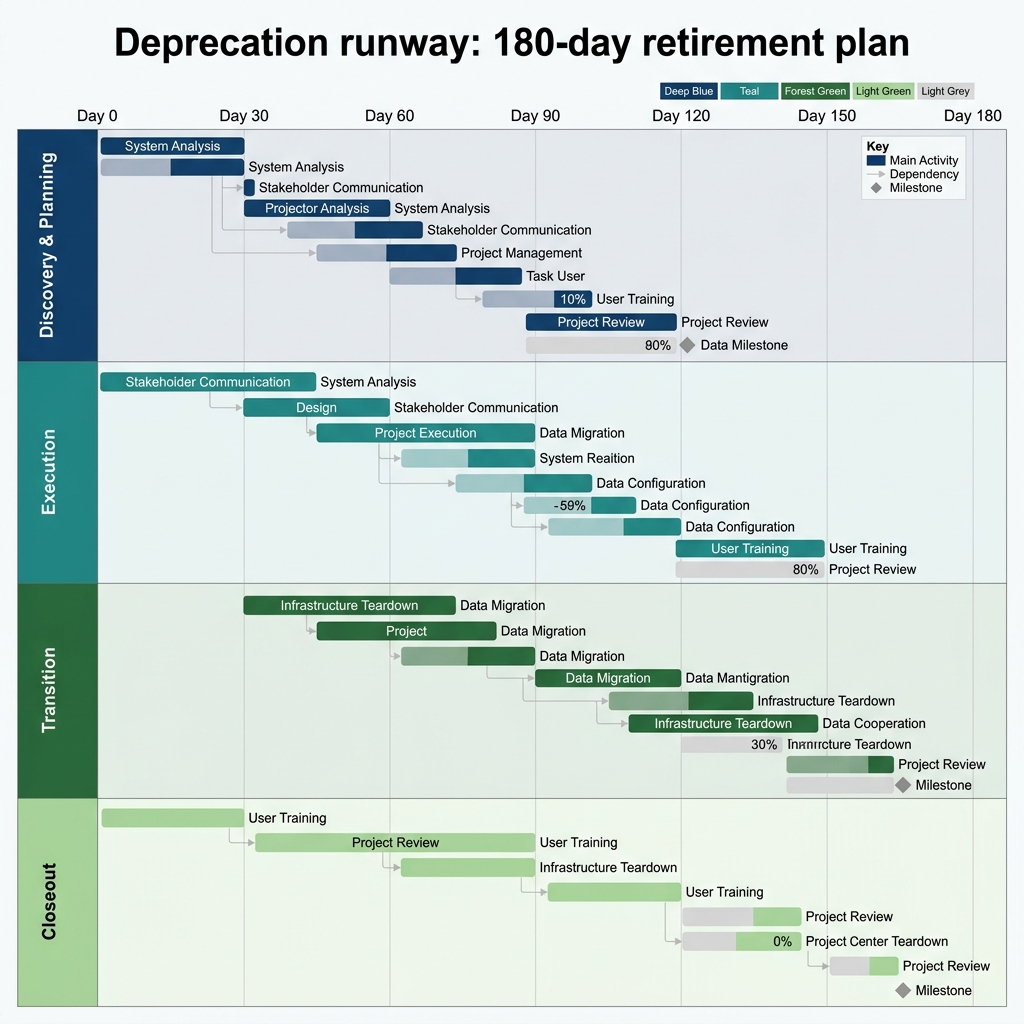

Exhibit: Deprecation runway Gantt

The four-part pruning framework

Part one: the annual monetization review

Every feature faces a review. Once a year. On the calendar. The calendar slot is the forcing function.

Event-driven pruning catches the obvious failures. A feature broke. A customer complained. A cost spike showed up in the cloud bill. Those are the worst 5 percent. The middle 30 percent (features that are fine, that nobody is mad about, that just quietly fail to earn their keep) never surface through incident reviews. They only surface through a calendar.

The structure is simple. Pick a month. In that month, the product team runs every feature in the portfolio through a standardized worksheet. One row per feature. Columns for 90-day active usage, revenue attribution, support ticket volume, engineering maintenance hours, and a three-point score on the job-user-dollar test. The output is a ranked list of prune candidates.
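The worksheet described above can be sketched as a small data structure plus a sort. This is a minimal illustration, not a prescribed tool: the field names, the sample rows, and the tie-break rule (highest maintenance cost first) are assumptions, and the score is assumed to be recorded as the number of job-user-dollar tests a feature passes.

```python
from dataclasses import dataclass

@dataclass
class FeatureRow:
    name: str
    active_accounts_90d: int   # accounts touching the feature in the last 90 days
    attributed_revenue: float  # revenue the feature generates or defends
    support_tickets: int       # ticket volume over the review window
    maintenance_hours: float   # engineering hours spent keeping it alive
    jud_score: int             # tests passed out of job, user, dollar (0 to 3)

def rank_prune_candidates(rows):
    """Weakest features first: lowest test score, then highest maintenance cost."""
    return sorted(rows, key=lambda r: (r.jud_score, -r.maintenance_hours))

# Illustrative rows only; the names and numbers are made up.
worksheet = [
    FeatureRow("feature_a", 210, 400_000.0, 12, 30.0, 3),
    FeatureRow("feature_b", 9, 0.0, 40, 120.0, 0),
    FeatureRow("feature_c", 42, 0.0, 15, 60.0, 2),
    FeatureRow("feature_d", 11, 0.0, 22, 45.0, 0),
]

ranked = rank_prune_candidates(worksheet)
print([r.name for r in ranked])
# ['feature_b', 'feature_d', 'feature_c', 'feature_a']
```

One row per feature keeps the review mechanical. The ranking is only a starting order for the conversation, not the decision itself.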

Basecamp writes about this cadence discipline in Shape Up and Getting Real. Fixed-time, variable-scope thinking applies to retirement as much as to building. You do not prune everything you could. You prune what fits in the six weeks you have allocated to it. The constraint produces better decisions than a list of every possible retirement ever attempted.

At Forum & Lodge, the first review took Ana's team 11 days. They scored all 94 features. The bottom 38 became candidates. They picked 14 to retire in the first cycle. The remaining 24 got a watch list label and came back in the next annual review. That is the rhythm. Retire some, watch some, defer some. The point is that every feature gets looked at, once a year, against the same rubric.

Part two: the job-user-dollar test

Every feature must defend three things. A job it does for the customer. A population of users who actually touch it. A dollar it generates or defends. One out of three is not enough. Two out of three makes it a watch list item. Three out of three means it stays. Zero or one makes it a prune candidate.

The job test is the hardest. You ask the question Clayton Christensen framed in Competing Against Luck. What is the customer hiring this feature to do, and what alternative are they leaving behind to use it? If you cannot name the job in one sentence, there is no job. Teresa Torres writes in Continuous Discovery Habits about weekly customer touchpoints. The touchpoints are the raw material for the job test. If no customer has mentioned the feature in discovery calls for 12 months, the job has evaporated.

The user test is telemetry. Active users per 90 days. Percentage of accounts touching the feature. Depth of usage (is it a one-click action or an embedded workflow). Marty Cagan in Transformed argues that the product operating model only works when product decisions are informed by real product data. The user test is the enforcement mechanism.

The dollar test is revenue attribution. Is the feature defending a specific segment of ARR? Is it feeding the meter? Is it a packaging leverage feature that pushes buyers into a higher tier? If you cannot name the dollar, the feature is a cost center masquerading as a product capability.

At Forum & Lodge, the job-user-dollar test surfaced a clear pattern. The loyalty engine, which Ana's team had launched three years earlier as a premium-tier differentiator, had a job (guest retention for independent hotels), had users (42 properties), but had no dollar. Zero properties had upgraded to the premium tier because of it. Zero had named it in a retention win. The feature scored two out of three. It went on the watch list. The next year it was retired. The premium tier got repackaged around a revenue management add-on that had passed all three tests.
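The decision rule in this part reduces to counting how many of the three tests a feature passes. A minimal Python sketch, assuming each test is recorded as a simple pass or fail (the function name and the labels are illustrative):

```python
def classify_feature(has_job: bool, has_users: bool, has_dollar: bool) -> str:
    """Apply the job-user-dollar rule: 3 passes keep, 2 watch, 0 or 1 prune."""
    passed = sum([has_job, has_users, has_dollar])
    if passed == 3:
        return "keep"
    if passed == 2:
        return "watch"
    return "prune"

# The loyalty engine at Forum & Lodge: a job, users, but no dollar.
loyalty_engine = classify_feature(has_job=True, has_users=True, has_dollar=False)
print(loyalty_engine)  # watch
```

A watch result is not a reprieve. The feature comes back in the next annual review against the same rule.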

Part three: the deprecation runway

Killing a feature without a communication plan is how you turn a prune into a churn event. The deprecation runway is the staged plan. Announce, degrade, replace, remove.

Announce. 90 to 180 days before removal, depending on integration depth. Email the affected accounts. In-product banner on the feature. Public changelog entry. Name the replacement, if any, and the migration path. Matthew Dixon in The Effortless Experience argues that the biggest driver of customer loyalty is the absence of effort. A surprise removal maximizes effort. A telegraphed removal minimizes it.

Degrade. Partway through the runway, start reducing investment visibly. No new enhancements. Bug fixes only on P0 issues. The feature stops moving. This signals to your team and your customers that the runway is real, not a bluff.

Replace. If there is a replacement (a newer feature, a third-party integration, a services-based workaround), make the migration path one-click where possible. Most customers will not migrate until they have to. The replacement must work before the removal.

Remove. On the announced date, pull the feature. Archive the code. Update the docs. Close the tickets. Publish a brief note confirming the removal. The removal itself should be anticlimactic by the time it happens.

Forum & Lodge ran a 120-day runway for the 14 features they retired in the first cycle. No account churned because of the removals. 11 of the 14 features had replacement paths (either a newer feature or a third-party integration). The three that did not got special handling. One had a phone call from Ana herself to the three accounts that relied on it. Support-ticket volume dropped 23 percent in the quarter after the runway completed, because the retired features had been generating a disproportionate share of the confusion. NPS rose 7 points over the following two quarters. Pruning, done well, made the product feel clearer to the customer, not smaller.
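The runway dates can be worked backward from the removal date. The sketch below assumes a 120-day runway like the one Forum & Lodge ran and places the degrade stage at the midpoint. The text only says "partway through," so the midpoint, the function name, and the example removal date are all assumptions. The replace stage has no fixed date in the text beyond working before removal, so it is left out.

```python
from datetime import date, timedelta

def runway_schedule(removal: date, runway_days: int = 120,
                    degrade_fraction: float = 0.5) -> dict:
    """Work backward from the removal date to the announce and degrade dates."""
    if runway_days <= 0 or not 0 < degrade_fraction < 1:
        raise ValueError("runway_days must be positive and degrade_fraction between 0 and 1")
    announce = removal - timedelta(days=runway_days)
    # Assumption: investment is visibly reduced at a fixed fraction of the runway.
    degrade = announce + timedelta(days=round(runway_days * degrade_fraction))
    return {"announce": announce, "degrade": degrade, "remove": removal}

# Hypothetical removal date, for illustration only.
schedule = runway_schedule(date(2025, 9, 1))
print(schedule["announce"], schedule["degrade"], schedule["remove"])
# 2025-05-04 2025-07-03 2025-09-01
```

Fixing the removal date first and deriving the rest makes it harder to compress the runway quietly later.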

Part four: the R&D reallocation discipline

This is the step most teams skip. You retired the feature. You saved the hours. Where did the hours go?

If the answer is "into the general backlog," you have not pruned. You have redecorated. The hours dissolve into roadmap drift, and the next pruning cycle will be politically harder because nobody can point to what the last one produced.

Name the reallocation before the prune. If retiring 14 features saves 180 engineering days a quarter, commit those days to a specific initiative with a specific monetization thesis. The reallocation is the receipt. It is what makes the prune credible to the CRO, the CFO, and the board.

Eric Ries in The Lean Startup framed this as pivot, persevere, or kill. The kill decision is only coherent when paired with a decision about where the freed resources go. A kill without a reallocation is a rounding error on the roadmap.

At Forum & Lodge, the 180 engineering days saved from the first pruning cycle went into one named initiative. A direct-booking conversion optimization engine aimed at reducing dependence on third-party channel manager commissions. The initiative had a thesis, a meter, and a target segment (the 120 properties that produced 60 percent of their own bookings through their own website). The reallocation was visible. The board could see the trade. The next pruning cycle earned political capital the first one did not.

Where this framework breaks

Three failure modes. Each one costs real money.

Failure one: politically protected features. A feature becomes untouchable because a specific executive championed it, or because one loud customer built a workflow on it, or because the founder mentioned it in the Series B pitch. The feature fails the job-user-dollar test but survives every review. The fix is governance. The pruning decision is made by artifact, not by seniority. The CPO signs. The pricing leader validates the revenue math. One customer success rep signs the runway. No executive veto outside that circle. If the feature is so strategic that it deserves to survive a failed test, the executive championing it must produce a new job-user-dollar thesis in writing, attach their name to it, and accept the next review as the referendum. Make the protection visible. Most protected features do not survive that sunlight.

Failure two: analytics blindness. The team cannot answer the user or dollar question because the telemetry was never built. Every feature review dissolves into "we think some people use it" and defaults to keeping the feature. The fix is telemetry before launch. No feature ships without a usage event and a revenue-attribution hypothesis. For the existing portfolio, run a telemetry sprint before the first review. Two weeks of instrumentation is cheaper than another year of accumulation. If you cannot measure it, you cannot prune it, and if you cannot prune it you will never stop shipping against it.
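The "telemetry before launch" rule can be enforced as a simple gate in the launch checklist. A minimal sketch, assuming a feature spec is a small record with two optional fields (the class, field names, and messages are hypothetical, not part of any real tool):

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class FeatureSpec:
    name: str
    usage_event: Optional[str] = None         # event emitted when the feature is used
    revenue_hypothesis: Optional[str] = None  # one sentence naming the dollar

def launch_blockers(spec: FeatureSpec) -> List[str]:
    """Return the reasons a feature may not ship yet; an empty list means clear."""
    blockers = []
    if not spec.usage_event:
        blockers.append("missing usage event")
    if not spec.revenue_hypothesis:
        blockers.append("missing revenue-attribution hypothesis")
    return blockers

# Illustrative spec with no telemetry defined.
draft = FeatureSpec(name="example_feature")
print(launch_blockers(draft))
# ['missing usage event', 'missing revenue-attribution hypothesis']
```

The gate does not judge whether the hypothesis is right. It only guarantees that the next review has something to measure.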

Failure three: sunsetting without communication discipline. The runway gets compressed or skipped. The team removes a feature with 14 days notice. Three power users post angrily on a public forum. Support is underwater for a month. The executive team decides pruning is too risky and the next cycle gets shelved. The fix is the runway template. 90 to 180 days, fixed stages, pre-drafted communication. Price increases fail because of communication, not the price. Deprecations fail the same way. The feature removal is a price increase on the perceived value of the product if you communicate it badly, and a gift of clarity if you communicate it well. Same removal. Different outcome.

The 30-60-90 sprint to build the pruning rhythm

Days 1 to 30. Score the portfolio. Pull 90-day usage data on every feature. Score the bottom quartile on the job-user-dollar test. Expect 20 to 40 percent of features to fail two or more of the three tests. Publish the ranked list to the product leadership team and the CRO. Name the top five candidates for retirement in the first cycle.

Days 31 to 60. Draft the runway and the reallocation. For each of the five candidates, produce a one-page deprecation plan. Announce date. Degrade date. Replacement (if any). Removal date. Named engineering reallocation for the hours saved. Walk the plan through customer success and sales. Adjust for affected accounts. Get sign-off from the CPO, the pricing leader, and one frontline CS rep.

Days 61 to 90. Ship the first retirement end to end. Send the announce communication. Start the in-product banner. Pause new investment on the deprecated feature. Monitor support tickets. Track affected account engagement. On day 90, publish the results. Accounts affected. Churn attributable. Hours freed. Initiative funded. That published result is the artifact that earns the right to run the next cycle.

FAQ

How often should we run a monetization review on existing features? Once a year is the floor. Twice a year if your R&D spend is above 15 percent of revenue. The review is calendar-driven, not event-driven. Event-driven pruning catches the worst 5 percent. The calendar catches the quiet middle 30 percent, which is where the real leakage sits.

What is the job-user-dollar test and how do we score it? Three questions. What job does the feature do? How many users touch it in 90 days? How much revenue is it generating or defending? Three-point scale each (strong, weak, absent). Two or more absent scores makes it a prune candidate. The test takes 10 minutes per feature with the usage data in hand.

What is a deprecation runway and why do we need one? Announce, degrade, replace, remove. Typical length 90 to 180 days. Without a runway, retirement is a surprise tax on the customer. With a runway, retirement reads as product maturity. Same removal. Different customer experience.

Who owns the prune decision? The CPO is accountable. The PM proposes. The pricing leader validates revenue math. One customer success rep signs the runway. No single-owner model works, because every function defends its own incentive. The artifact forces the trade.

What if the feature is used by one big customer? Name it. It is a custom feature pretending to be a product feature. Either move it into a services contract so the cost is priced in, or build a migration path and sunset it at renewal. Do not let one account subsidize a feature that pollutes the roadmap for everyone else.

How do we reallocate the hours we save? Name the reallocation before the prune. If you save 180 engineering days a quarter, commit them to a named initiative in the same quarter. Hours absorbed into general backlog evaporate. Hours named against a specific initiative become the receipt the prune earned.

Does this apply to B2C? Yes. Lighter runway (30 to 60 days for consumer products instead of 90 to 180). Same principle. Every surface costs you. Unused surfaces cost you more than unused code.

What is the fastest way to start? The 30-60-90 above. Score the portfolio. Draft the runway and reallocation. Ship the first retirement end to end. The muscle is 90 days. The rhythm is annual.

Questions, answered

How often should a product team run a feature monetization review?

Once a year is the floor. Twice a year is the target if your R&D spend is more than 15 percent of revenue. The review is calendar-driven, not event-driven. If you only prune when a feature is obviously broken, you only catch the worst 5 percent. The middle 30 percent (features that are fine but unloved) is where the real leakage sits, and those never show up in an incident review.

What is the job-user-dollar test for deciding which features to retire?

For every feature, answer three questions. What job does it do that the customer cannot get somewhere else? How many users touch it in a 90-day window? How much revenue is it defending or generating? Score each on a simple three-point scale (strong, weak, absent). A feature with two or more absent scores is a prune candidate. The test takes 10 minutes per feature with usage data in hand.

What is a feature deprecation runway and how long should it run?

A deprecation runway is the staged communication and removal plan you run for every feature you retire. Announce, degrade, replace, remove. Typical runway is 90 to 180 days depending on how deep the integration goes. Without a runway, retirement looks like a surprise tax to the customer, and you get churn you did not price in. With a runway, retirement looks like product maturity.

Who owns the decision to kill a feature: CPO, PM, sales, or finance?

The CPO is accountable. The PM for the surface area proposes. The pricing leader validates the revenue math. One frontline customer success rep signs off on the runway. No single owner works, because every function optimizes for its own incentive. Sales defends features their reps use to close. Engineering defends features they are proud of. Customer success defends features one loud account uses. The artifact forces the tradeoff.

What do you do when a feature is used by one big customer and almost no one else?

Name it explicitly. This is a custom feature pretending to be a product feature. Two options. Move it into a services contract with that customer so the cost is priced in, or build a migration path off it and sunset it along with the renewal. Do not let one account subsidize a feature that pollutes the roadmap for everyone else. That is feature hostage taking, and it compounds every year you tolerate it.

How do you reallocate the engineering hours saved from killing features?

Name the reallocation before the prune. If you retire 10 features and save 180 engineering days a quarter, commit those days to a named initiative in the same quarter. If the hours get absorbed into general roadmap drift, the savings evaporate and the next pruning cycle gets harder to defend politically. The prune is only credible when the reallocation is visible.

Does feature pruning apply to B2C or consumer subscription products?

Yes, with a lighter deprecation runway. Consumer products typically need 30 to 60 days of in-product communication, not 180. The principle is identical. Every surface costs you something, and unused surfaces cost you more than unused code. Signal noise, support load, and onboarding complexity all compound with feature count regardless of who the buyer is.

What is the fastest 30-60-90 way to start a feature pruning rhythm?

A 30-60-90 sprint. Days 1 to 30 pull usage data on every feature and score the bottom quartile on the job-user-dollar test. Days 31 to 60 pick five features to retire, draft the deprecation runway, and name the R&D reallocation. Days 61 to 90 ship the first retirement end to end and publish the results to the board. The muscle is 90 days. The rhythm is annual.

About the Author(s)

Emily Ellis is the Founder of FintastIQ and the Growth Operating System. She has 20 years of experience leading pricing, value creation, and commercial transformation initiatives for PE portfolio companies and high-growth businesses. She was previously a leader at McKinsey and BCG.

References

- Marty Cagan. Inspired. Wiley, 2018

- Eric Ries. The Lean Startup. Crown Business, 2011

- Teresa Torres. Continuous Discovery Habits. Product Talk, 2021

- Anthony Ulwick. Jobs to Be Done. Idea Bite Press, 2016

- Clayton M. Christensen, Scott Cook & Taddy Hall. Marketing Malpractice: The Cause and the Cure. Harvard Business Review, 2005