Legal Says It Stopped Being Defensible. Ops Says It's Faster Than Ever.

Governing a product roadmap when two or more buyers inside the same account have different jobs and different economic power. A four-part framework for mapping both buyers, scoring every item against both value matrices, sequencing by unblock-value, and publishing to each buyer in their own language. Practical sprints, failure modes, and the uncomfortable truth operators rarely admit.

The Operator's Guide to Roadmap Governance for Multi-Buyer Products

Your best account just churned. The Head of Legal said the product stopped being defensible. The Head of Ops said it had never been faster. Both were telling the truth. Your roadmap had been optimizing one of them and starving the other for three quarters.

TL;DR

- Multi-buyer products fail quietly. One buyer votes with feature requests, the other votes with renewal decisions, and a single-score backlog cannot see both.

- The fix is governance, not more data. Score every roadmap item against both buyer-value matrices, then sequence by unblock-value at the handoff.

- Publish the roadmap in each buyer's language. Same sequence, two framings. Sales stops translating in the room.

- Packaging follows governance. Legal-defensibility features belong in a Legal tier; workflow-speed features belong in an Ops tier or add-on.

- The most-requested feature is often the worst next build. Discipline beats instinct when two buyers disagree.

- Expect deal-cycle gains in quarter one and renewal gains by quarter three. Measure both.

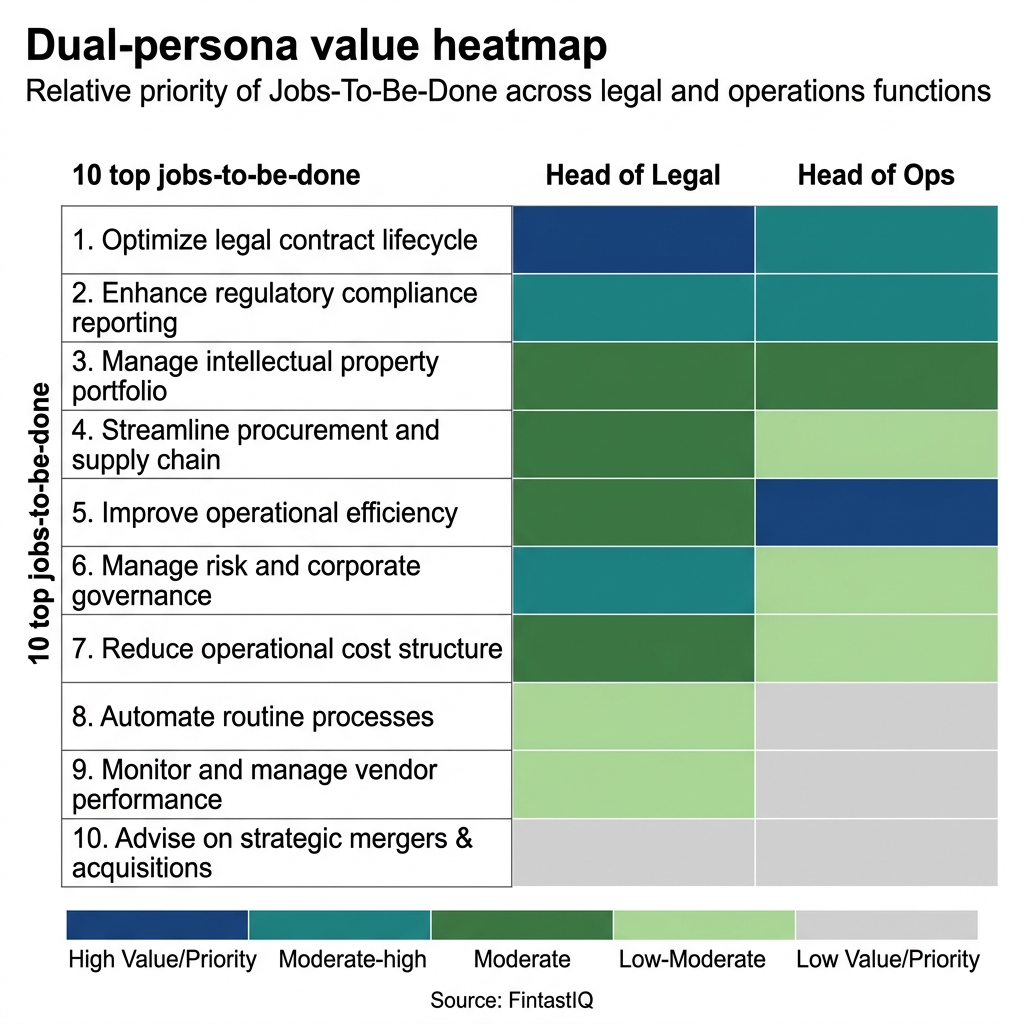

Exhibit: Dual-persona value heatmap

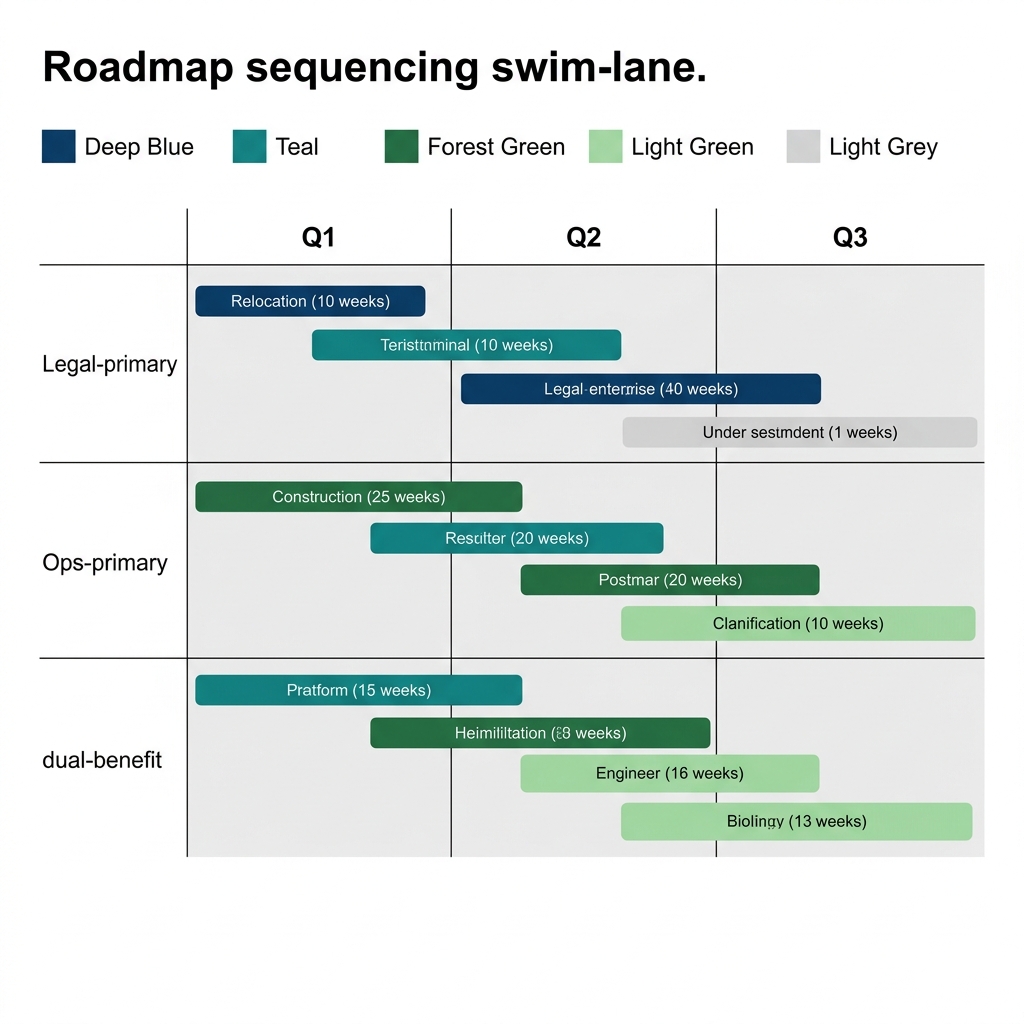

Exhibit: Roadmap sequencing swim-lane

The core problem: your backlog cannot see both buyers

Harbor Compliance Labs is a regtech platform for mid-market financial services firms. 180 people, $38M ARR, 220 customer accounts. Mateo, the CPO, ran a roadmap review every quarter with engineering and sales and produced what looked like a reasonable priority order.

The tool had two co-equal buyers in every account. The Head of Legal signed the contract and owned the audit trail. The Head of Ops ran the daily workflow and owned integration speed. When Mateo pulled the feature-request data, 62% of requests came from Ops. So Ops shaped the backlog. Four sprints in a row shipped integration improvements, API reliability work, and UI polish.

Then the renewal numbers moved. 78% of renewal-blocker feedback came from Legal: missing audit fields, soft evidence trails, weak permission scopes. Legal was not filing tickets. Legal was quietly telling procurement the product was no longer defensible at audit. 31% of new deals were stalling at the Legal-Ops handoff, the moment Ops loved the demo and Legal asked the one question the product could not answer.

The backlog had 47 open items. Scored by request volume, most of them served Ops. Scored by renewal risk, most of the urgent ones served Legal. No single RICE score saw both views at once. The product was being built for whoever wrote the most tickets, which was the buyer with the shorter tenure: 9 months for Head of Ops against 18 months for Head of Legal. Ops turnover produced request volume. Legal tenure produced renewal quietude. The data was lying by omission.

This is the multi-buyer failure mode. Governance has to see it before it shows up in revenue.

Harbor is not unusual. The same shape shows up in insurtech, where a Chief Underwriting Officer signs but claims-ops runs the daily tool. It shows up in supply-chain platforms, where a VP of Procurement writes the check but plant-floor operators submit the tickets. It shows up in any B2B2B distribution business where a channel partner carries one set of jobs and the end-customer carries another. The pattern is the same: volume from one persona, renewal gravity from another, and a single-score backlog that cannot resolve the asymmetry.

Part one: map both buyers and their jobs separately

Start with buyer-value maps. One per persona. Do not merge them early.

For Harbor Compliance Labs, Mateo ran two discovery rounds inside eight accounts. The Legal map produced five jobs in priority order: defend an audit, produce a risk register on demand, trace a decision back to its approver, evidence control coverage to regulators, and reduce outside-counsel spend. The Ops map produced a different five: reduce manual handoffs, integrate with the case management tool, cut error rates on high-volume workflows, onboard new analysts quickly, and meet SLA on time-to-resolution.

Notice what does not overlap. Legal's top job is defensibility, which is a state of readiness. Ops's top job is throughput, which is a rate of flow. These are different ontologies. If you collapse them into one backlog at this stage, you will lose the asymmetry that makes governance work.

For each job, rate three things on a one-to-five scale: importance to the buyer, current product performance, and willingness to pay more for improvement. That last column is the one most teams skip. It is the one that tells you where packaging lives.

The Harbor Compliance Labs Legal map showed high importance and low performance on audit-trace. The willingness to pay was a five. The Ops map showed high importance and medium performance on case-management integration, with willingness to pay at a three. Same product, two different economic pictures. If you only run one map, you build the three, not the five.
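The three-column rating can be captured in a few lines. This is a minimal sketch, not Harbor's actual tooling: the `JobRating` class, the `gap` measure (importance minus performance), and the numeric values standing in for "high", "medium", and "low" are all assumptions made for illustration. Only the two willingness-to-pay ratings (five and three) come from the case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JobRating:
    persona: str
    job: str
    importance: int          # 1-5, importance to the buyer
    performance: int         # 1-5, current product performance
    willingness_to_pay: int  # 1-5, willingness to pay more for improvement

    def __post_init__(self):
        # Reject ratings outside the one-to-five scale.
        for name in ("importance", "performance", "willingness_to_pay"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    @property
    def gap(self) -> int:
        # Hypothetical "opportunity gap": how far performance trails importance.
        return max(self.importance - self.performance, 0)


# Illustrative numbers: "high" = 5, "medium" = 3, "low" = 2 are assumptions.
ratings = [
    JobRating("Ops", "case-management integration", 5, 3, 3),
    JobRating("Legal", "audit-trace", 5, 2, 5),
]

# Rank by willingness to pay first, then by gap, highest first.
ranked = sorted(ratings, key=lambda r: (r.willingness_to_pay, r.gap), reverse=True)
for r in ranked:
    print(f"{r.persona:5} {r.job:30} gap={r.gap} wtp={r.willingness_to_pay}")
```

Sorting on willingness to pay before the gap is one possible design choice. It keeps the column most teams skip at the front of the conversation.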

The other output of this step is tenure-adjusted weighting. Legal at Harbor averaged 18 months in role. Ops averaged 9. Long-tenure buyers generate less ticket volume per month but carry more renewal weight per decision. Weight your request data by tenure before you believe it.
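One simple way to apply that weighting is to scale each persona's share of requests by its average tenure and then renormalize. The sketch below assumes a linear weight and assumes that the 38% of requests not filed by Ops all came from Legal; neither assumption comes from the Harbor case, and the function name is hypothetical.

```python
def tenure_weighted_shares(request_share, tenure_months):
    """Scale each persona's request share by average tenure, then renormalize."""
    weighted = {p: request_share[p] * tenure_months[p] for p in request_share}
    total = sum(weighted.values())
    return {p: w / total for p, w in weighted.items()}


# 62% of requests came from Ops; the Legal remainder is an assumption.
raw_share = {"Ops": 0.62, "Legal": 0.38}
# Average months in role, from the Harbor discovery rounds.
tenure = {"Ops": 9, "Legal": 18}

adjusted = tenure_weighted_shares(raw_share, tenure)
for persona, share in adjusted.items():
    print(f"{persona}: raw {raw_share[persona]:.0%}, tenure-weighted {share:.0%}")
```

Under these assumptions the picture flips: Ops drops from 62% of raw requests to roughly 45% of weighted demand, and Legal rises to roughly 55%. A different weighting curve would give different numbers, so treat the output as a prompt for discussion rather than a score.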

Two more inputs belong on each map. Escalation path: what happens inside the buyer's company when the product fails at their job. For Legal, a product failure reaches the audit committee. For Ops, it reaches a line manager and a queue. The severity of the escalation path is a proxy for renewal risk. Second input: alternative behavior. What does each buyer do today when your product does not solve the job. Legal at Harbor was quietly routing sensitive reviews through a second tool. Ops was building spreadsheets around the integration gaps. Map the workarounds. They tell you what the buyer pays for in time, headcount, or vendor spend, and that number is the ceiling on your willingness-to-pay column.

Part two: score every roadmap item against both buyer-value matrices

Now take the backlog. For Harbor Compliance Labs, that was 47 items. Each item gets two scores, one per buyer. A feature that scores high on both is rare and valuable. A feature that scores high on one and near zero on the other is the governance question. A feature that scores medium on both is usually a trap: generically useful, specifically urgent to no one.

Mateo's team scored all 47 against both maps. The distribution told a story. 19 items scored high for Ops and low for Legal. 11 scored high for Legal and low for Ops. 9 scored medium for both. 8 scored high for both. Only those 8 were obvious builds.

The governance work is the other 39. The scoring forces a conversation the team had been avoiding. Which buyer's low scores do you accept, and why? Accept Legal's low score on an Ops-heavy item only if it does not worsen defensibility. Accept Ops's low score on a Legal-heavy item only if it does not slow the workflow below a visible threshold. Write down the acceptance criterion. That written criterion is the artifact that survives leadership changes, sales-driven escalations, and quarterly panic.
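The bucket counts can be reproduced with a small classifier. This is a sketch under stated assumptions: the thresholds (four or above is "high", two or below is "low") and the placeholder scores are illustrative, and only the counts of 19, 11, 9, and 8 come from Harbor's backlog.

```python
from collections import Counter

HIGH = 4  # assumption: a score of 4 or 5 counts as "high"
LOW = 2   # assumption: a score of 1 or 2 counts as "low"


def bucket(ops_score, legal_score):
    """Place a backlog item into a governance bucket from its two buyer scores."""
    ops_high = ops_score >= HIGH
    legal_high = legal_score >= HIGH
    if ops_high and legal_high:
        return "high-both"
    if ops_high and legal_score <= LOW:
        return "ops-heavy"
    if legal_high and ops_score <= LOW:
        return "legal-heavy"
    return "medium-or-mixed"


# Placeholder (ops, legal) scores arranged to match Harbor's reported distribution.
backlog = [(5, 1)] * 19 + [(1, 5)] * 11 + [(3, 3)] * 9 + [(5, 5)] * 8

counts = Counter(bucket(ops, legal) for ops, legal in backlog)
governance_items = len(backlog) - counts["high-both"]

print(dict(counts))
print("items needing a written acceptance criterion or a kill decision:", governance_items)
```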

Two patterns emerged at Harbor. First, the medium-medium items were almost all backlog entropy: features that had survived four planning rounds because no one felt strongly enough to kill them. Mateo killed most of them in one meeting. Pricing maturity is measured by what you stop doing, and the same logic applies to product. Second, several items that had ranked high on request volume dropped sharply once they were scored for Legal impact. The loudest requests were frequently the worst next builds because they pulled the product away from defensibility without meaningfully improving throughput.

The scoring conversation has to happen with both buyers in the same room at least once per quarter. Not separately. The Harbor team tried one-on-one reviews for the first cycle and the scores drifted. Ops buyers scored Legal-heavy features low on relevance because they did not see the audit exposure. Legal buyers scored Ops-heavy features low because they did not see the SLA pressure. Putting both in the same conversation raised each persona's baseline score for the other persona's priorities, not because of politeness but because of new information. That joint calibration became a standing ritual.

Part three: sequence by unblock-value, not loudest-voice

Scoring tells you what is worth doing. Sequencing tells you what to do next. In a multi-buyer product, the sequencing rule is not highest-score-first. It is highest-unblock-value-first.

Unblock-value is the dollar amount of pipeline or renewal that is currently stalled because a specific item does not exist. At Harbor Compliance Labs, the team pulled Salesforce and ran a simple query: for every deal that had stalled in the last two quarters, which missing capability did sales cite? The answer was clustered. Three items accounted for 31% of stalled pipeline. All three were Legal-side: audit-export format, permission-scope granularity, and immutable decision logging. Not one had been in the top ten by request volume.
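The query amounts to grouping stalled deals by the capability sales cited and summing the dollars. A minimal sketch follows, assuming the stalled-deal records have already been exported from the CRM into plain dictionaries. The deal names, amounts, and the "bulk import" item are invented for illustration; only the three Legal-side capability names come from the case.

```python
from collections import defaultdict

# Hypothetical export of stalled deals; every amount here is made up.
stalled_deals = [
    {"deal": "A", "amount": 120_000, "missing": "audit-export format"},
    {"deal": "B", "amount": 90_000, "missing": "permission-scope granularity"},
    {"deal": "C", "amount": 150_000, "missing": "immutable decision logging"},
    {"deal": "D", "amount": 60_000, "missing": "audit-export format"},
    {"deal": "E", "amount": 40_000, "missing": "bulk import"},
]


def unblock_value(deals):
    """Sum stalled dollars per missing capability, largest first."""
    totals = defaultdict(int)
    for deal in deals:
        totals[deal["missing"]] += deal["amount"]
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


ranked_items = unblock_value(stalled_deals)
total_stalled = sum(d["amount"] for d in stalled_deals)
for capability, dollars in ranked_items:
    print(f"{capability:30} ${dollars:>9,} ({dollars / total_stalled:.0%} of stalled pipeline)")
```

The same grouping works for renewals at risk. Swap the stalled-deal export for renewal-blocker records and the amount for the contract value in question.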

The team re-sequenced 14 items. The three unblock-value items jumped to the front. Six Ops items that had been queued for the current sprint moved to the next quarter. Two items got cut entirely. Three Legal items that had been low-urgency got grouped into a single defensibility release.

Sequencing also surfaces dependencies. Some Ops improvements only deliver value once a Legal feature exists, because Legal's approval gate is the real constraint on the workflow. Sequencing Ops-first in that case produces work that sits unused. The dual-buyer view makes the dependency visible. The single-score view hides it.

One caution: unblock-value decides what goes first, not what fills the whole roadmap. If you sequence only by unblock-value, you will under-invest in the second buyer's base experience and eventually produce the opposite problem. Cap the unblock-value share of the roadmap at roughly 40% of engineering capacity per quarter. The rest goes to durable buyer-value, technical debt, and discovery. Harbor Compliance Labs adopted that cap after the first correction and kept to it.
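The cap can be enforced mechanically when planning a quarter. The sketch below uses a simple greedy pass: take unblock-value items in descending dollar order until the 40% budget is spent. The effort estimates, the 100 engineer-week capacity, the dollar values, and the "bulk import" item are all hypothetical.

```python
def plan_unblock_work(items, capacity_weeks, cap_share=0.40):
    """Greedily select unblock-value items without exceeding the capacity cap.

    items: list of (name, unblock_dollars, effort_weeks) tuples.
    Returns (selected names, weeks used, weeks left for everything else).
    """
    budget = capacity_weeks * cap_share
    selected, used = [], 0
    for name, _value, effort in sorted(items, key=lambda i: i[1], reverse=True):
        if used + effort <= budget:
            selected.append(name)
            used += effort
    return selected, used, capacity_weeks - used


# Hypothetical dollar values and effort estimates in engineer-weeks.
candidates = [
    ("immutable decision logging", 150_000, 16),
    ("audit-export format", 180_000, 12),
    ("permission-scope granularity", 90_000, 10),
    ("bulk import", 40_000, 8),
]

selected, used, remaining = plan_unblock_work(candidates, capacity_weeks=100)
print("unblock-value work:", selected)
print(f"{used} weeks on unblock-value, {remaining} weeks for durable value, debt, and discovery")
```

A greedy pass is the simplest option, not the optimal one. It can skip a large item and still fit a smaller one later, so review the result rather than treating it as final.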

Sequencing also forces a pricing question. If the three unblock-value items serve only Legal, and Legal is paying for a tier that already includes defensibility, you are doing renewal-protection work that does not expand revenue. If the three items serve a buyer who sits in a lower tier, the work creates a packaging lift: the new capability belongs in a higher bundle that Legal can now be moved into. Harbor discovered on the second re-sequencing pass that two of the three Legal items were tier-expanding. The work was repriced at the next renewal and contributed directly to the NRR lift. Packaging beats pricing, and sequencing is where packaging gets decided in practice, not in a pricing meeting six months later.

Part four: publish roadmap commitments to both buyers in their own language

Same roadmap, two framings. Not two roadmaps. That is the trap.

For Legal, the framing is risk-and-defensibility. Each quarter's release notes lead with control coverage, audit capability, and evidence integrity. The language is formal. The artifact looks like a governance update, not a changelog.

For Ops, the framing is speed-and-flow. The same release notes lead with workflow reduction, integration coverage, and SLA impact. The language is operational. The artifact looks like a shipped-features summary with measured throughput gains.

Mateo's team produced both documents per quarter. The underlying sequence was identical. The commentary was not. Sales stopped ad-libbing translations in the room. The customer-success team had two scripts, one per buyer, both tied to the same ground truth.

The signal that the governance was working: NPS rose 11 points for Legal and 6 points for Ops within two quarters. The deal cycle shortened 23% because the Legal-Ops handoff stopped producing silent objections. The $4.2M in ARR that had been stalled inside the 31% handoff-stuck cohort was unblocked in the first two quarters. The product did not get dramatically better. The story about the product got coherent.

Where it breaks: three failure modes

The Tenure Illusion. You weight requests by volume. The buyer with more turnover generates more requests. You build for turnover. The buyer with stability generates silent renewal risk you cannot see. Fix: weight by tenure and by renewal-stage feedback, not by ticket count.

The False Consensus. A feature scores medium-high on both maps and everyone agrees to build it. Nobody is excited. It gets built, it ships, and neither buyer references it in the renewal conversation. You just spent a sprint on roadmap entropy. Fix: require at least one buyer to rate the item a four or five on willingness to pay. Consensus is not a signal of value.

The Language Collapse. Engineering gets tired of translating and publishes a single technical changelog. Legal reads it and cannot find their work. Ops reads it and cannot find theirs. Both feel under-served despite a roadmap that was serving both. Fix: keep the two framings. The cost of maintaining them is lower than the cost of two disappointed buyers.
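The False Consensus fix is easy to turn into a gate at planning time. A minimal sketch, assuming each item carries a one-to-five willingness-to-pay rating per buyer; the function name and the sample ratings are hypothetical.

```python
def passes_consensus_gate(wtp_by_buyer, threshold=4):
    """Return True if at least one buyer rates willingness to pay at or above the threshold."""
    return any(score >= threshold for score in wtp_by_buyer.values())


# A medium-medium item that everyone agrees on but nobody would pay for.
print(passes_consensus_gate({"Legal": 3, "Ops": 3}))  # False

# A lopsided item that one buyer would clearly pay for.
print(passes_consensus_gate({"Legal": 5, "Ops": 2}))  # True
```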

30-60-90 sprint

Days 1 to 30. Run dual buyer-value discovery on six to ten accounts. Produce two maps. Score the existing backlog against both. Identify the medium-medium items and prepare to kill at least a third of them. Pull stalled-deal data and compute unblock-value per item.

Days 31 to 60. Re-sequence the roadmap. Cap unblock-value at 40% of capacity. Cut ruthlessly on entropy items. Draft the two framing documents for the current quarter's release. Brief sales on the dual narrative. Brief customer success on the two-script model.

Days 61 to 90. Ship the first re-sequenced release. Publish both framing documents. Measure three things: deal-cycle time, handoff-stall rate, and the tenure-weighted ratio of blocker feedback across the two buyers. Run a governance retro at day 90 and adjust the scoring weights.
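The three day-90 measurements can be computed from a small export. This is one possible reading, not a standard definition: the tenure-weighted blocker share below multiplies each persona's blocker count by average months in role, and every deal record and blocker count is invented for illustration.

```python
from statistics import median

# Hypothetical deals closed or stalled during the 90-day window.
deals = [
    {"cycle_days": 95, "stalled_at_handoff": True},
    {"cycle_days": 60, "stalled_at_handoff": False},
    {"cycle_days": 72, "stalled_at_handoff": False},
    {"cycle_days": 110, "stalled_at_handoff": True},
]

# Hypothetical renewal-blocker counts; tenure figures are Harbor's averages.
blockers = {"Legal": 14, "Ops": 4}
tenure_months = {"Legal": 18, "Ops": 9}


def median_cycle_days(deals):
    return median(d["cycle_days"] for d in deals)


def handoff_stall_rate(deals):
    return sum(d["stalled_at_handoff"] for d in deals) / len(deals)


def tenure_weighted_blocker_share(blockers, tenure_months, persona):
    """Share of tenure-weighted blocker feedback attributed to one persona."""
    weighted = {p: blockers[p] * tenure_months[p] for p in blockers}
    return weighted[persona] / sum(weighted.values())


cycle = median_cycle_days(deals)
stall_rate = handoff_stall_rate(deals)
legal_share = tenure_weighted_blocker_share(blockers, tenure_months, "Legal")
print(f"median cycle: {cycle} days, stall rate: {stall_rate:.0%}, Legal blocker share: {legal_share:.1%}")
```

Track the same three numbers each quarter. The trend matters more than any single reading.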

Frequently Asked Questions

What makes a product "multi-buyer" and why does that change roadmap governance?

A multi-buyer product has two or more people inside the same account with distinct jobs, distinct success metrics, and overlapping or conflicting feature preferences. Governance changes because a single priority score stops working. You need a shared sequence that honors both buyer-value curves without averaging them into mush. When one buyer signs and another uses, you are governing two purchase decisions and two renewal decisions at once. The roadmap has to visibly serve both or deals stall at handoff.

How is this different from standard RICE or weighted scoring?

RICE gives you one score per item. In a dual-buyer product, a feature can score high for the Head of Ops, near zero for the Head of Legal, and still be the wrong next build because it widens the gap between the two. Our framework scores items twice, then runs a sequencing check that looks for unblock-value at the Legal-Ops handoff. You are not maximizing throughput. You are minimizing the number of deals that stall because one buyer cannot defend the product to the other.

We are B2B SaaS and our buyer is obviously the CFO. Does this apply?

If only one person signs, uses, and renews, you do not have a multi-buyer product. Most products we see have at least a soft second buyer: a functional lead, a security reviewer, a procurement partner, or a downstream consumer of the output. Apply the framework if feature requests consistently come from one persona while renewal objections come from another. That asymmetry is the tell. If both signals come from one person, keep your current process.

How do you keep engineers from being whiplashed by two buyer backlogs?

You publish one sequenced roadmap. The scoring happens upstream. Engineering sees a single ordered list with clear jobs-to-be-done, not two competing lists. What changes is the framing document: each item carries a buyer-impact tag and a sequencing rationale so engineering knows why an item ranked where it did. Discipline lives in the governance layer. Execution stays linear. The moment two buyer backlogs reach engineering unreconciled, you have already lost the benefit of the framework.

What if the two buyers actively disagree on a feature?

That is the point of governance. Disagreement is information. You ask which buyer carries the contract-signing risk, which carries the daily workflow risk, and which carries the renewal risk. Then you sequence the item now, later, or never based on the asymmetric cost of being wrong. Document the call and share it with both buyers. Silent disagreement is what erodes trust. Explicit trade-offs, explained in each buyer's language, usually hold even when one buyer loses the round.

How does pricing and packaging fit this?

Packaging beats pricing here. If your Legal buyer needs audit defensibility and your Ops buyer needs workflow speed, those are not the same tier of the product. Bundle the audit-defensibility set for the Legal persona into a tier that can be sold and defended as such. Bundle the workflow-speed set for Ops into its own tier or add-on. Confusion is the enemy of willingness to pay. A roadmap that maps cleanly to bundles removes that confusion and gives sales two distinct stories to tell, one per buyer.

What metric tells us the governance is working?

Three signals. First, the share of deals stalling at the buyer-handoff should fall quarter over quarter. Second, net revenue retention should rise without churn masking it. Third, qualitative: a single item from the roadmap should be defensible in both buyers' languages without translation gymnastics. Leading indicators include cycle time from demo to signed contract and the ratio of renewal-blocker feedback across the two personas. If one buyer's blockers dominate six months in, the governance is drifting.

How long before we see results?

Two quarters is realistic for a mid-market product. Month one is discovery and buyer-value mapping. Month two is re-sequencing and publishing. Months three through six are when the first full renewal cohort passes through the new sequence. You will see deal-cycle shifts earlier, often inside the first quarter, because sales gets a coherent story. Renewal lift takes longer because the first cohort under the new roadmap has to reach its anniversary. Do not measure renewal impact before month six.

About the Author(s)

Emily Ellis is the Founder of FintastIQ and the Growth Operating System. She has 20 years of experience leading pricing, value creation, and commercial transformation initiatives for PE portfolio companies and high-growth businesses, and previously held leadership roles at McKinsey and BCG.
